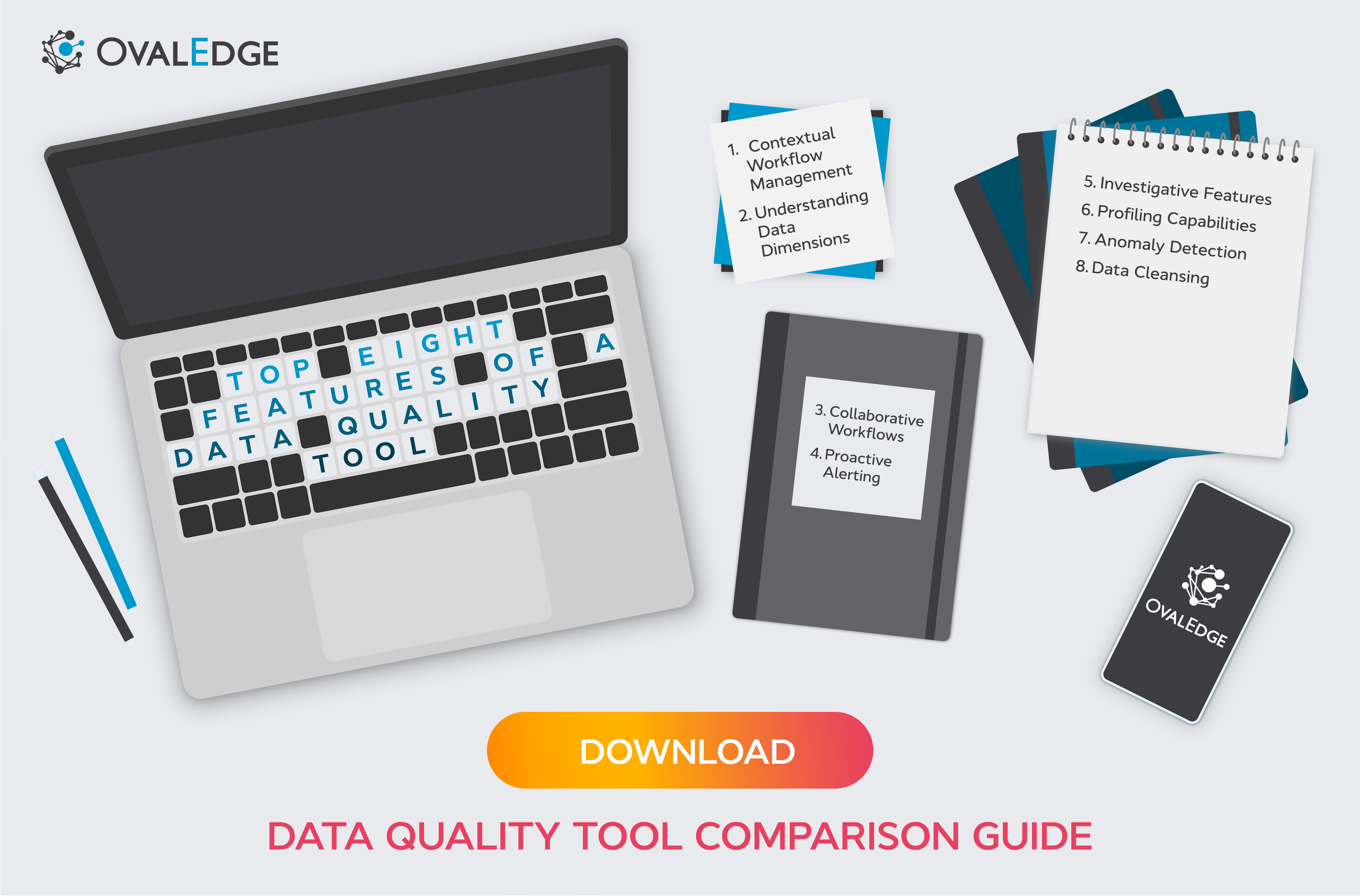

8 Essential Features Every Data Quality Tool Should Have

High-quality data is a cornerstone of data governance, but many companies still lack reliable ways to ensure it. The best way to boost data quality is with a dedicated tool.

In this guide, we break down the top data quality software features you should look for, how these tools support your broader data strategy, and why full-spectrum data quality matters for long-term governance success.

Why Data Quality Tools Matter More Than Ever

If your data isn’t high quality, it’s practically useless. Poor data quality leads to broken dashboards, misleading analytics, and business decisions that can cost organizations millions.

Most companies deal with two major types of data quality challenges:

1. Source-Level Issues

These occur when data is inaccurate, inconsistent, or poorly understood right at the point of creation.

2. Downstream Issues

These occur when data deteriorates during transformations, migrations, or integrations, often due to a lack of visibility, ownership, or monitoring.

A strong data quality tool, especially a full-spectrum solution, addresses both layers. It helps teams validate data at the source while catching and correcting issues downstream.

This is why the features of modern data quality platforms are designed to support both business and IT stakeholders.

Related Post: Best Practices for Improving Data Quality

How Can a Data Quality Tool Support Your Data Strategy?

A good data strategy shouldn’t assume that data will always need to be manipulated after the fact. The best outcome is to ensure data quality at the source, but this isn’t always possible and can sometimes be overly cumbersome.

That’s why you need a tool that provides both source-level and downstream capabilities.

Ensuring data quality involves navigating a complex ecosystem, where data is moved from one place to another and between numerous users and departments.

A data quality tool will help support various aspects of data quality in an organization, but to succeed, it must lead to three core outcomes:

- It must support collaboration between business and IT (Data Operations) departments.

- It must support the Data Operations team in maintaining the data pipeline and ecosystem.

- It must support data manipulation to achieve successful business outcomes.

In this blog, we'll walk you through the most critical features of a data quality tool that can tackle source-level and downstream issues, and demonstrate how these features support the core outcomes we've highlighted above.

Related Post: Data Governance and Data Quality: Working Together

Top 8 Features of a Full-Spectrum Data Quality Tool

These are the features every modern organization should expect, especially when evaluating data quality software features to support enterprise-wide governance.

1. Contextual Workflow Management

A good data quality tool ensures that when an issue is reported, it automatically reaches the right owner, not just the data team. These workflows must be contextual, meaning they consider:

- The data asset affected

- The type of issue

- The responsible team

- The urgency and business impact

This ensures faster resolution and real accountability.
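To make the idea of contextual routing concrete, here is a minimal sketch in Python. It is not how any particular product implements workflows; the `Issue` fields, the `OWNERS` map, and the team names are all hypothetical, chosen only to mirror the four context factors listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    asset: str       # the data asset affected
    issue_type: str  # the type of issue, e.g. "completeness"
    impact: str      # business impact: "low", "medium", or "high"


# Hypothetical ownership map: (asset, issue type) -> responsible team.
OWNERS = {
    ("sales.orders", "completeness"): "sales-ops",
    ("sales.orders", "accuracy"): "finance",
}
DEFAULT_OWNER = "data-operations"  # fallback when no specific owner is known


def route(issue: Issue) -> dict:
    """Pick an owner and a priority from the issue's context."""
    owner = OWNERS.get((issue.asset, issue.issue_type), DEFAULT_OWNER)
    priority = "urgent" if issue.impact == "high" else "normal"
    return {"owner": owner, "priority": priority}
```

In this sketch, an accuracy issue on `sales.orders` with high impact goes to the finance team as urgent, while an issue on an unmapped asset falls back to the data operations team.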

2. Understanding Data Dimensions

Most business users don't naturally understand what "bad data" actually means.

A strong tool educates them on critical data quality dimensions such as:

- Accuracy

- Completeness

- Consistency

- Validity

- Timeliness

- Uniqueness

- Integrity

When business users understand these dimensions, they report issues more precisely and make better judgments about how and when to escalate them.
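A few of these dimensions can be expressed as simple ratios, which may help show what a tool is measuring behind the scenes. The sketch below covers completeness, uniqueness, and validity only; the function names, the sample `customers` records, and the deliberately loose email pattern are illustrative assumptions, not part of any specific tool.

```python
import re

# Deliberately loose email pattern, for illustration only.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def _present(rows, field):
    """Values of `field` that are neither missing nor empty."""
    return [r[field] for r in rows if r.get(field) not in (None, "")]


def completeness(rows, field):
    """Share of rows where the field is filled in."""
    return len(_present(rows, field)) / len(rows) if rows else 0.0


def uniqueness(rows, field):
    """Share of filled-in values that are distinct."""
    values = _present(rows, field)
    return len(set(values)) / len(values) if values else 0.0


def validity(rows, field, pattern):
    """Share of filled-in values that match the expected format."""
    values = _present(rows, field)
    return sum(1 for v in values if pattern.match(v)) / len(values) if values else 0.0


customers = [
    {"id": 1, "email": "ana@example.com"},
    {"id": 2, "email": ""},
    {"id": 3, "email": "ana@example.com"},
    {"id": 4, "email": "not-an-email"},
]

print(completeness(customers, "email"))  # 0.75: one of four emails is missing
```

Here the same column scores differently on each dimension: three of four emails are present, but only two of the three present values are distinct, and only two of them look like valid addresses.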

3. Collaborative Workflows

A data quality tool must enable collaboration between the technical and non-technical stakeholders within the data pipeline and create a workflow between the various business entities to resolve specific issues.

This collaboration must work in both directions: business users and data operations teams should each be able to raise issues with the other.

A powerful collaborative analytics platform or data quality system should eliminate silos. Business users and data operations teams must be able to:

- Flag issues

- Provide context

- Validate fixes

- Track resolution

This cross-team collaboration is essential for preventing recurring issues and ensuring governance maturity.

When either side finds an issue, they can work together to fix it. As such, the workflow engine that enables this collaboration spans departments, roles, and responsibilities.

4. Proactive Alerting

For Data Operations teams, proactive alerting is essential: they need to be notified immediately when a problem arises.

Proactive alerting features minimize the impact of a data quality issue because problems can be dealt with quickly before they infiltrate further into a company's data ecosystem.

5. Investigative Features

Data Operations teams must have the capacity to investigate alerts and reports by looking into data pipelines, lineage, and more.

A modern data quality tool should include:

- Pipeline tracing

- Data lineage exploration

- Dependency analysis

These investigative features allow data teams to find the source of an issue instead of patching symptoms.
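As a rough illustration of lineage exploration, the sketch below walks a small dependency map upstream from a broken asset. The `UPSTREAM` map and its asset names are hypothetical; real tools typically build this graph from metadata rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets it is built from.
UPSTREAM = {
    "revenue_dashboard": ["orders_clean"],
    "orders_clean": ["orders_raw", "customers_raw"],
    "orders_raw": [],
    "customers_raw": [],
}


def trace_upstream(asset, lineage):
    """Return every asset that feeds `asset`, nearest first (breadth-first)."""
    seen, order = set(), []
    queue = deque(lineage.get(asset, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # already visited via another path
        seen.add(node)
        order.append(node)
        queue.extend(lineage.get(node, []))
    return order


print(trace_upstream("revenue_dashboard", UPSTREAM))
# ['orders_clean', 'orders_raw', 'customers_raw']
```

Listing the nearest dependencies first gives an investigator a sensible order in which to check each upstream asset for the root cause.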

6. Profiling Capabilities

Data profiling features enable users to ingest and understand the data in their ecosystem quickly and at scale.

In particular, profiling shows:

- What data exists

- How it behaves

- Whether it meets expectations

- Where outliers or inconsistencies exist

Profiling is one of the most powerful data quality platform features because it enables scale, especially when onboarding new sources.
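To show what a basic profile might contain, here is a small sketch that summarizes a single column. The `profile_column` function and the statistics it reports are assumptions made for illustration; commercial profilers usually report far more, such as patterns and distributions.

```python
from collections import Counter


def profile_column(values):
    """Summarize one column: volume, nulls, distinct values, and numeric range."""
    present = [v for v in values if v is not None]
    counts = Counter(present)
    # Treat ints and floats as numeric, but not booleans.
    numeric = [
        v for v in present
        if isinstance(v, (int, float)) and not isinstance(v, bool)
    ]
    return {
        "count": len(values),
        "nulls": len(values) - len(present),
        "distinct": len(counts),
        "most_common": counts.most_common(1)[0][0] if counts else None,
        "min": min(numeric) if numeric else None,
        "max": max(numeric) if numeric else None,
    }


print(profile_column([10, 20, 20, None, 35]))
```

Even a summary this small answers the questions above: how much data exists, how much of it is missing, and whether the observed range matches expectations.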

7. AI-Enabled Anomaly Detection

With anomaly detection, technical teams can use AI/ML-enabled features to identify data anomalies proactively.

Offered out of the box, these features use intelligent automation to search for discrepancies in the data.

Manual checks can’t keep up with modern data velocity.

AI/ML-powered anomaly detection:

- Automatically scans for unusual patterns

- Flags sudden changes

- Identifies discrepancies proactively

This is critical for enterprise-level data governance.
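The sketch below illustrates the general idea of flagging sudden changes. It uses a plain z-score against recent history, which is a simple statistical stand-in rather than the AI/ML models a commercial tool would use; the `is_anomalous` function, the threshold of 3.0, and the `daily_rows` figures are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it sits more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu  # any change from a constant history is unusual
    return abs(latest - mu) / sigma > threshold


# Hypothetical daily row counts for one table.
daily_rows = [1000, 1020, 980, 1010, 990]

print(is_anomalous(daily_rows, 400))   # True: a sudden drop in volume
print(is_anomalous(daily_rows, 1015))  # False: within normal variation
```

A check like this runs on every load without manual effort, which is the point of automating detection rather than relying on spot checks.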

8. Data Cleansing

From a business perspective, a data quality tool must enable organizations to correct issues with the data once they have been surfaced.

Data cleansing, often delivered as part of Master Data Management (MDM), gives users the means to stay on top of data as it's ingested and to fix errors such as duplicate records.

The best tools offer AI-based cleansing features that identify where errors exist and correct them.
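As a simple illustration of fixing duplication, the sketch below normalizes a key field and keeps the first record for each value. This is rule-based rather than AI-based, and the `deduplicate` function, the choice of `email` as the matching key, and the sample records are hypothetical.

```python
def normalize_email(email):
    """Trim whitespace and lowercase so equivalent emails compare equal."""
    return email.strip().lower()


def deduplicate(records, key="email"):
    """Keep the first record seen for each normalized key value."""
    seen, unique = set(), []
    for record in records:
        norm = normalize_email(record[key])
        if norm in seen:
            continue  # a record with this key was already kept
        seen.add(norm)
        unique.append({**record, key: norm})
    return unique


records = [
    {"name": "Ana", "email": "Ana@Example.com "},
    {"name": "Ana P.", "email": "ana@example.com"},
    {"name": "Bo", "email": "bo@example.com"},
]

print(deduplicate(records))
```

Keeping the first record is the simplest possible survivorship rule; in practice, teams often need to decide which version of a duplicated record to trust.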

Related Post: How to Manage Data Quality

The OvalEdge Solution

OvalEdge is a comprehensive data governance platform. Within OvalEdge is a set of dedicated data quality improvement tools that enable you to measure, assess, and evaluate the quality of your data and take proactive steps to fix any data quality issues on an ongoing basis.

OvalEdge provides all the capabilities, both at the source level and downstream, that you need to ensure high-quality data.

FAQs

1. What is a data quality tool?

A data quality tool is software that helps organizations measure, monitor, improve, and maintain clean, accurate, and reliable data across systems.

2. What are the most important data quality software features?

Key features include contextual workflows, data profiling, anomaly detection, proactive alerts, collaborative capabilities, lineage tracing, and cleansing tools.

3. How do I choose the right data quality platform?

Look for scalability, ease of use, workflow automation, AI-enabled detection, integrations, governance features, and support for both source-level and downstream issues.

4. How does a data quality tool improve decision-making?

Clean data leads to accurate analytics and reporting, reducing guesswork and enabling confident, data-driven decisions.

5. What is the difference between data profiling and data cleansing?

Profiling analyzes data to understand its condition. Cleansing corrects issues found during profiling or monitoring.

OvalEdge Recognized as a Leader in Data Governance Solutions

“Reference customers have repeatedly mentioned the great customer service they receive along with the support for their custom requirements, facilitating time to value. OvalEdge fits well with organizations prioritizing business user empowerment within their data governance strategy.”

Gartner, Magic Quadrant for Data and Analytics Governance Platforms, January 2025

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.

GARTNER and MAGIC QUADRANT are registered trademarks of Gartner, Inc. and/or its affiliates in the U.S. and internationally and are used herein with permission. All rights reserved.