How NLP Powers AI-Driven Analytics: Use Cases, Benefits, and Implementation Steps

Natural language processing is transforming AI-driven analytics by making data easier to access, understand, and act on using everyday language. Instead of relying on dashboards or technical queries, users can explore insights through conversational questions and plain-language explanations. This shift improves analytics adoption across business teams and reduces dependency on technical users. This blog explains the key capabilities of NLP in analytics, how it enhances decision-making, and where it delivers the most value.

Most organisations are investing heavily in AI-driven analytics, yet many still struggle to turn insights into everyday decisions.

According to the 2023 PwC analysis of Forrester data, 73% of data and analytics leaders are actively building AI technologies, and 74% are already seeing a positive impact from these investments.

The challenge is not whether AI works. It is whether people across the business can reliably access and understand the insights it produces.

For many business users, analytics remains difficult to use and even harder to trust. The same report highlights a critical gap: 61% of organisations say they cannot clearly explain how their AI-powered decisions are made. When insights feel opaque or require technical expertise to retrieve, adoption slows, and decision-making becomes reactive rather than routine.

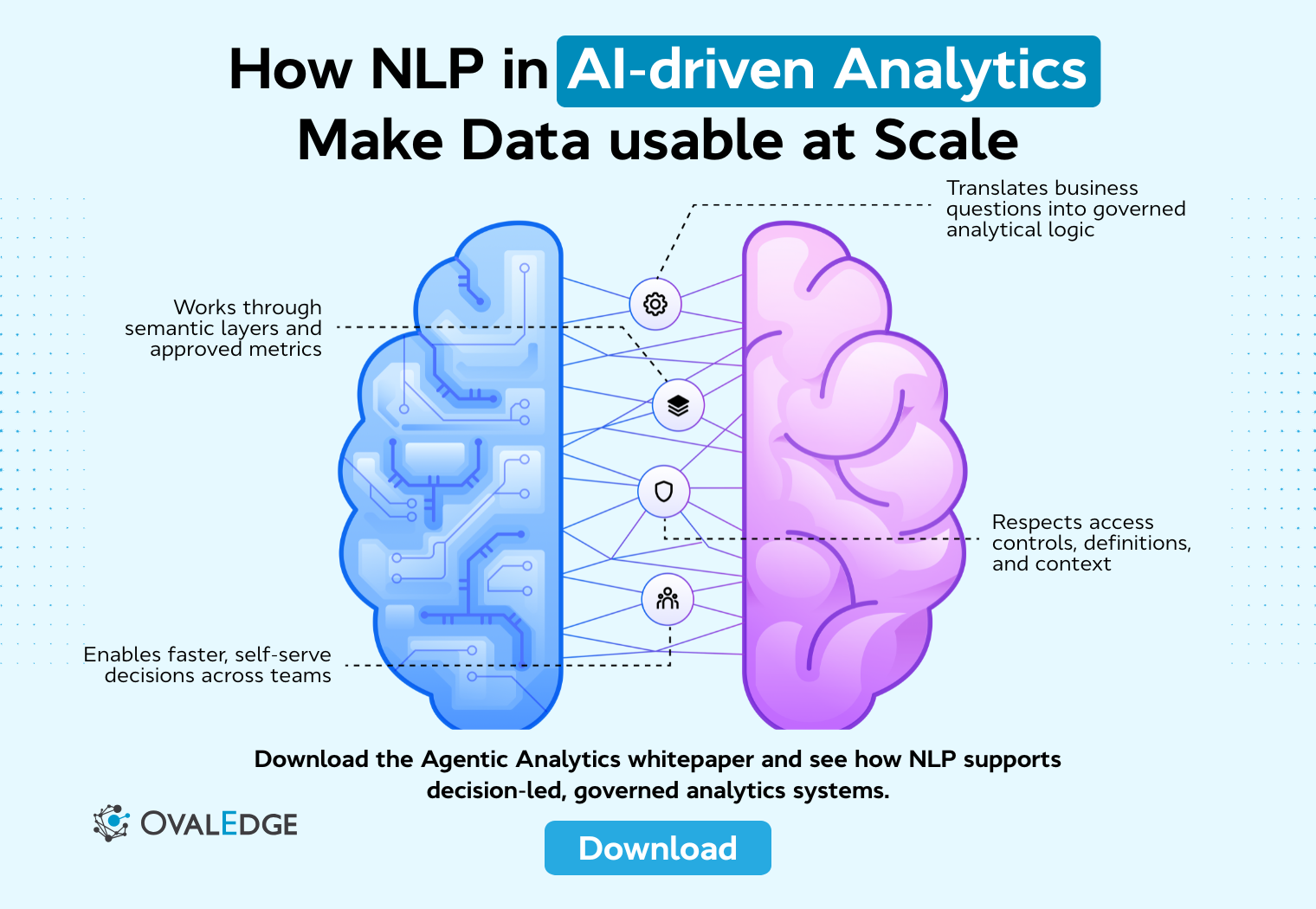

Natural language processing addresses this challenge, but not by turning analytics into a free-form chat interface sitting directly on top of data. In enterprise environments, NLP works through semantic layers, governed metadata, and approved business logic that translate natural language questions into consistent, auditable analytical queries. This approach allows users to interact with data in everyday language while maintaining accuracy, context, and control.

In this blog, we explore how NLP strengthens AI-driven analytics by improving accessibility and understanding without compromising governance.

Key aspects of NLP in AI-driven analytics

NLP brings together multiple capabilities that shape how users query, explore, and understand data in AI-driven analytics systems. These capabilities work in combination to translate human language into analytical intent, making insights easier to access, interpret, and act on across different user roles and contexts.

1. Natural language querying and data accessibility

Natural language querying allows users to interact with analytics systems using plain language instead of structured queries or complex filters. Users can ask direct questions about performance, trends, or comparisons and receive accurate responses without understanding database schemas or writing SQL.

This removes friction from data access, especially for non-technical users. By translating natural language into analytical logic, NLP makes insights available to a wider audience and reduces reliance on analysts for everyday questions. As a result, data becomes easier to explore and more embedded in daily decision-making.

In enterprise analytics platforms, these queries are resolved through a semantic layer that maps natural language to approved metrics, dimensions, and relationships rather than querying raw tables directly.
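
To make the semantic-layer idea concrete, here is a minimal Python sketch. It assumes a tiny, hand-written layer; the names `SEMANTIC_LAYER`, `build_query`, and the view `analytics.fact_orders` are hypothetical and not taken from any specific platform. The point is only that a request resolves to approved definitions or is rejected.

```python
# Hypothetical semantic layer: only approved metrics and dimensions exist here.
SEMANTIC_LAYER = {
    "metrics": {
        "revenue": "SUM(order_amount)",
        "order_count": "COUNT(DISTINCT order_id)",
    },
    "dimensions": {
        "region": "region_name",
        "product": "product_name",
    },
    "source": "analytics.fact_orders",  # assumed governed view, not a raw table
}


def build_query(metric: str, dimension: str) -> str:
    """Return SQL for an approved metric/dimension pair, or raise ValueError."""
    metrics = SEMANTIC_LAYER["metrics"]
    dimensions = SEMANTIC_LAYER["dimensions"]
    if metric not in metrics:
        raise ValueError(f"Unknown metric: {metric!r}")
    if dimension not in dimensions:
        raise ValueError(f"Unknown dimension: {dimension!r}")
    column = dimensions[dimension]
    return (
        f"SELECT {column}, {metrics[metric]} AS {metric} "
        f"FROM {SEMANTIC_LAYER['source']} GROUP BY {column}"
    )


print(build_query("revenue", "region"))
```

Because anything outside the layer raises an error instead of falling through to raw tables, every answer can be traced back to a definition someone approved.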

2. Contextual understanding and semantic matching

Effective NLP systems go beyond keyword matching. They understand context, intent, and meaning based on how terms are used within a business. This includes recognising synonyms, abbreviations, and variations in phrasing that refer to the same metric or concept.

Semantic matching allows the analytics platform to map vague or incomplete queries to the correct data elements. For example, the system can recognise that “sales” and “revenue” may refer to the same metric, or that “last quarter” implies a specific time range. This contextual awareness is critical for returning accurate and trustworthy results.
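
The two examples above can be sketched in a few lines of Python. This is an illustration under stated assumptions: the alias table `METRIC_ALIASES` is hypothetical, and “last quarter” is assumed to mean the previous calendar quarter (a fiscal calendar would need different boundaries).

```python
from datetime import date, timedelta
from typing import Optional, Tuple

# Hypothetical alias table: several business terms map to one canonical metric.
METRIC_ALIASES = {"sales": "revenue", "revenue": "revenue", "turnover": "revenue"}


def canonical_metric(term: str) -> Optional[str]:
    """Return the canonical metric name for a user term, or None if unknown."""
    return METRIC_ALIASES.get(term.strip().lower())


def last_quarter(today: date) -> Tuple[date, date]:
    """Return the first and last day of the calendar quarter before `today`."""
    current_q = (today.month - 1) // 3  # 0 for Jan-Mar ... 3 for Oct-Dec
    if current_q == 0:
        year, q = today.year - 1, 3
    else:
        year, q = today.year, current_q - 1
    start = date(year, q * 3 + 1, 1)
    if q == 3:
        end = date(year, 12, 31)
    else:
        # Day before the first day of the following quarter.
        end = date(year, q * 3 + 4, 1) - timedelta(days=1)
    return start, end


print(canonical_metric("Sales"))
print(last_quarter(date(2024, 5, 15)))
```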

3. Conversational interfaces and chat-based interactions

Conversational analytics interfaces allow users to explore data through guided, context-aware interactions rather than isolated or static queries. Instead of rebuilding filters or dashboards for every question, users can refine results, request breakdowns, or explore related metrics while the system retains context and applies approved analytical definitions in the background.

These interactions feel conversational for the user, but they are governed by semantic models that ensure consistency and accuracy. By reducing the effort required to navigate dashboards or configure reports, guided conversational querying lowers cognitive load and encourages deeper exploration of insights without compromising control or trust.

4. Automated narrative generation and insight summarization

NLP can translate analytical outputs into written explanations that describe what is happening in the data. Instead of presenting only charts or tables, analytics platforms can generate narratives that explain trends, anomalies, and key changes in plain language.

These summaries help users quickly grasp the significance of the data without interpreting visuals themselves. Automated narratives are particularly valuable for executives and stakeholders who need concise explanations rather than detailed dashboards.
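
As a rough illustration, a narrative can be as simple as a template filled from two numbers. The function `describe_change` below is a hypothetical, template-based sketch; production platforms may use far richer generation, but the principle of turning a computed change into a sentence is the same.

```python
def describe_change(metric: str, previous: float, current: float, period: str) -> str:
    """Turn a period-over-period change into one plain-language sentence."""
    label = metric.capitalize()
    if previous == 0:
        return f"{label} was {current:,.0f} in {period}; no prior value is available for comparison."
    change = (current - previous) / previous * 100
    if abs(change) < 0.05:  # rounds to 0.0%, so report it as flat
        return f"{label} was flat at {current:,.0f} in {period}."
    direction = "rose" if change > 0 else "fell"
    return (
        f"{label} {direction} {abs(change):.1f}% to {current:,.0f} in {period}, "
        f"from {previous:,.0f} in the prior period."
    )


print(describe_change("revenue", 100_000, 112_000, "Q2"))
```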

5. Entity recognition and intent detection in analytics

Entity recognition enables NLP systems to identify specific elements within a query, such as products, regions, customers, or time periods. At the same time, intent detection determines what the user wants to do with that information, such as comparing performance, analysing trends, or spotting anomalies.

Together, these capabilities ensure that the analytics system responds appropriately to user queries. By correctly identifying both the subject and the intent, NLP reduces misinterpretation and delivers more relevant insights.
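
A deliberately simple, rule-based sketch shows the two steps side by side. The vocabularies and patterns below are hypothetical placeholders; real systems typically use trained models and governed metadata rather than hard-coded sets.

```python
import re

# Hypothetical vocabularies; a real system would load these from governed metadata.
KNOWN_REGIONS = {"emea", "apac", "north america"}
KNOWN_METRICS = {"revenue", "orders", "churn"}
INTENT_PATTERNS = {
    "compare": re.compile(r"\b(compare|versus|vs)\b"),
    "trend": re.compile(r"\b(trend|over time|growth)\b"),
    "anomaly": re.compile(r"\b(anomal(y|ies)|unusual|spike|drop)\b"),
}


def _mentioned(vocabulary: set, text: str) -> list:
    """Return vocabulary entries that appear as whole words in the text."""
    return sorted(v for v in vocabulary if re.search(rf"\b{re.escape(v)}\b", text))


def parse_query(text: str) -> dict:
    """Extract entities (metrics, regions) and a single intent from a question."""
    lowered = text.lower()
    intent = next(
        (name for name, pattern in INTENT_PATTERNS.items() if pattern.search(lowered)),
        "lookup",  # default when no intent keyword is present
    )
    return {
        "intent": intent,
        "metrics": _mentioned(KNOWN_METRICS, lowered),
        "regions": _mentioned(KNOWN_REGIONS, lowered),
    }


print(parse_query("Compare revenue for EMEA vs APAC"))
```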

6. Multilingual support and localization capabilities

Advanced NLP-powered analytics platforms support multiple languages and regional terminology. This allows global teams to query data in their preferred language while maintaining consistent metric definitions and governance.

Localization also accounts for regional differences in phrasing, date formats, and business terminology. By supporting multilingual access, NLP helps organisations scale analytics adoption across geographies without fragmenting data understanding.

How NLP enhances AI-driven analytics

NLP enhances AI-driven analytics by changing how people interact with data and consume insights. It removes technical barriers and introduces a more natural way to explore information.

Here are the key ways NLP improves usability, adoption, and decision-making across analytics environments.

Reducing dependency on technical users and SQL

NLP reduces the need for analysts and data engineers to act as intermediaries for routine data questions. Instead of waiting for SQL queries, reports, or dashboard updates, business users can retrieve insights directly by asking questions in plain language.

This shift allows technical teams to focus on more complex analysis and data modelling, while everyday analytical needs are handled through self-serve interactions. Over time, this improves responsiveness and reduces bottlenecks across the organisation.

Enabling self-service analytics through plain language

Self-service analytics often fails when tools are difficult to learn or require specialised knowledge. NLP removes this barrier by allowing users to interact with data the same way they communicate with colleagues.

By lowering the learning curve, NLP increases confidence and adoption among non-technical users. Analytics becomes something people use regularly, not just when they have support or training.

Surfacing hidden trends through automated interpretation

NLP-powered analytics systems can automatically interpret patterns, changes, and anomalies within datasets. Instead of responding only to explicit questions, these systems can highlight notable shifts or unexpected behaviour in the data.

This automated interpretation helps users discover insights they may not have known to ask for. It also reduces the risk of important trends being overlooked due to limited time or analytical experience.

Bridging the gap between data and decision-makers

Decision-makers often need clear answers rather than raw data. NLP bridges this gap by presenting insights in simple, direct language that explains what changed and why it matters.

By translating analytical results into business-friendly explanations, NLP ensures that insights are understood and acted upon, not just generated. This makes analytics more effective at the point of decision-making.

Improving dashboard usability with voice and text input

Voice and text-based inputs make dashboards easier to use, especially in fast-paced or multitasking environments. Users can filter data, drill down into metrics, or ask follow-up questions without navigating menus or visual controls.

This flexibility improves usability and keeps users focused on interpreting insights rather than operating tools. It also supports more natural interactions across different devices and contexts.

Driving real-time alerts and proactive recommendations

NLP enhances alerts by explaining them in human language. Instead of sending raw notifications or threshold breaches, analytics systems can describe what changed, how significant it is, and what action may be required.

This context turns alerts into actionable insights. Proactive recommendations delivered in plain language help users respond faster and with greater confidence.

How to implement NLP in your analytics stack

Here are the key considerations for implementing NLP in your analytics stack in a way that improves usability without compromising accuracy or governance. Each step focuses on aligning natural language capabilities with real business needs, trusted data foundations, and day-to-day decision workflows.

1. Evaluating NLP use cases across business teams

Not every analytics workflow needs NLP. The highest impact comes from use cases where speed, accessibility, and simplicity matter more than deep analysis. Business teams that frequently ask similar questions or rely on analysts for quick answers are strong candidates.

Sales leaders checking pipeline health, executives reviewing performance, or operations teams monitoring daily metrics often benefit most from natural language access.

Actionable steps

- List the top 10 recurring questions business teams ask analysts.
- Identify which of these questions are simple, repeatable, and time-sensitive.
- Prioritise use cases where delays in insights slow down decisions.

2. Selecting NLP engines: open-source vs vendor-native

Choosing an NLP engine is a trade-off between control and ease of adoption. Open-source models offer flexibility and deeper tuning but require engineering effort. Vendor-native NLP features are faster to deploy but may limit customisation.

The decision should align with your organisation’s technical maturity, data sensitivity, and long-term analytics roadmap.

Actionable steps

- Assess whether your team has in-house ML or NLP expertise.
- Review data governance and compliance requirements before shortlisting options.
- Pilot one open-source and one vendor-native option to compare accuracy and effort.

3. Integrating NLP with BI tools and data warehouses

NLP is only as reliable as the data layer it connects to. Without a governed semantic layer, natural language queries can return inconsistent results even if the model is strong.

Integration ensures that NLP uses the same definitions, metrics, and hierarchies already trusted across the organisation.

Actionable steps

- Document core metrics, dimensions, and business definitions in a shared semantic layer.
- Ensure NLP queries resolve through this layer rather than raw tables.
- Test common queries across BI dashboards and NLP responses for consistency.
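
The consistency test in the last step can be automated with a small comparison. The sketch below assumes you can export the same metrics from a dashboard and from the NLP interface as plain dictionaries; `find_mismatches` and the sample values are hypothetical.

```python
def find_mismatches(dashboard: dict, nlp: dict, tolerance: float = 0.001) -> list:
    """Return metric names that are missing from the NLP results or differ
    from the dashboard value by more than a relative tolerance."""
    mismatches = []
    for name, expected in dashboard.items():
        actual = nlp.get(name)
        if actual is None:
            mismatches.append(name)
            continue
        scale = max(abs(expected), abs(actual), 1e-12)  # avoid division by zero
        if abs(expected - actual) / scale > tolerance:
            mismatches.append(name)
    return mismatches


dashboard_values = {"revenue": 1000.0, "orders": 50.0, "churn": 0.04}
nlp_values = {"revenue": 1000.0, "orders": 52.0}
print(find_mismatches(dashboard_values, nlp_values))
```

Running a check like this on a schedule makes drift between the two surfaces visible before users notice it.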

4. Customizing language models for domain-specific terms

Generic NLP models struggle with internal jargon, acronyms, and metric names. Customisation helps the system understand how your organisation talks about its data, which directly improves accuracy.

Without this step, users may lose trust after a few incorrect responses.

Actionable steps

- Create a glossary of internal terms, acronyms, and metric aliases.
- Feed historical reports, dashboards, and queries into the NLP training process.
- Regularly review misunderstood queries and update mappings accordingly.

5. Ensuring governance, data privacy, and access control

Natural language queries can feel informal, but the underlying data access must remain tightly controlled. NLP systems should enforce the same permissions as dashboards and reports.

Strong data governance prevents accidental data exposure and builds confidence among stakeholders.

For example, if a regional sales manager asks, “Show me revenue by customer for last quarter,” the NLP system will only return customers and revenue metrics permitted by that user’s role.

The query is resolved using approved revenue definitions from the semantic layer, and sensitive customer data outside the manager’s access scope remains hidden, even though the question itself is written in plain language.

Actionable steps

- Map NLP access rules directly to existing role-based permissions.
- Enable audit logs for all natural language queries.
- Test restricted queries using different user roles to confirm enforcement.
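
The steps above can be sketched as a single authorisation check that also writes an audit entry. Everything named here (`ROLE_PERMISSIONS`, `AUDIT_LOG`, `authorise`, the roles and regions) is a hypothetical illustration; in practice the permissions would come from your existing access-control system rather than a dictionary.

```python
from datetime import datetime, timezone

# Hypothetical role definitions, assumed to mirror existing dashboard permissions.
ROLE_PERMISSIONS = {
    "regional_sales_manager": {"metrics": {"revenue"}, "regions": {"EMEA"}},
    "finance_analyst": {"metrics": {"revenue", "margin"}, "regions": {"EMEA", "APAC"}},
}
AUDIT_LOG = []  # in-memory stand-in for a real audit store


def authorise(user: str, role: str, metric: str, region: str) -> bool:
    """Check a resolved query against the role's permissions and log the attempt."""
    perms = ROLE_PERMISSIONS.get(role, {"metrics": set(), "regions": set()})
    allowed = metric in perms["metrics"] and region in perms["regions"]
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "metric": metric,
        "region": region,
        "allowed": allowed,
    })
    return allowed


print(authorise("ana", "regional_sales_manager", "revenue", "EMEA"))
print(authorise("ana", "regional_sales_manager", "revenue", "APAC"))
```

Logging denied attempts as well as allowed ones is a deliberate choice: it gives the role-based tests in the last step something to verify against.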

6. Tracking adoption, usage patterns, and user feedback

NLP success is measured by usage, not availability. Monitoring how users interact with the system helps identify friction points, unmet needs, and opportunities for improvement.

Feedback loops ensure that NLP capabilities evolve alongside real user behaviour.

Actionable steps

- Track the most common natural language queries and failed requests.
- Collect direct feedback from early users through short surveys or interviews.
- Use insights from usage data to refine models and expand supported queries.
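
As a starting point for the first step, usage events can be summarised with the standard library alone. The event shape used below (a `query` string and a `resolved` flag) is an assumption for illustration; adapt it to whatever your platform actually logs.

```python
from collections import Counter


def summarise_usage(events: list) -> dict:
    """Summarise query events, each a dict with 'query' (str) and 'resolved' (bool)."""
    normalised = [(e["query"].strip().lower(), e["resolved"]) for e in events]
    all_queries = Counter(q for q, _ in normalised)
    failed = Counter(q for q, ok in normalised if not ok)
    total = len(normalised)
    return {
        "top_queries": all_queries.most_common(3),
        "top_failures": failed.most_common(3),
        "failure_rate": sum(failed.values()) / total if total else 0.0,
    }


events = [
    {"query": "Revenue by region", "resolved": True},
    {"query": "revenue by region ", "resolved": True},
    {"query": "ARR by cohort", "resolved": False},
    {"query": "arr by cohort", "resolved": False},
    {"query": "orders today", "resolved": True},
]
print(summarise_usage(events))
```

Repeated failures on the same phrasing, such as the unresolved "ARR by cohort" here, are usually the best candidates for new glossary entries.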

AI-driven analytics vs AI-driven analytics with NLP

AI-driven analytics and NLP-enabled analytics rely on similar data foundations and machine learning models. The difference lies in how users interact with insights and how easily those insights can be accessed and understood across the organisation.

| Aspect | AI-driven analytics | AI-driven analytics with NLP |
| --- | --- | --- |
| Primary interaction | Dashboards, reports, and filters | Conversational queries using plain language |
| User skill requirement | Requires familiarity with BI tools and data concepts | Minimal technical knowledge required |
| Query method | Predefined queries or manual configuration | Natural language questions and follow-ups |
| Accessibility for business users | Limited to trained users | Broad access across roles and teams |
| Insight exploration | Linear and dashboard-driven | Iterative and conversational |
| Context handling | Fixed definitions and filters | Understands intent, synonyms, and context through governed semantic definitions |
| Insight explanation | Visuals and metrics require interpretation | Written or verbal explanations in plain language |
| Speed to insight | Slower for ad hoc questions | Faster for on-the-fly decision-making |
| Adoption across teams | Concentrated within analytics teams | Distributed across business functions |
| Role in decision-making | Supports analysis after reporting | Supports decisions in real time |

Challenges & pitfalls to avoid

While NLP can significantly improve how people interact with analytics, it also introduces new complexities that organisations often underestimate. Natural language is ambiguous, business context varies, and scale adds operational pressure.

Without careful design and governance, NLP-driven analytics can produce confusing results, erode trust, and limit adoption instead of accelerating it.

Ambiguity in natural language inputs

Natural language is often imprecise. Users may ask vague or incomplete questions, assuming the system understands their context. Without strong clarification mechanisms, NLP systems can misinterpret intent and return inaccurate results.

This creates confusion and reduces trust, especially if users are unaware of how queries are being interpreted behind the scenes.

How to avoid this

- Build clarification prompts for ambiguous queries.
- Show users how their question was interpreted before returning results.
- Encourage follow-up questions to refine intent.
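
A clarification step along these lines might look like the sketch below. The glossary `TERM_CANDIDATES` and the function `interpret` are hypothetical; the idea is simply that a term with more than one governed meaning triggers a question instead of a guess, and a resolved term is echoed back to the user.

```python
# Hypothetical glossary: a user term mapped to the governed metrics it could mean.
TERM_CANDIDATES = {
    "sales": ["gross_revenue", "net_revenue"],
    "customers": ["active_customers"],
}


def interpret(term: str) -> dict:
    """Resolve a term, ask for clarification, or report that it is unknown."""
    candidates = TERM_CANDIDATES.get(term.strip().lower(), [])
    if len(candidates) == 1:
        return {
            "status": "resolved",
            "metric": candidates[0],
            "message": f"Interpreting '{term}' as {candidates[0]}.",
        }
    if len(candidates) > 1:
        options = " or ".join(candidates)
        return {"status": "clarify", "metric": None, "message": f"Did you mean {options}?"}
    return {
        "status": "unknown",
        "metric": None,
        "message": f"'{term}' is not a recognised metric.",
    }


print(interpret("Sales")["message"])
print(interpret("customers")["message"])
```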

Overreliance on generic NLP models

Generic, out-of-the-box NLP models lack awareness of business-specific terminology and metric definitions. Relying on them without customisation often leads to incorrect mappings and inconsistent results.

This is one of the fastest ways to lose user confidence in NLP-driven analytics.

How to avoid this

- Fine-tune models using internal documentation and historical queries.
- Maintain a living glossary of business terms and metric aliases.
- Regularly review failed or corrected queries to improve accuracy.

Scalability and performance in large data environments

As usage grows, NLP systems must handle high query volumes without performance degradation. Slow responses or timeouts discourage adoption, regardless of model accuracy.

Scalability issues often surface after initial success, when more teams begin relying on NLP for daily decisions.

How to avoid this

- Stress-test NLP performance under peak usage conditions.
- Optimise query resolution through semantic layers rather than raw data scans.
- Monitor latency and set performance benchmarks early.
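
For the latency benchmark, one common convention is to track a high percentile rather than the average, since a few slow responses are what users remember. The sketch below uses the nearest-rank method for the 95th percentile; the function names and the 800 ms target are illustrative assumptions, not recommended values.

```python
import math


def percentile(values: list, pct: float) -> float:
    """Nearest-rank percentile of a non-empty list; pct is in (0, 100]."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def breaches_benchmark(latencies_ms: list, p95_target_ms: float) -> bool:
    """Return True when the observed p95 latency exceeds the target."""
    return percentile(latencies_ms, 95) > p95_target_ms


samples_ms = [120] * 18 + [900, 2500]  # 20 hypothetical response times
print(percentile(samples_ms, 95))
print(breaches_benchmark(samples_ms, 800))
```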

Conclusion

NLP has moved from being a nice-to-have feature to a core requirement in AI-driven analytics. As analytics expands beyond data teams, the ability to interact with insights using plain language becomes critical for adoption, speed, and decision quality. NLP enables this shift by making analytics more intuitive, conversational, and accessible at scale.

However, the value of NLP depends on how well it is implemented. Strong semantic foundations, domain context, governance, and performance all play a role in determining whether NLP builds trust or creates confusion. Organisations that approach NLP as an integrated capability rather than a surface-level interface are more likely to see sustained impact.

Platforms such as OvalEdge support this approach by providing governed metadata, semantic context, and access controls that NLP-driven analytics relies on. Capabilities like askEdgi illustrate how natural language interactions can be grounded in enterprise governance, ensuring insights remain accurate, explainable, and aligned with approved business logic.

When NLP is grounded in trusted data foundations, it becomes a practical enabler of faster insights and more confident decision-making across the enterprise.

FAQs

1. What is NLP in AI-driven analytics?

NLP in AI-driven analytics enables users to ask questions and explore data using everyday language. It translates human language into analytical intent, allowing insights to be generated without relying on SQL, dashboards, or technical query structures.

2. How does NLP improve self-service analytics?

NLP improves self-service analytics by removing technical barriers to data access. Business users can retrieve insights through conversational questions, reducing dependence on analysts and enabling faster, more confident decision-making across teams.

3. Are NLP-powered analytics accurate?

NLP-powered analytics can be accurate when supported by strong semantic models, domain-specific training, and governance. Generic NLP models alone often lack business context, which can lead to misinterpretation and unreliable results.

4. Can NLP work with existing BI tools?

Yes. NLP can integrate with existing BI tools and data warehouses through a governed semantic layer. This ensures natural language queries resolve to consistent metrics, definitions, and access controls already used across the analytics stack.

5. Which teams benefit most from NLP-driven analytics?

Business users, executives, and operational teams benefit most from NLP-driven analytics. These groups often need quick, clear insights without navigating dashboards or learning technical tools, making conversational access especially valuable.

6. What are common use cases for NLP in analytics?

Common use cases include executive reporting, sales and pipeline analysis, marketing performance tracking, operational monitoring, and ad hoc business questions. NLP is most effective where users need quick answers without relying on dashboards or technical analytics expertise.
