
Enterprise Data Discovery Explained: Tools, Use Cases, and ROI

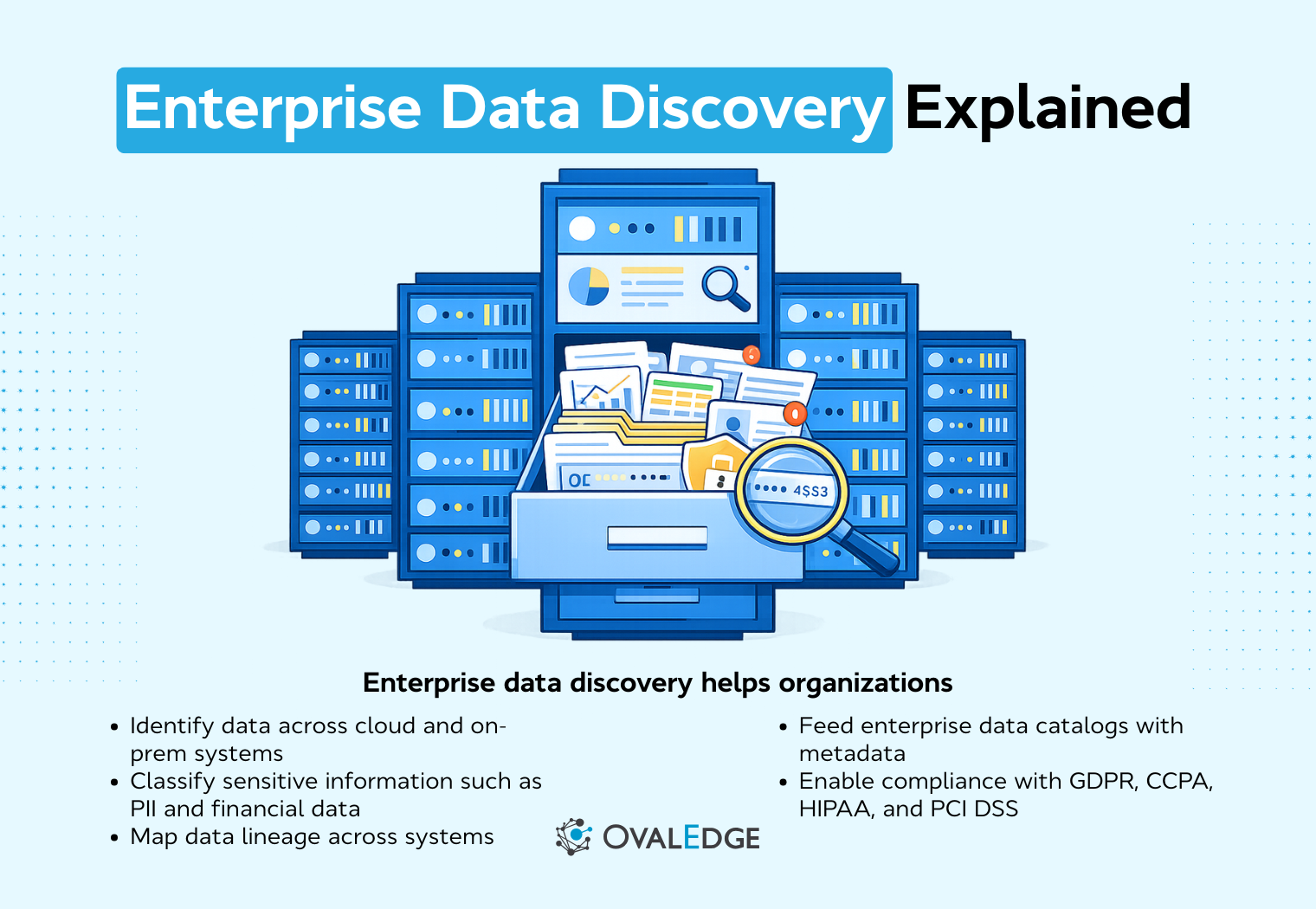

Enterprise data discovery helps organizations automatically identify, classify, and catalog data across distributed systems, improving visibility and control over enterprise data. It supports governance, compliance, and analytics by making it easier to locate sensitive, business-critical, and regulated data. Modern data discovery platforms use automation and AI to continuously scan environments, reducing manual effort and improving accuracy. With the right tools and implementation approach, enterprise data discovery becomes a foundational capability for scalable data governance and decision-making.

Most enterprises aren’t short on data. They’re short on visibility into it.

Data is scattered across cloud platforms, legacy systems, SaaS tools, and data lakes, making it difficult to track ownership, ensure compliance, or trust what’s being used for analytics and AI.

As data volumes continue to rise, governance gaps, storage costs, and compliance risks tend to grow with them.

To put this in perspective, Deloitte Insights reports that global data creation reached about 149 zettabytes in 2024 and continues to grow rapidly, underscoring the scale of the challenge organizations face in managing enterprise data effectively.

Enterprise data discovery helps address this by automatically identifying, cataloging, and classifying data across distributed environments. It gives organizations continuous visibility into their data landscape, supporting governance, compliance, analytics readiness, and smarter decision-making.

This blog explores how enterprise data discovery works, why it matters, and how to choose the right tools and implementation approach.

What is enterprise data discovery?

Enterprise data discovery is the process of automatically identifying, profiling, cataloging, and classifying data across distributed enterprise systems. It helps organizations gain visibility into their data, making it easier to locate business-critical, sensitive, and regulated data for governance, compliance, analytics, and decision-making.

Unlike traditional data discovery methods, which often rely on manual processes and limited visibility, modern enterprise data discovery leverages automation, AI-based classification, and integration with data catalogs to continuously scan and analyze data across multiple environments.

In essence, enterprise data discovery allows businesses to better understand what data they have, where it resides, and how it can be used, all while maintaining compliance with various regulatory frameworks. By automating the discovery process, organizations can not only improve their data governance but also unlock the full potential of their data for analytics, AI, and business insights.

How enterprise data discovery differs from traditional data discovery

Enterprise data discovery is fundamentally different from traditional data discovery, as it supports large-scale, automated, and continuous discovery across complex data environments. Here’s how the two compare:

- Enterprise-scale vs department-level discovery: Traditional data discovery often focuses on departmental data and small-scale projects. In contrast, enterprise data discovery is designed to scale across the entire organization, covering data spread across various silos, environments, and systems.
- Structured, semi-structured, and unstructured data coverage: While traditional discovery often focuses on structured data (like databases), enterprise data discovery can handle all types of data, from structured records to unstructured data like documents and emails.
- Integration with governance and compliance frameworks: Traditional data discovery may overlook governance concerns. Enterprise data discovery, however, integrates with governance tools to ensure compliance, data lineage, and audit readiness.
- Continuous vs one-time discovery: Traditional methods often operate on a one-time discovery basis, whereas enterprise data discovery is a continuous process, ensuring that data governance remains up-to-date and responsive to new data sources and regulatory changes.

Core components of enterprise data discovery

To understand how enterprise data discovery works, it’s important to know its core components. These key features ensure that the process is thorough, automated, and scalable:

- Data source scanning and connectivity: This is the first step, where data sources (such as databases, cloud platforms, and SaaS applications) are scanned to identify all available data.
- Metadata harvesting: Metadata, including technical, operational, and business metadata, is extracted from various data sources. This metadata is essential for data classification and governance.
- Automated data classification: Using AI and machine learning, enterprise data discovery software classifies data into categories like sensitive, regulated, or business-critical, ensuring that the right policies are applied.
- Sensitive data detection: Identifying personal or confidential data (e.g., PII, PCI, PHI) is crucial for compliance. Automated tools can pinpoint sensitive data across the organization, reducing risks.
- Data lineage mapping: Data lineage shows the flow of data from its origin to its current state, helping organizations track data changes and ensure data integrity.
- Policy mapping and tagging: Enterprise data discovery software applies policies and tags to data based on its classification, ensuring compliance with governance and regulatory frameworks.
- Data ownership and stewardship assignment: Enterprise data discovery platforms assign clear data owners and stewards to specific datasets. This improves accountability, strengthens data quality management, and ensures governance policies are properly enforced across the data lifecycle.

Why enterprise data discovery is critical for modern organizations

In an era where data is one of the most valuable assets for organizations, discovering, understanding, and managing it effectively has become critical for long-term success. Without the proper tools in place, organizations face risks related to data mismanagement, regulatory non-compliance, and missed opportunities for data-driven decision-making. Here’s why enterprise data discovery is so important:

Supporting regulatory compliance and risk reduction

With the increasing number of global data protection regulations, such as GDPR, CCPA, and HIPAA, enterprises must maintain strict control over sensitive data to avoid costly fines and penalties.

Enterprise data discovery helps ensure that businesses have a comprehensive view of their sensitive data and can meet compliance requirements by automating data classification and detecting personal information across systems. This ongoing visibility allows for proactive risk management, making audit preparation smoother and more defensible.

- GDPR, CCPA, HIPAA implications: Data discovery tools help companies identify personal data that falls under these regulations, ensuring they’re compliant with privacy laws and reducing the risk of legal repercussions.
- Audit readiness and defensibility: By continuously monitoring and classifying data, organizations can maintain an up-to-date audit trail, making it easier to demonstrate compliance during audits.
- Continuous sensitive data monitoring: Rather than relying on a one-time data inventory, enterprise data discovery provides continuous monitoring, so new data or changes to existing data can be quickly classified and secured.

Enabling data democratization at scale

As data becomes more critical for decision-making, organizations are increasingly focused on data democratization: making data accessible to everyone within the organization. To achieve this, however, businesses must ensure that data is trustworthy, accurate, and classified correctly.

Enterprise data discovery enables self-service analytics, giving teams the power to explore and analyze data while reducing dependency on centralized data teams. It ensures that the right people have access to the right data, empowering them to make informed decisions quickly and independently.

- Trusted data access: Automated discovery ensures that data is properly classified and governed, reducing the risk of unauthorized or inaccurate data being used for business decisions.
- Self-service analytics enablement: With a reliable data catalog and metadata, teams across the organization can access trusted datasets without needing to rely on IT or data engineering teams.
- Reduced dependency on centralized data teams: As data access becomes more decentralized, businesses can free up resources for other strategic initiatives, improving overall efficiency.

Improving data quality and operational efficiency

Data quality is a cornerstone of any successful analytics initiative. Enterprise data discovery tools help businesses identify duplicate data, orphaned data, and other inconsistencies, allowing them to clean up their data and reduce unnecessary storage costs.

By automating the discovery process, organizations can ensure that data quality is consistently maintained and operational efficiency is optimized.

- Duplicate data identification: Discovering and eliminating duplicate datasets ensures that businesses don’t waste resources on redundant data storage.
- Orphaned data cleanup: Data discovery identifies datasets that are no longer needed or are no longer in use, which can then be archived or deleted, reducing storage costs and improving efficiency.
- Reducing storage and infrastructure costs: By cleaning up data and eliminating unnecessary datasets, organizations can reduce their storage needs, leading to significant savings in cloud or on-prem infrastructure costs.
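To make duplicate and orphaned data detection concrete, here is a minimal Python sketch. It assumes datasets are small enough to hash in memory and that a last-accessed date is available for each one; the function names (`fingerprint`, `find_duplicates`, `find_orphaned`) and the sample dataset names are hypothetical, not taken from any specific platform.

```python
import hashlib
from collections import defaultdict
from datetime import date


def fingerprint(rows):
    """Order-insensitive content hash of a dataset given as an iterable of row tuples."""
    row_hashes = sorted(
        hashlib.sha256(repr(tuple(row)).encode("utf-8")).hexdigest() for row in rows
    )
    return hashlib.sha256("".join(row_hashes).encode("utf-8")).hexdigest()


def find_duplicates(datasets):
    """Group dataset names whose contents hash to the same fingerprint."""
    groups = defaultdict(list)
    for name, rows in datasets.items():
        groups[fingerprint(rows)].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


def find_orphaned(last_accessed, today, max_idle_days=365):
    """Return datasets that nobody has read for longer than max_idle_days."""
    return sorted(
        name for name, accessed in last_accessed.items()
        if (today - accessed).days > max_idle_days
    )


# Hypothetical sample data for illustration only.
datasets = {
    "crm.customers": [(1, "a@example.com"), (2, "b@example.com")],
    "backup.customers_2023": [(2, "b@example.com"), (1, "a@example.com")],
    "erp.vendors": [(1, "Acme")],
}
last_accessed = {
    "crm.customers": date(2024, 12, 1),
    "tmp.export_2021": date(2021, 6, 30),
}

duplicates = find_duplicates(datasets)
orphaned = find_orphaned(last_accessed, today=date(2025, 1, 1))
```

Exact-match hashing only catches byte-for-byte copies. Real platforms typically add fuzzy or schema-level matching, which this sketch does not attempt.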

Enterprise data discovery architecture: how it works

Enterprise data discovery is a complex, multi-step process that combines automation, AI, and integration with other data management systems to continuously scan and classify data across a wide range of environments.

Enterprise data discovery architecture overview

At a high level, enterprise data discovery works like a continuous metadata and classification layer that sits across your entire data ecosystem.

Most architectures follow a simple flow:

Data sources → discovery engine → metadata repository → data catalog → governance and analytics systems

Here’s what each layer does:

- Data sources: Where data lives, including databases, cloud warehouses, SaaS apps, data lakes, and file systems.
- Discovery engine: Connects to each source, scans what’s there, and extracts metadata, classification tags, and lineage signals.
- Metadata repository: Stores and organizes the discovery output so it can be queried, reused, and audited.
- Data catalog: Makes discovered data searchable and understandable for humans, with context like definitions, ownership, and sensitivity.
- Governance, compliance, and analytics systems: Use the metadata to enforce policies, support audits, and enable trusted reporting, AI, and downstream consumption.

The key idea: discovery shouldn’t end as a report. In a well-designed setup, discovery outputs become operational inputs for governance, compliance, analytics, and AI workflows.
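The layered flow above can be sketched in a few lines of Python. This is an illustration under simplifying assumptions, not any vendor's implementation: the `SOURCES` dictionary stands in for real connectors, and `DiscoveredAsset`, `discovery_engine`, and `MetadataRepository` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class DiscoveredAsset:
    """One discovered column plus the tags the engine attached to it."""
    source: str
    table: str
    column: str
    tags: set = field(default_factory=set)


# Hypothetical stand-in for real data sources: source -> table -> column names.
SOURCES = {
    "crm_db": {"customers": ["id", "email", "signup_date"]},
    "warehouse": {"orders": ["order_id", "customer_email", "total"]},
}


def discovery_engine(sources):
    """Scan every source and emit one asset per column, with naive name-based tags."""
    for source, tables in sources.items():
        for table, columns in tables.items():
            for column in columns:
                asset = DiscoveredAsset(source, table, column)
                if "email" in column:
                    asset.tags.add("PII")
                yield asset


class MetadataRepository:
    """Stores discovery output so catalog and governance layers can query it."""

    def __init__(self):
        self._assets = []

    def save(self, asset):
        self._assets.append(asset)

    def search(self, term):
        """Catalog-style lookup: assets whose column name contains the term."""
        return [a for a in self._assets if term in a.column]

    def with_tag(self, tag):
        """Governance-style lookup: assets carrying a given classification tag."""
        return [a for a in self._assets if tag in a.tags]


repo = MetadataRepository()
for asset in discovery_engine(SOURCES):
    repo.save(asset)

# A governance workflow could now act on every PII-tagged column.
pii_assets = repo.with_tag("PII")
```

The point of the design is that the repository, not a one-off report, is what downstream systems read from, so each new scan updates what the catalog and governance layers see.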

How enterprise data discovery architecture works

Here’s a step-by-step breakdown of how the architecture typically works:

Step 1: Data source integration

The first step in enterprise data discovery is integrating with various data sources across the organization. These can include a combination of cloud-based platforms, on-premise systems, and hybrid environments. By connecting to databases, SaaS applications, data lakes, and file systems, enterprise data discovery tools can access data wherever it resides, giving a comprehensive view of the organization’s data landscape.

- Cloud, on-prem, hybrid: The data discovery process must account for data stored across diverse environments. Whether in the cloud, on-premises, or a hybrid model, integration ensures full visibility of all data sources.
- Databases, SaaS apps, data lakes, file systems: This broad coverage ensures that structured, semi-structured, and unstructured data from every corner of the enterprise is included in the discovery process.

Step 2: Automated metadata extraction

Once the data sources are integrated, the next step is the automated extraction of metadata. Metadata is crucial for understanding the context of the data, such as its format, location, and relationships with other data. This metadata is collected from three key areas:

- Technical metadata: Information about the technical properties of the data, such as schema definitions, column names, and data types.
- Business metadata: Information that describes the business context, such as what the data represents and who the data owner is.
- Operational metadata: Information on the data’s usage, such as when it was last accessed or modified, and by whom.

By gathering and organizing this metadata, enterprises can understand not only the data itself but also how it’s used and its importance to the business.
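As a rough illustration of technical metadata extraction, the sketch below reads schema details from an in-memory SQLite database using Python's standard `sqlite3` module. SQLite is used only because it needs no setup. The `extract_technical_metadata` helper and the business and operational values are hypothetical examples of the three categories.

```python
import sqlite3

# In-memory database standing in for a real source system (assumption for the demo).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL, signup_date TEXT)"
)
conn.execute(
    "INSERT INTO customers (email, signup_date) VALUES ('a@example.com', '2024-01-05')"
)


def extract_technical_metadata(connection):
    """Return {table: [column descriptors]} read from SQLite's own catalog."""
    metadata = {}
    tables = connection.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info yields: cid, name, type, notnull, dflt_value, pk
        columns = connection.execute(f'PRAGMA table_info("{table}")').fetchall()
        metadata[table] = [
            {"name": name, "type": col_type, "nullable": not notnull, "primary_key": bool(pk)}
            for _cid, name, col_type, notnull, _default, pk in columns
        ]
    return metadata


technical = extract_technical_metadata(conn)

# Business and operational metadata usually come from people and logs, not the schema.
# The values below are hypothetical and only illustrate the three categories.
record = {
    "technical": technical["customers"],
    "business": {"owner": "sales-ops", "description": "Registered customers"},
    "operational": {
        "row_count": conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
    },
}
```

Other database engines expose the same kind of information through their own system catalogs, so a production connector would swap the SQLite-specific queries for the equivalent ones.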

Step 3: AI-driven classification and tagging

Next, AI algorithms are used to classify and tag the data. These algorithms can recognize patterns, apply predefined rules, and use machine learning to classify data accurately. The classification process includes:

- Pattern recognition: AI models recognize patterns within the data that can help in identifying categories, such as sensitive or business-critical data.
- NLP-based semantic detection: Natural Language Processing (NLP) is used to detect and understand the meaning behind unstructured data, such as documents, emails, or text files.
- Rule-based vs machine-learning-based classification: While rule-based methods use predefined patterns for classification, machine learning methods learn from data to classify it more accurately over time. A combination of both approaches helps ensure precision.
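The rule-based half of that comparison is easy to sketch. The example below uses deliberately simple regular expressions plus a Luhn checksum to tag sampled column values. The patterns, label names, and the 0.8 threshold are illustrative assumptions, and a machine-learning classifier would sit alongside rules like these rather than be replaced by them.

```python
import re

# Illustrative patterns only; production detectors use far more robust rules.
EMAIL = re.compile(r"^[\w.+-]+@[\w-]+(\.[\w-]+)+$")
US_SSN = re.compile(r"^\d{3}-\d{2}-\d{4}$")
CARD = re.compile(r"^\d{13,19}$")


def luhn_valid(number):
    """Luhn checksum, used to cut false positives on card-like digit strings."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        digit = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def classify_value(value):
    """Return a label for a single value, or None if no rule matches."""
    value = value.strip()
    if EMAIL.match(value):
        return "PII:email"
    if US_SSN.match(value):
        return "PII:ssn"
    digits = value.replace(" ", "").replace("-", "")
    if CARD.match(digits) and luhn_valid(digits):
        return "PCI:card_number"
    return None


def classify_column(samples, threshold=0.8):
    """Tag a column when at least `threshold` of sampled values share one label."""
    counts = {}
    for sample in samples:
        label = classify_value(sample)
        if label:
            counts[label] = counts.get(label, 0) + 1
    if not counts:
        return None
    best = max(counts, key=counts.get)
    return best if counts[best] / len(samples) >= threshold else None


column_tag = classify_column(["a@x.io", "b@y.org", "c@z.com", "d@w.net", "n/a"])
```

Requiring a share of samples to agree, rather than tagging on a single match, is one simple way to keep a stray email address in a free-text field from labeling the whole column as PII.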

Step 4: Data lineage and relationship mapping

Data lineage mapping is essential for tracking the flow and transformations of data across the organization. It shows the path that data takes from its source to its current state, helping organizations understand the relationships between different datasets. Key aspects include:

- Upstream/downstream impact: Data lineage maps help trace how changes in one dataset may affect others, which is crucial for troubleshooting and ensuring data accuracy.
- Field-level lineage: This refers to tracking changes at the granular field level, which is essential for high-stakes data environments where every detail matters.
- Change tracking: Keeping track of when and how data changes, along with who made the changes, provides transparency and helps identify potential issues.
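Upstream and downstream impact analysis amounts to a graph traversal. The sketch below models field-level lineage as directed edges and walks them breadth-first. The edge list and field names are hypothetical, and real lineage is usually parsed from SQL, ETL jobs, or query logs rather than written by hand.

```python
from collections import defaultdict, deque

# Hypothetical field-level lineage edges: (upstream field, downstream field).
EDGES = [
    ("crm.customers.email", "staging.contacts.email"),
    ("staging.contacts.email", "warehouse.dim_customer.email"),
    ("warehouse.dim_customer.email", "bi.churn_report.contact"),
    ("erp.invoices.amount", "warehouse.fact_sales.revenue"),
]


def build_graph(edges, reverse=False):
    """Adjacency map pointing downstream by default, or upstream when reversed."""
    graph = defaultdict(set)
    for upstream, downstream in edges:
        if reverse:
            graph[downstream].add(upstream)
        else:
            graph[upstream].add(downstream)
    return graph


def reachable(graph, start):
    """Breadth-first traversal returning every node reachable from `start`."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


downstream_of = build_graph(EDGES)
upstream_of = build_graph(EDGES, reverse=True)

# What breaks if the CRM email field changes?
impact = reachable(downstream_of, "crm.customers.email")
# Where does the report's contact field come from?
origins = reachable(upstream_of, "bi.churn_report.contact")
```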

Step 5: Continuous monitoring and policy enforcement

The final step is the ongoing monitoring and enforcement of data governance policies. Data discovery is not a one-time project; it's a continuous process that ensures new data is discovered and classified in real time. Automated alerts and triggers can notify stakeholders of any issues, such as new sensitive data or regulatory risks. Key components of this step include:

- Dynamic discovery: As new data sources are added or existing data changes, enterprise data discovery tools continuously scan and update the data catalog.
- Alerts and governance triggers: Real-time alerts help organizations stay proactive by notifying them of issues that require attention, such as the introduction of unclassified sensitive data.
- Real-time risk detection: Continuous monitoring helps identify potential risks early, enabling faster responses to data exposure and non-compliance.
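One simple way to picture continuous monitoring is as a diff between two catalog snapshots, with each difference raising an alert. The sketch below assumes snapshots shaped as `{asset_name: set_of_tags}`; the alert names and sample assets are hypothetical.

```python
SENSITIVE_TAGS = {"PII", "PCI", "PHI"}


def diff_snapshots(previous, current):
    """Compare two catalog snapshots and return a list of (alert_type, asset) tuples."""
    alerts = []
    for asset, tags in current.items():
        if asset not in previous:
            alerts.append(("NEW_ASSET", asset))
            if not tags:
                # New data that no rule has classified yet needs a human look.
                alerts.append(("UNCLASSIFIED", asset))
        elif (tags - previous[asset]) & SENSITIVE_TAGS:
            alerts.append(("NEW_SENSITIVE_TAG", asset))
    for asset in sorted(previous.keys() - current.keys()):
        alerts.append(("REMOVED_ASSET", asset))
    return alerts


# Hypothetical snapshots from two consecutive scans.
previous = {
    "crm.customers.email": {"PII"},
    "tmp.export.notes": set(),
    "legacy.users.phone": {"PII"},
}
current = {
    "crm.customers.email": {"PII"},
    "hr.payroll.ssn": {"PII"},
    "tmp.export.notes": {"PHI"},
    "mkt.leads.raw": set(),
}

alerts = diff_snapshots(previous, current)
```

In practice the alert list would feed a ticketing or stewardship workflow instead of staying in a variable, but the comparison logic is the same idea.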

Enterprise data discovery vs traditional data discovery

When it comes to discovering and managing enterprise data, organizations face a choice between manual, traditional methods and automated enterprise data discovery. Below is a comparison to help you understand the key differences and benefits of each approach:

| Aspect | Traditional Data Discovery | Enterprise Data Discovery (Automated) |
| --- | --- | --- |
| Process | Manual, ad hoc processes (spreadsheets, inventories) | Automated scanning, classification, and tagging using AI and ML |
| Scalability | Limited scalability for large-scale or complex data systems | Scales seamlessly across thousands of data assets in diverse systems |
| Data Coverage | Typically limited to structured data and small-scale use cases | Covers structured, semi-structured, and unstructured data across hybrid, cloud, and on-prem environments |
| Accuracy | Prone to human error and inconsistencies in tagging | Reduces human error with AI-based classification and tagging |
| Compliance & Risk Management | Ad-hoc, difficult to track sensitive data across systems | Continuously monitors sensitive data and ensures regulatory compliance |
| Speed | Slow, often one-time, manual audits | Continuous, real-time discovery and automated updates |
| Cost Efficiency | High resource investment (manual labor, time) | Reduced operational costs through automation and real-time updates |
| Governance | Limited governance tools, inconsistent policies | Integrated governance features (role-based access, stewardship workflows) |
| Automation | No automation, entirely manual process | Fully automated, with real-time monitoring and policy enforcement |
| Customization | Highly dependent on manual adjustments | Customizable through AI models and machine learning for data classification |

When hybrid models make sense

While automated data discovery is ideal for most large enterprises, there are cases where a hybrid approach may be necessary. Here’s when to consider combining manual and automated discovery:

- Initial discovery + ongoing automation: Perform an initial manual discovery to inventory critical data and set up governance frameworks, then automate continuous monitoring and updates.
- High-risk domain-specific review: For especially sensitive or high-risk domains (e.g., financial data or healthcare data), manual review may be necessary for final validation, with automated discovery flagging high-risk data for review.

Enterprise data discovery vs enterprise data catalog

Enterprise data discovery and enterprise data catalog platforms are closely related, but they serve different primary roles. Confusing the two can lead to gaps in governance strategy or incomplete tool evaluations.

At a high level, discovery focuses on finding and classifying data, while the catalog focuses on organizing, governing, and enabling access to that data.

| Dimension | Enterprise Data Discovery | Enterprise Data Catalog |
| --- | --- | --- |
| Primary role | Identifies, profiles, and classifies data across systems | Organizes, documents, and enables governed access to data |
| Automation focus | Automated scanning, sensitive data detection, metadata extraction | Search, business metadata management, stewardship workflows |
| Governance contribution | Detects sensitive or regulated data and flags risk | Applies policies, access controls, and lifecycle management |

Enterprise data discovery is responsible for building an accurate and current inventory of data assets. It detects sensitive data, extracts metadata, and maps lineage. The enterprise data catalog then provides a structured layer where that metadata is searchable, enriched with business context, and tied to governance processes.

How they work together in enterprise environments

Discovery feeds the catalog: Automated discovery pipelines continuously scan environments and push metadata, classification tags, and lineage information into the catalog.

The catalog enables governance: The catalog makes data understandable and searchable. It allows data stewards to define business terms, assign ownership, and apply policies.

Governance enforces policies: Governance workflows use discovery and catalog insights to enforce access controls, monitor compliance, and manage data lifecycle policies.

In mature enterprise environments, discovery, cataloging, and governance operate as an integrated system rather than isolated tools. Discovery ensures visibility, the catalog provides structure and usability, and governance ensures accountability and compliance.

Key features to look for in enterprise data discovery software

Selecting enterprise data discovery software comes down to how well it scales, automates classification, and integrates with governance workflows. The right platform should provide broad visibility while keeping data usable, secure, and compliant.

Broad data source connectivity

Discovery platforms should connect across cloud, on-prem, hybrid, and SaaS environments to ensure full data visibility. Strong API support also helps bring custom or legacy systems into the discovery scope without heavy engineering effort.

Advanced sensitive data detection

Effective discovery tools automatically identify sensitive data such as PII, financial records, or health information. Custom classification models help tailor detection to industry-specific regulatory and business requirements.

AI-powered metadata enrichment

AI-driven tagging improves data searchability and usability by linking technical metadata with business context. This reduces manual documentation effort and helps both technical and business users find trusted datasets faster.

Integrated governance workflows

Discovery becomes more valuable when it ties directly into governance processes like access control, stewardship, and policy enforcement. Built-in audit trails and workflows help maintain compliance and operational accountability.

Scalability and performance

Enterprise discovery platforms must handle large-scale environments without slowing operations. Distributed architecture and performance monitoring ensure continuous discovery remains efficient as data volumes grow.

When enterprises need enterprise data discovery

Enterprises usually invest in data discovery when they hit a point where teams can no longer rely on tribal knowledge or manual documentation to understand what data exists, where it lives, and whether it’s safe to use.

Here are the most common triggers:

- Regulatory compliance pressure like GDPR, HIPAA, PCI DSS, SOX, or industry-specific audit requirements that demand clear visibility into sensitive data, retention, and access.
- Cloud migration or modernization efforts where you need to inventory current data, understand dependencies, and avoid moving redundant, risky, or low-value datasets.
- AI and analytics rollouts that require trustworthy datasets with clear definitions, quality signals, and context so models and dashboards don’t run on conflicting or misunderstood data.
- Mergers and acquisitions when new business units introduce unfamiliar systems, duplicate data, and inconsistent naming or controls.
- Governance initiatives where you need to operationalize ownership, lineage, classification, and policy enforcement across teams, tools, and environments.

Best data discovery tools for enterprises

There isn’t a single “best” enterprise data discovery platform. The right choice depends on your data environment, governance maturity, compliance needs, and analytics goals. Below is a practical breakdown of widely used platforms grouped by primary strengths.

Enterprise-grade governance platforms

1. OvalEdge

OvalEdge positions enterprise data discovery as part of a broader AI-driven governance operating model. The platform continuously scans distributed systems, identifies critical and sensitive data, maps lineage, enriches metadata with business context, and converts governance policies into enforceable controls.

Best for: Enterprises looking for AI-powered data discovery tightly integrated with governance, lineage, quality, and policy enforcement.

Key features:

- Continuous, automated discovery across cloud, on-prem, and SaaS: Always-on scanning across the full data estate so new datasets, fields, and changes don’t slip through.
- AI agent-driven classification and sensitive data detection: AI agents continuously scan and classify data across systems using 150+ out-of-the-box connectors, identifying sensitive data and helping assess business criticality.
- Column-level lineage with impact analysis and change tracking: Field-level lineage to understand upstream and downstream dependencies, plus tracking of schema and pipeline changes over time.
- Business glossary alignment and contextual metadata enrichment: Maps technical assets to business terms, owners, domains, and definitions so data is understandable, not just discoverable.
- Integrated data quality monitoring and anomaly detection: Ongoing checks for freshness, completeness, schema drift, and unusual patterns that could break reporting or downstream models.
- Policy enforcement workflows with exception management and audit tracking: Built-in governance actions like approvals, exceptions, evidence capture, and audit trails tied directly to discovered assets.


2. Collibra

Collibra is an enterprise governance solution built for compliance-heavy environments. Its strengths are deep governance workflows, business glossary support, and strong lineage capabilities, though complexity can be higher for early-stage programs.

- Best for: Large enterprises with mature governance programs and strong compliance requirements.
- Key capabilities: Deep governance workflows, business glossary integration, strong lineage, and enterprise-scale cataloging.
- Limitations: Can feel complex for teams just starting their data governance journey.

3. Alation

Alation focuses on data cataloging with strong behavioral metadata and collaboration features. Its discovery capabilities support broader governance and analytics goals, particularly in data-driven organizations.

- Best for: Data-driven organizations focused on catalog adoption alongside discovery.
- Key capabilities: Strong search experience, behavioral metadata, collaboration features, and governance integration.
- Limitations: Discovery capabilities often work best when paired with broader governance initiatives.

Cloud-native data discovery platforms

4. Microsoft Purview

Microsoft Purview is designed to help organizations discover, classify, and manage data across on-premises, multicloud, and SaaS environments. It provides centralized cataloging, sensitivity labeling, lineage tracking, and policy enforcement to support regulatory compliance and trusted analytics.

- Best for: Enterprises heavily invested in the Microsoft Azure ecosystem.
- Key capabilities: Native cloud integration, automated scanning, lineage mapping, and unified governance across Azure data services.
- Limitations: Works best within Microsoft-centric environments.

5. Atlan

Atlan is a cloud-native platform that emphasizes collaborative metadata management and active discovery. It integrates with modern data stacks and suits teams focused on self-service analytics and agile governance.

- Best for: Cloud-first teams wanting a modern, collaborative data workspace.
- Key capabilities: Automated metadata ingestion, active metadata approach, collaboration features, and strong integrations with modern data stacks.
- Limitations: Some advanced governance capabilities may require additional configuration.

Compliance-driven discovery solutions

6. Informatica

Informatica is a comprehensive data management suite with strong discovery, data quality, and governance components. It performs well in large-scale environments with complex compliance requirements.

- Best for: Enterprises prioritizing large-scale data management and regulatory compliance.
- Key capabilities: Strong automated discovery, data quality integration, lineage tracking, and enterprise-grade governance tooling.
- Limitations: Deployment and licensing complexity can be higher than newer cloud-native platforms.

7. BigID

BigID prioritizes sensitive data discovery and privacy risk analysis. It is especially useful for organizations focused on regulatory reporting, data protection, and risk monitoring.

- Best for: Privacy, security, and compliance-focused organizations.
- Key capabilities: Advanced sensitive data discovery, privacy risk analysis, regulatory reporting, and data security insights.
- Limitations: Primarily compliance-focused rather than broad analytics enablement.

Pro Tip: For a more detailed comparison, read the top 10 Data Discovery Tools guide.

Enterprise data discovery implementation framework

Implementing enterprise data discovery works best as a phased initiative rather than a large one-time rollout. A structured approach helps organizations minimize disruption, build stakeholder trust, and gradually expand coverage across the data ecosystem.

Phase 1: Define scope and governance objectives

Start by aligning discovery goals with compliance requirements, risk priorities, and business outcomes. Clear stakeholder alignment early prevents confusion around ownership, policies, and success metrics.

Phase 2: Identify critical data domains

Focus first on high-impact domains such as customer, financial, or operational data. Prioritizing critical datasets helps demonstrate early value while reducing compliance and operational risks.

Phase 3: Deploy automated scanning

Begin with a pilot covering selected data sources to validate classification accuracy and metadata quality. A phased onboarding strategy ensures stable performance as more sources are added.

Phase 4: Integrate with enterprise data catalog and governance workflows

Discovery becomes operational when metadata flows into cataloging and governance processes. Policy mapping, access controls, and stewardship assignments help convert visibility into actionable governance.

Phase 5: Monitor, measure, and optimize

Track KPIs like classification coverage, discovery accuracy, and policy compliance. Continuous tuning reduces false positives and ensures discovery remains effective as data environments evolve.
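As a rough sketch of how two of these KPIs might be computed, the example below calculates classification coverage and, against a manually reviewed sample, precision and recall for sensitive-data detection. The function names and sample figures are hypothetical, and the exact KPI definitions will vary by organization.

```python
def classification_coverage(total_columns, classified_columns):
    """Share of scanned columns that carry at least one classification tag."""
    return classified_columns / total_columns if total_columns else 0.0


def precision_recall(predicted, actual):
    """Compare predicted sensitive columns against a manually reviewed sample.

    Low precision means many false positives; low recall means missed sensitive data.
    """
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    return precision, recall


# Hypothetical numbers from a pilot scan.
coverage = classification_coverage(total_columns=200, classified_columns=150)

predicted_sensitive = {"email", "ssn", "notes", "zip"}   # flagged by the tool
reviewed_sensitive = {"email", "ssn", "phone"}           # confirmed by stewards
precision, recall = precision_recall(predicted_sensitive, reviewed_sensitive)
```

Tracking precision over successive tuning rounds gives a direct read on whether false positives are actually falling.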

Common enterprise data discovery challenges and how to overcome them

Even with the right data discovery platform, implementation is rarely frictionless. Enterprise environments are complex, distributed, and often siloed. Anticipating common challenges helps organizations design a smoother rollout and long-term operating model.

Data sprawl across hybrid environments

Modern enterprises store data across multiple clouds, on-prem systems, SaaS applications, and shadow IT tools. A centralized discovery engine with broad connectivity ensures consistent visibility across environments without relying on fragmented inventories.

False positives in sensitive data detection

Automated classification can sometimes flag non-sensitive data as regulated information. Fine-tuning detection rules, training custom classifiers, and incorporating domain expertise significantly improve accuracy over time.

Resistance from data owners

Business units may hesitate to expose datasets due to control or compliance concerns. Clear role definitions, transparent governance policies, and automated stewardship workflows help build trust and accountability.

Performance bottlenecks

Large-scale scanning can affect system performance if not managed properly. Incremental scanning strategies and distributed architecture minimize disruption while maintaining continuous discovery.
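Incremental scanning can be as simple as keeping a watermark from the last run and rescanning only what changed since then, in small batches. The sketch below assumes each asset exposes a last-modified timestamp; `select_for_scan`, `batches`, and the sample assets are hypothetical.

```python
from datetime import datetime, timezone


def select_for_scan(assets, watermark):
    """Return names of assets modified after the previous scan's watermark."""
    return sorted(name for name, modified in assets.items() if modified > watermark)


def batches(items, size):
    """Yield fixed-size chunks so each scan run touches only a few sources at once."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


# Hypothetical last-modified timestamps reported by each source.
last_scan = datetime(2025, 3, 1, tzinfo=timezone.utc)
assets = {
    "crm.customers": datetime(2025, 3, 2, 9, 30, tzinfo=timezone.utc),
    "erp.invoices": datetime(2025, 2, 20, tzinfo=timezone.utc),
    "hr.payroll": datetime(2025, 3, 5, tzinfo=timezone.utc),
    "mkt.leads": datetime(2025, 3, 1, 0, 1, tzinfo=timezone.utc),
}

to_scan = select_for_scan(assets, last_scan)
scan_plan = list(batches(to_scan, size=2))
```

Unchanged assets such as `erp.invoices` are skipped entirely, which is where most of the load reduction comes from.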

Enterprise data discovery use cases

Enterprise data discovery shows its value most clearly in real operational scenarios. From compliance to cloud migration and AI readiness, organizations use discovery to gain visibility, reduce risk, and improve decision-making across their data ecosystem.

1. PII and sensitive data discovery for compliance

Organizations use discovery tools to identify where personal, financial, or health data resides so they can meet regulatory requirements. For example, a financial services firm preparing for a privacy audit might automatically locate customer data scattered across CRM systems, file storage, and analytics platforms.

2. M&A data consolidation

During mergers or acquisitions, companies often inherit unfamiliar data environments. Discovery helps teams quickly map data assets, assess risks, and identify redundant systems. For example, a newly acquired business may bring overlapping customer databases that need rationalization.

3. Cloud migration readiness

Before moving workloads to the cloud, enterprises need clarity on what data exists and how sensitive it is. A retailer migrating legacy systems might use discovery to classify payment data and ensure proper security controls before migration.

4. AI readiness and data preparation

AI initiatives depend on high-quality, well-understood datasets. Discovery helps teams locate reliable training data, validate lineage, and ensure compliance before building machine learning models, reducing delays in AI projects.

5. Risk assessment and audit preparation

Discovery platforms help organizations maintain ongoing audit readiness by continuously classifying data and mapping lineage. For instance, a healthcare provider can quickly generate compliance reports when regulators request evidence of how patient data is managed.

Measuring ROI of enterprise data discovery

Enterprise data discovery ROI is typically measured across risk reduction, analytics acceleration, storage optimization, and audit efficiency. The most meaningful metrics connect discovery outcomes to compliance posture, operational productivity, and infrastructure cost savings.

1. Risk reduction metrics

Organizations often track the percentage of sensitive data identified and classified across systems. Additional indicators include reduced policy violations, fewer unmonitored data sources, and faster remediation when high-risk data exposure is detected.

2. Time-to-insight improvements

Discovery reduces the time required to locate trusted datasets for analytics or AI initiatives. Teams also see less manual documentation effort and faster onboarding of datasets into BI tools, which shortens data preparation cycles.

3. Storage cost optimization

Duplicate or unused datasets frequently surface during discovery initiatives. Archiving stale data and improving lifecycle management can reduce premium storage usage and optimize infrastructure costs.
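Exact duplicates are often found by comparing content fingerprints rather than file names. The sketch below assumes dataset contents are available as bytes and groups them by SHA-256 digest. The dataset names are made up, and near-duplicate detection (for example, the same table with reordered rows) would need a different technique.

```python
import hashlib
from collections import defaultdict
from typing import Dict, List


def find_exact_duplicates(datasets: Dict[str, bytes]) -> List[List[str]]:
    """Group dataset names whose contents are byte-for-byte identical."""
    by_digest = defaultdict(list)
    for name, content in datasets.items():
        digest = hashlib.sha256(content).hexdigest()
        by_digest[digest].append(name)
    # Keep only groups with more than one member, in a stable order.
    groups = [sorted(names) for names in by_digest.values() if len(names) > 1]
    return sorted(groups)


datasets = {
    "lake/raw/customers.csv": b"id,name\n1,Ada\n2,Lin\n",
    "share/backup/customers_copy.csv": b"id,name\n1,Ada\n2,Lin\n",
    "lake/raw/orders.csv": b"id,total\n1,40\n",
}

print(find_exact_duplicates(datasets))
# [['lake/raw/customers.csv', 'share/backup/customers_copy.csv']]
```

For large files, the digest would normally be computed in chunks while streaming rather than by loading the whole object into memory.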

4. Compliance audit time reduction

Automated classification, lineage tracking, and reporting simplify audit preparation. Organizations typically experience faster response times to regulatory requests and reduced manual effort during compliance reviews.

Conclusion

Enterprise data discovery is no longer just about scanning systems and building inventories. In modern enterprises, it becomes the control layer that connects visibility, governance, data quality, and compliance into a single operating model.

When powered by AI-driven automation, discovery does more than identify datasets. It pinpoints critical data based on usage and impact, detects and tracks sensitive information at the column level, builds lineage automatically, and highlights downstream risks before they disrupt dashboards or models. It continuously monitors data quality, surfaces recurring issues, and turns written policies into enforceable, auditable controls across systems.

At the same time, humans remain central. Data owners confirm critical assignments. Stewards validate classifications and edge cases. Governance leaders approve remediation and compliance actions. The result is a practical division of labor: automation handles scale and complexity, while people provide judgment and accountability.

Organizations that treat enterprise data discovery as an always-on capability, rather than a one-time project, move faster with trusted data. They reduce compliance exposure, prevent inconsistent metrics, control data quality debt, and give analytics and AI teams reliable foundations to build on.

In short, enterprise data discovery becomes the engine that keeps governance operational, compliance continuous, and data genuinely usable at scale.

FAQs

1. How do you calculate ROI for enterprise data discovery?

Organizations calculate ROI by comparing implementation and operating costs against measurable outcomes such as reduced compliance exposure, lower storage expenses, improved analyst productivity, and faster audit preparation. Establishing baseline metrics before deployment makes it easier to quantify operational and financial improvements.
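The comparison can be expressed with the standard ROI formula: net benefit divided by total cost. The sketch below uses purely illustrative figures. The category names and amounts are hypothetical, and real inputs would come from the baseline metrics captured before deployment.

```python
from typing import Dict


def discovery_roi(annual_benefits: Dict[str, float], annual_costs: Dict[str, float]) -> float:
    """Return ROI as a ratio: (total benefits - total costs) / total costs."""
    total_benefit = sum(annual_benefits.values())
    total_cost = sum(annual_costs.values())
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return (total_benefit - total_cost) / total_cost


# Hypothetical annual figures, for illustration only.
benefits = {
    "storage_savings": 120_000,
    "analyst_time_saved": 150_000,
    "audit_prep_savings": 30_000,
}
costs = {
    "licensing": 150_000,
    "operations": 50_000,
}

roi = discovery_roi(benefits, costs)
print(f"{roi:.0%}")  # 50%
```

Risk-related benefits, such as reduced compliance exposure, are harder to quantify and are usually estimated separately or reported alongside the ratio instead of being folded into it.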

2. What KPIs should enterprises track for data discovery ROI?

Common KPIs include percentage of classified data assets, reduction in unidentified sensitive data, average time to locate datasets, audit preparation time, storage utilization rates, and number of policy violations detected and resolved.

3. How long does it take to see ROI from enterprise data discovery?

Many organizations begin seeing operational efficiency gains within a few months, especially in compliance reporting and data accessibility. Full ROI depends on integration with governance workflows, automation maturity, and cross-functional adoption.

4. Does enterprise data discovery reduce regulatory fines?

Enterprise data discovery does not eliminate regulatory risk, but it significantly lowers exposure by continuously identifying and monitoring sensitive data. This improves defensibility and reduces the likelihood of compliance violations that can lead to penalties.

5. Can enterprise data discovery lower cloud infrastructure costs?

Yes. By identifying duplicate, unused, or low-value datasets, organizations can enforce retention policies, optimize storage tiers, and reduce unnecessary cloud spending across hybrid and multi-cloud environments.

6. Is ROI higher with automated data discovery compared to manual methods?

Automated data discovery generally delivers higher ROI because it scales across systems, reduces human error, supports continuous monitoring, and integrates directly with enterprise data catalogs and governance platforms.
