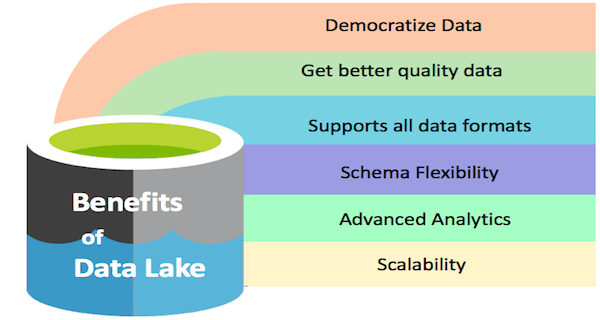

Benefits of a data lake

The key benefit of a data lake is that you can store any and all data in one place incurring a low cost, pulling it as analytical needs arise. But what can an organization gain from all of this data in one place? Let’s see!

Benefits to the business

In a company, we need to make decisions based on data all the time. We need the data of the whole group to get a holistic picture and make sound business decisions.

Democratize data

A data lake can make data available to the whole organization. It is what we call data democratization. Currently, only the top executives have the luxury to ask various departments for reports, get a sense of things from those and then make a decision. But what about the middle management and others? They don’t have the luxury to ask for all kinds of data they need from other departments. Even if they eventually get the data, it will cost time.

We know if we take a lot of time in making decisions, it can render the whole exercise futile. With necessary data readily available, all can take viable decisions at their level. For example, the Janitorial staff of a unit can decide what supplies to buy based on the price of the supplies and their needs. A real-world example of enabling everyone to make their own decisions is LinkedIn. On LinkedIn, everyone decides whom they want to connect with, what content they want to see. More benefits are listed below.

Get better quality data

With the tremendous processing power of a data lake, one can use tools to ensure the data is of good quality.

Technological benefits

Data storage in native format

A data lake eliminates the need for data modeling at the time of ingestion. We can do it at the time of finding and exploring data for analytics. It offers unmatched flexibility to ask any business or domain questions and to glean insights.

Scalability

It offers scalability and is relatively inexpensive compared to a traditional data warehouse when we take scalability into account.

Versatility

A data lake can store multi-structured data from diverse sources. In simple words, a data lake can store logs, XML, multimedia, sensor data, binary, social data, chat, and people data.

Schema fexibility

Traditionally schema necessitates the data to be in a specific format. For OLTP (Application Data), this is great as it validates data before entry. But for analytics, it’s an obstruction as we want to analyze data as is. Traditional data warehouse products are schema-based. But Hadoop data lake allows you to be schema-free, or you can define multiple schemas for the same data. In short, it enables you to decouple schema from data, which is excellent for analytics.

Supports not only SQL but more languages

Traditional data-warehouse technology mostly supports SQL, which is suitable for simple analytics but for advanced use cases, we need more ways to analyze data. A data lake provides various options and language support for analysis. It has Hive/Impala/Hawq which supports SQL but also has features for more advanced needs. For example, to analyze the data in a flow, you can use PIG, or to do machine learning you can use Spark MLlib.

Advanced analytics

Unlike a data warehouse, a data lake excels at utilizing the availability of large quantities of coherent data along with deep learning algorithms. It helps in real-time decision analytics.

|

What you should do now

|

Find your edge now. See how OvalEdge works.

OvalEdge Recognized as a Leader in Data Governance Solutions

.png?width=1081&height=173&name=Forrester%201%20(1).png)

“Reference customers have repeatedly mentioned the great customer service they receive along with the support for their custom requirements, facilitating time to value. OvalEdge fits well with organizations prioritizing business user empowerment within their data governance strategy.”

.png?width=1081&height=241&name=KC%20-%20Logo%201%20(1).png)

“Reference customers have repeatedly mentioned the great customer service they receive along with the support for their custom requirements, facilitating time to value. OvalEdge fits well with organizations prioritizing business user empowerment within their data governance strategy.”

Gartner, Magic Quadrant for Data and Analytics Governance Platforms, January 2025

Gartner does not endorse any vendor, product or service depicted in its research publications, and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.

GARTNER and MAGIC QUADRANT are registered trademarks of Gartner, Inc. and/or its affiliates in the U.S. and internationally and are used herein with permission. All rights reserved.