How to Build and Scale a Trusted AI Governance Framework

Organizations are scaling AI rapidly, but governance often lags behind, creating risks in decision-making and compliance. This blog explains how a trusted AI governance framework brings structure through clear ownership, lifecycle oversight, and traceability. It breaks down key pillars, frameworks, and real challenges that organizations face when operationalizing governance. Readers will gain practical insights to build scalable, responsible AI systems that balance innovation with control.

A data and risk team sat in a review meeting after a recent AI rollout, trying to understand why two systems produced conflicting outputs for similar decisions. Each team had followed its own process, used different datasets, and documented decisions differently. No one had intended to create confusion, but without a shared governance structure, inconsistency became inevitable.

This pattern is becoming more common as AI adoption accelerates across enterprises.

According to McKinsey’s 2025 State of AI report, 71 percent of organizations reported using generative AI in at least one business function.

As usage expands, the need for consistency, accountability, and oversight becomes harder to ignore.

Building a trusted AI governance framework means balancing competing priorities: enabling teams to move quickly while maintaining clear controls, transparency, and trust in outcomes.

This guide outlines how to structure a responsible AI governance model, how leading frameworks align, and how organizations can scale governance without slowing innovation.

Building trust in AI starts with governance

Trust in AI depends on how consistently decisions are governed across the lifecycle, from development to deployment and monitoring. Governance provides the structure that ensures models follow defined standards, ownership, and controls across teams and systems.

Definition and purpose

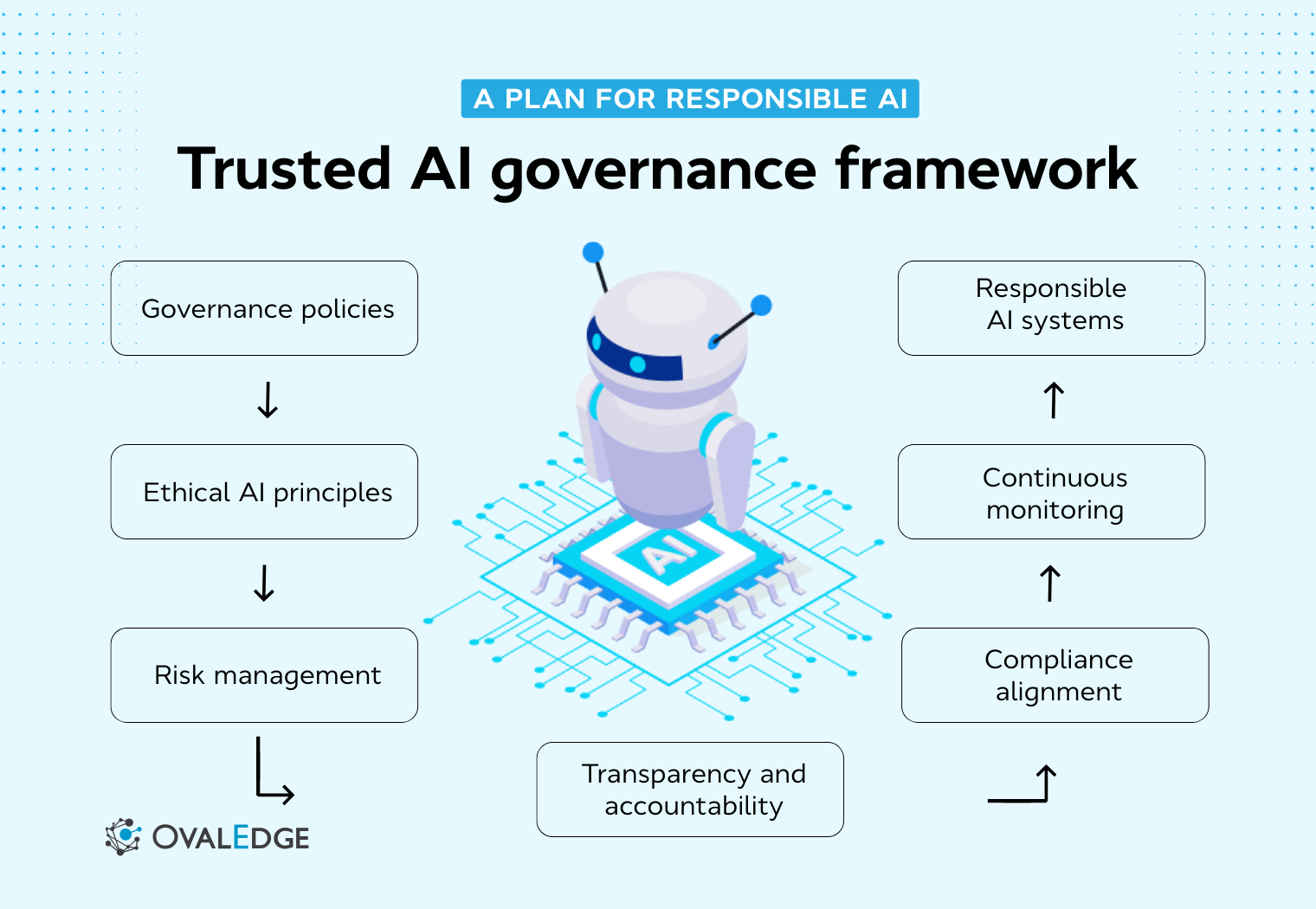

A trusted AI governance framework is the operating model that defines how AI systems are evaluated, approved, monitored, and improved over time. It connects policies, processes, roles, and controls into a unified structure that guides responsible AI adoption at scale.

Its purpose is to reduce risks such as bias, data exposure, and unreliable outputs, while enabling organizations to make consistent, accountable, and transparent decisions.

At a structural level, the framework connects key components:

| Component | Role in governance |
| --- | --- |
| Policies | Define acceptable use and risk thresholds |
| Processes | Standardize workflows across the AI lifecycle |
| Roles and ownership | Establish accountability across teams |
| Controls | Monitor performance, risk, and compliance |
| Documentation | Ensure traceability and audit readiness |

These components only deliver value when they operate as a connected system rather than isolated controls.

Why trust matters in enterprise AI

As AI becomes embedded across business functions, the ability to rely on its outputs becomes critical. When trust is unclear or inconsistent, organizations struggle to fully integrate AI into decision-making.

The impact of trust becomes clear when comparing weak versus strong governance environments:

| When trust is weak | When trust is strong |
| --- | --- |
| Teams hesitate to rely on AI outputs | Teams confidently use AI in decision-making |
| Manual validation increases across workflows | Processes remain efficient and streamlined |
| Decision-making slows due to uncertainty | Decisions become faster and more consistent |
| Confidence in AI systems declines | Adoption increases across business functions |

Trust directly influences how effectively AI can scale across the enterprise.

How governance supports responsible AI

Responsible AI governance ensures that defined principles are consistently applied across systems and teams. It creates a structured approach to managing risk, performance, and accountability throughout the AI lifecycle.

A well-defined governance model helps address critical operational questions:

- Who reviews and approves high-risk AI use cases
- What validation is required before deployment
- How human oversight is integrated into decision-making
- How performance, bias, and anomalies are monitored over time

For example, a credit risk model should pass defined validation thresholds before deployment and trigger alerts if performance drops below acceptable levels. This can be implemented through a lifecycle-based approach in which each stage carries its own checks.

By embedding governance into each stage, AI systems remain reliable, accountable, and aligned with organizational standards as they evolve.
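As a minimal sketch of the credit risk example, the pre-deployment gate and the post-deployment alert can share one set of thresholds. The metric names and values here (`auc`, `recall`, 0.75, 0.60) are illustrative assumptions, not recommended limits; real thresholds would come from an organization's own risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """A minimum acceptable value for one evaluation metric."""
    metric: str
    minimum: float


# Hypothetical thresholds for a credit risk model (assumed values).
CREDIT_RISK_THRESHOLDS = [
    Threshold("auc", 0.75),
    Threshold("recall", 0.60),
]


def failed_checks(metrics: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Return the names of metrics that are missing or below their minimum."""
    failures = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None or value < t.minimum:
            failures.append(t.metric)
    return failures


def approve_for_deployment(metrics: dict[str, float], thresholds: list[Threshold]) -> bool:
    """Pre-deployment gate: approve only if every threshold is met."""
    return not failed_checks(metrics, thresholds)


def monitoring_alerts(metrics: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Post-deployment check: one alert message per breached threshold."""
    return [
        f"ALERT: {name} below acceptable level"
        for name in failed_checks(metrics, thresholds)
    ]


# Validation metrics pass the gate; later production metrics trigger an alert.
validation = {"auc": 0.81, "recall": 0.66}
production = {"auc": 0.72, "recall": 0.64}
print(approve_for_deployment(validation, CREDIT_RISK_THRESHOLDS))  # True
print(monitoring_alerts(production, CREDIT_RISK_THRESHOLDS))
```

Using the same threshold definitions at both stages keeps the deployment decision and ongoing monitoring tied to one documented standard.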

Related reading: OvalEdge’s guide, Trusted AI: Why AI Governance is a Business-Critical Concern, explains how governance frameworks help organizations maintain control, reduce risk, and ensure responsible AI adoption at scale.

The growing need for trusted AI governance in modern organizations

AI is no longer confined to controlled pilots or isolated experiments. It now operates across multiple business functions, including search, customer interactions, fraud detection, underwriting, and internal productivity. As this adoption expands, we are no longer managing a single model. We are managing an interconnected ecosystem of use cases, data pipelines, tools, and regulatory expectations.

This shift introduces a new layer of complexity.

Governance is no longer optional. It is the foundation for maintaining consistency, accountability, and control as AI scales across the enterprise.

Risks of ungoverned AI

When governance is not structured or consistently applied, risks emerge quickly and often across multiple layers of the AI lifecycle. These risks are not always obvious at the start, but they compound as systems scale.

We typically see the following challenges:

- Inconsistent or inaccurate outputs due to poor data quality or a lack of validation
- Bias in model predictions that goes undetected without proper monitoring
- Limited explainability that makes it difficult to justify decisions
- Data privacy and consent issues in training and inference stages
- Lack of visibility into where prompts, outputs, and model artifacts are stored

These risks extend beyond model performance. They affect how data flows through systems, how decisions are made, and how organizations respond to issues when they arise.

As regulatory expectations evolve, governance must also align internal controls with external requirements. This includes mapping AI use cases to risk categories, maintaining audit-ready documentation, and ensuring compliance across jurisdictions.

Building accountability and transparency

Accountability in AI governance goes beyond assigning ownership. It requires clear visibility into how decisions are made and who is responsible at each stage.

To establish accountability, we need to ensure that every AI use case can answer key questions:

- Who approved the use case and under what conditions
- What data was used, and how it was validated
- What testing and evaluation steps were performed
- What risks were identified and accepted
- How incidents are tracked and resolved
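One way to make these questions checkable is to store the answers in a structured record per use case. The sketch below is an assumption about how such a record might look; the field names and the example values are hypothetical, and an empty field is simply treated as an unanswered question.

```python
from dataclasses import dataclass, field


@dataclass
class UseCaseRecord:
    """Accountability answers for one AI use case (hypothetical schema)."""
    name: str
    approved_by: str = ""
    approval_conditions: str = ""
    data_sources: list[str] = field(default_factory=list)
    data_validation: str = ""
    tests_performed: list[str] = field(default_factory=list)
    accepted_risks: list[str] = field(default_factory=list)
    incident_tracker: str = ""  # where incidents are logged and resolved


def unanswered(record: UseCaseRecord) -> list[str]:
    """Return the names of fields that are still empty, in declaration order."""
    return [name for name, value in vars(record).items() if not value]


record = UseCaseRecord(
    name="credit-risk-scoring",
    approved_by="Model Risk Committee",
    approval_conditions="Revalidate quarterly",
    data_sources=["loan_applications"],
)
print(unanswered(record))
# ['data_validation', 'tests_performed', 'accepted_risks', 'incident_tracker']
```

A review workflow could block approval until `unanswered` returns an empty list, which turns the questions into an enforceable checklist.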

Transparency complements accountability but serves different stakeholders.

- Regulators require traceability, documentation, and audit trails
- Business users need clarity on how outputs are generated
- Internal teams need visibility into lineage, dependencies, and performance

This level of transparency requires strong metadata management, lineage tracking, and documentation practices.

Platforms like OvalEdge support this by enabling organizations to trace data flows across systems, understand how inputs influence outputs, and maintain consistent visibility across the data ecosystem. For a deeper understanding, see our blog on Data Lineage Techniques and How to Implement Them (2026 Guide), where we break down how data flows, transformations, and dependencies contribute to effective governance.

The role of compliance teams

Compliance teams have become central to AI governance as organizations scale their AI initiatives. Their role is no longer limited to reviewing policies. They actively shape how governance operates in practice.

They contribute by:

- Classifying AI use cases based on risk and impact
- Interpreting regulatory requirements across regions
- Defining documentation and audit standards
- Establishing escalation and approval workflows

Effective compliance teams work closely with data, engineering, and business teams to ensure governance is both practical and enforceable.

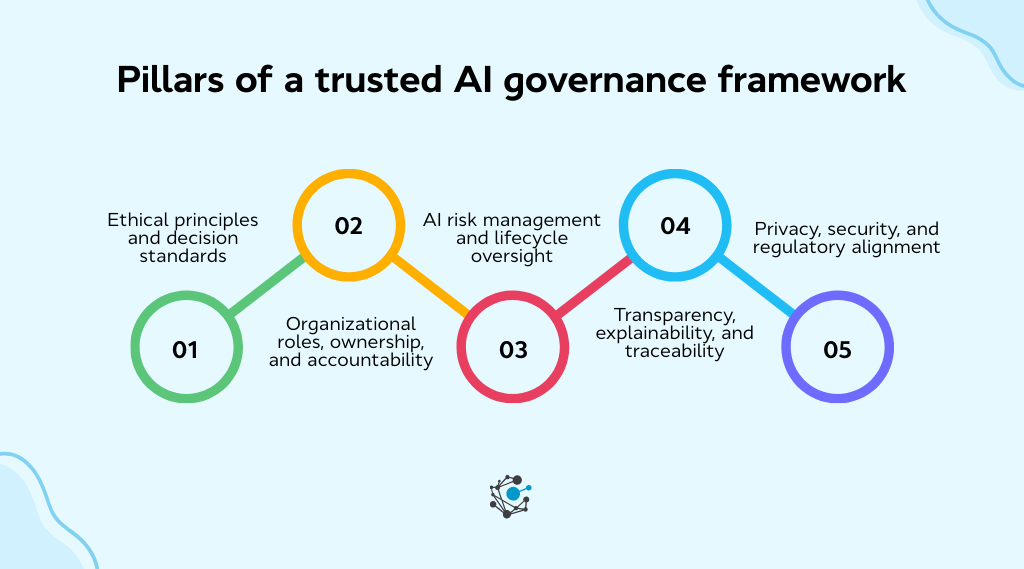

Pillars of a trusted AI governance framework

A trusted AI governance framework relies on a set of clearly defined pillars that guide how AI systems are designed, deployed, and managed. These pillars connect principles with execution, ensuring governance remains practical, scalable, and aligned with enterprise needs.

Ethical principles and decision standards

Every governance framework begins with clearly defined ethical boundaries that guide decision-making. These standards help teams determine what is acceptable, what requires escalation, and where risks must be controlled before deployment.

When these principles are documented and consistently applied, they reduce ambiguity across teams. This ensures that high-impact use cases follow the same criteria for fairness, accountability, and responsible use, regardless of where they are developed.

Organizational roles, ownership, and accountability

Governance becomes ineffective when ownership is unclear. A structured model defines who is responsible for decisions, validations, and ongoing monitoring across the AI lifecycle.

A clear role definition ensures that accountability remains visible. By assigning ownership across business, technical, and risk functions, organizations avoid gaps where decisions are made without proper oversight or responsibility.

AI risk management and lifecycle oversight

AI governance must extend across the entire lifecycle, not just the point of deployment. Risks can emerge at any stage, from data selection to model development, validation, and ongoing monitoring.

A lifecycle-based approach allows organizations to continuously assess and manage risks. It ensures governance evolves alongside systems, enabling teams to respond to changes, detect issues early, and maintain consistent oversight as AI scales.

Transparency, explainability, and traceability

Transparency ensures visibility into how AI systems operate and where they are applied. Explainability helps stakeholders understand how outputs are generated, while traceability records the data, decisions, and changes across the lifecycle.

Maintaining this level of traceability requires consistent visibility across data, models, and workflows. This is where governance platforms play a critical role by enabling lineage tracking, metadata visibility, and audit-ready documentation across enterprise systems.

Also read: OvalEdge’s perspective on building scalable governance models in The Only Data Governance Framework Template You’ll Ever Need, where we explain how organizations define controls and operationalize governance across enterprise environments.

Privacy, security, and regulatory alignment

AI governance must align with broader privacy and security requirements to ensure data is protected and risks are minimized. This includes controlling access, managing sensitive data, and aligning with regulatory expectations across regions and industries.

When privacy and security are embedded into governance from the start, organizations can scale AI confidently. This ensures compliance is proactive, integrated, and consistently enforced across evolving AI systems.

Trusted AI governance frameworks

Most organizations do not build governance from scratch. They combine established frameworks, internal policies, and operational controls into a unified model. This approach helps create a trusted AI governance framework that is both practical to implement and scalable across enterprise environments.

1. NIST AI Risk Management Framework

The NIST AI Risk Management Framework provides a structured, risk-based approach to managing AI systems across their lifecycle. It helps organizations connect governance with real-world implementation and continuous monitoring.

Core functions:

- Govern: Establish policies, roles, and oversight mechanisms
- Map: Identify AI use cases, context, and potential risks
- Measure: Assess performance, bias, and reliability
- Manage: Monitor systems and mitigate risks over time

This framework is widely used because it balances flexibility with clear operational guidance.

2. Global AI governance standards

Global standards help organizations align AI governance practices across regions while maintaining consistency in core principles. They provide a foundation for responsible AI that can adapt to different regulatory environments.

Key elements across global standards:

- Ethical principles such as fairness, accountability, and transparency
- Human oversight and responsible decision-making
- Risk classification based on use case impact
- Compliance alignment across jurisdictions

These standards help organizations distinguish between universal governance principles and region-specific regulatory requirements.

3. Enterprise governance models

Enterprise governance models translate frameworks into actionable structures within the organization. They define how governance operates across teams, systems, and workflows.

Typical governance layers:

- Policy layer: Defines principles, acceptable use, and risk thresholds
- Operating layer: Establishes workflows, ownership, and documentation
- Monitoring layer: Tracks performance, incidents, and compliance over time

This layered approach ensures governance is embedded into daily operations rather than treated as a one-time activity.

4. Combining multiple frameworks

In practice, organizations rarely rely on a single framework. Instead, they combine elements from different frameworks to create a model that fits their needs.

How enterprises typically combine frameworks:

- Use risk-based frameworks to manage AI lifecycle risks
- Apply global principles to ensure ethical and responsible use
- Align with regulatory requirements for compliance readiness
- Integrate internal policies to reflect business-specific needs

This combined approach enables organizations to build a flexible yet structured AI trust framework that supports both governance and innovation.

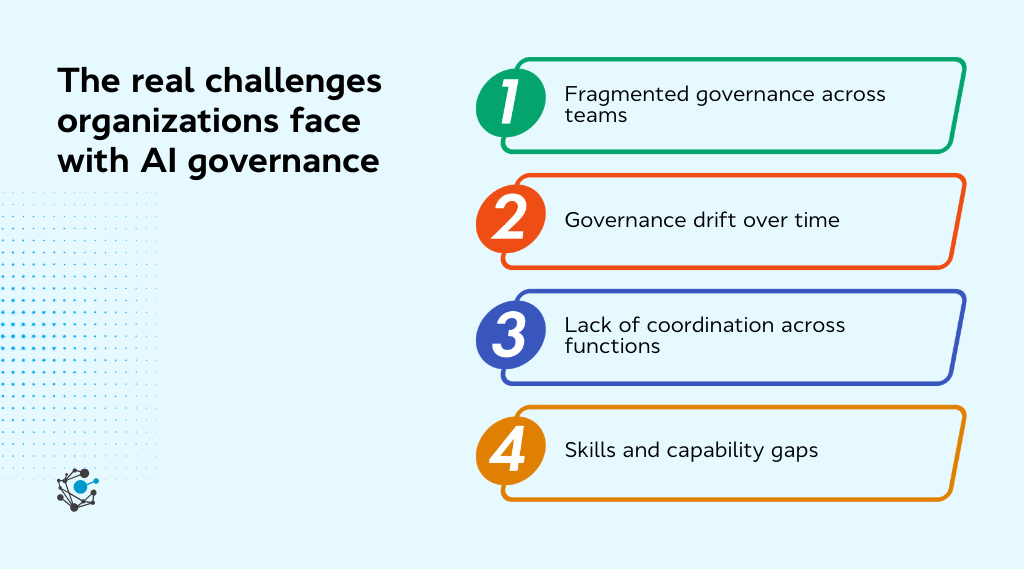

The real challenges organizations face with AI governance

The challenge with AI governance is not defining frameworks. It is ensuring they are consistently applied across teams, systems, and evolving use cases without slowing down execution.

As organizations scale AI, gaps begin to appear between defined governance and how it actually operates in practice.

- Fragmented governance across teams: AI initiatives often begin in silos, where different teams follow their own processes, documentation standards, and approval workflows. This leads to inconsistent governance practices, limited visibility across use cases, and difficulty enforcing a unified framework at the enterprise level.
- Governance drift over time: Governance is typically strongest at the point of deployment but weakens as systems evolve. Changes in models, data sources, and prompts are not always revalidated, creating a gap between defined policies and actual implementation. Without continuous monitoring, governance becomes outdated and ineffective.
- Lack of coordination across functions: AI governance requires alignment across compliance, legal, data, engineering, and business teams. Each function often operates with different priorities and terminology, which slows decision-making and makes it difficult to translate governance policies into consistent, operational actions.
- Skills and capability gaps: Effective governance requires a combination of technical, regulatory, and operational expertise. Many organizations lack skills in areas such as policy interpretation, model validation, data controls, and change management.

Addressing these challenges requires more than defined policies. Organizations need a governance model that is continuously enforced, clearly owned, and supported by systems that provide visibility, alignment, and control across the entire AI lifecycle.
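Governance drift in particular lends itself to a simple automated check. The sketch below, under the assumption that a deployment's governed inputs (model version, data sources, prompt template) can be captured as a dictionary, hashes that configuration at validation time and flags any later change for revalidation. The field names and values are hypothetical.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 hash of a deployment's governed inputs.

    Keys are sorted so the hash does not depend on dictionary ordering.
    List order still matters, so data sources should be stored sorted.
    """
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_revalidation(validated_fingerprint: str, current_config: dict) -> bool:
    """True if anything has changed since the last approved validation."""
    return fingerprint(current_config) != validated_fingerprint


# Configuration recorded when the use case was last validated (assumed values).
validated = {
    "model_version": "1.4.0",
    "data_sources": ["bureau_scores", "loan_applications"],
    "prompt_template": "Summarize the applicant's risk factors.",
}
approved = fingerprint(validated)

# A later prompt edit changes the fingerprint and should trigger a review.
current = dict(validated, prompt_template="List the applicant's risk factors.")
print(needs_revalidation(approved, validated))  # False
print(needs_revalidation(approved, current))    # True
```

Storing the approved fingerprint alongside the approval record gives reviewers a concrete signal that a deployed system no longer matches what was validated.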

Scaling trusted AI governance with modern governance platforms

As organizations scale beyond a few AI use cases, manual processes and disconnected approvals become difficult to manage. Governance platforms help centralize use-case tracking, policies, approvals, monitoring, and documentation, making governance more consistent and repeatable across the enterprise.

Unified oversight across AI and data

AI governance is most effective when it is tightly integrated with data governance. Many AI risks originate in upstream data, including data quality issues, weak access controls, undocumented transformations, and inconsistent usage across systems.

A unified approach connects these elements, allowing teams to view AI systems within the full data lifecycle rather than as isolated models. Capabilities such as data cataloging and metadata management help create this unified view by linking datasets, business context, and governance policies across systems.

Traceability for audits and compliance

Effective governance depends on the ability to trace decisions and data across the AI lifecycle. Organizations need clear visibility into how data flows, how models are built, and how outputs are generated.

Data lineage capabilities play a key role here by enabling teams to track data movement, transformations, and dependencies across pipelines and systems. This ensures audit readiness, strengthens accountability, and provides a clear record for compliance and internal reviews.
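To illustrate what tracing dependencies means in practice, lineage can be modeled as a graph and walked backward from any output. This is a simplified sketch with a hypothetical lineage map, not a description of how any particular platform stores lineage.

```python
from collections import deque

# Hypothetical lineage: each asset maps to the assets it is directly derived from.
LINEAGE = {
    "credit_decision": ["credit_risk_model"],
    "credit_risk_model": ["training_features"],
    "training_features": ["loan_applications", "bureau_scores"],
}


def upstream(asset: str, lineage: dict[str, list[str]]) -> set[str]:
    """Return every asset that the given asset depends on, directly or indirectly."""
    seen: set[str] = set()
    queue = deque([asset])
    while queue:
        current = queue.popleft()
        for parent in lineage.get(current, []):
            if parent not in seen:  # also guards against cycles
                seen.add(parent)
                queue.append(parent)
    return seen


# Everything an auditor would need to review for one decision output.
print(sorted(upstream("credit_decision", LINEAGE)))
# ['bureau_scores', 'credit_risk_model', 'loan_applications', 'training_features']
```

The same traversal run in the opposite direction would answer the impact question: which models and decisions are affected when a source dataset changes.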

Supporting enterprise AI governance

Scaling AI governance requires connecting multiple governance capabilities into a single, operational system. This includes metadata management, lineage tracking, data quality monitoring, access controls, and policy enforcement.

Platforms like OvalEdge enable this by bringing together key governance capabilities into a unified system.

By integrating these capabilities, organizations can move from fragmented governance processes to a unified model that supports visibility, control, and scalability across enterprise AI environments.

How to measure AI governance effectiveness?

Defining a governance framework is only the first step. The real test is whether governance is consistently enforced and delivers reliable outcomes across AI systems.

Effective measurement focuses on observable signals that show how governance operates in practice.

Organizations can assess this across four key areas:

- Policy adherence: Track how consistently AI use cases follow required approval, validation, and documentation workflows before deployment. Gaps here often indicate breakdowns in governance enforcement.
- Model performance: Monitor whether models remain within defined thresholds for accuracy, stability, and drift, and how quickly issues are detected and resolved.
- Risk management: Evaluate whether high-risk use cases have clear controls in place and how effectively incidents, exceptions, and escalations are handled.
- Traceability: Ensure that data, decisions, and model changes are fully documented and can be reconstructed for audits, reviews, or investigations.

Regular monitoring of these indicators helps identify weak points, strengthen controls, and continuously improve governance as AI systems scale.
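Two of these indicators can be computed directly from records most teams already keep. The sketch below assumes a simple list-of-dictionaries format for use cases and incidents; the field names and sample data are hypothetical.

```python
from datetime import datetime, timedelta


def policy_adherence_rate(use_cases: list[dict]) -> float:
    """Share of use cases that completed approval, validation, and documentation."""
    if not use_cases:
        return 0.0
    compliant = sum(
        1 for uc in use_cases
        if uc["approved"] and uc["validated"] and uc["documented"]
    )
    return compliant / len(use_cases)


def mean_time_to_resolve(incidents: list[dict]) -> timedelta:
    """Average time between detecting an incident and resolving it."""
    if not incidents:
        return timedelta()
    durations = [i["resolved_at"] - i["detected_at"] for i in incidents]
    return sum(durations, timedelta()) / len(durations)


use_cases = [
    {"approved": True, "validated": True, "documented": True},
    {"approved": True, "validated": True, "documented": True},
    {"approved": True, "validated": False, "documented": True},
    {"approved": True, "validated": True, "documented": True},
]
incidents = [
    {"detected_at": datetime(2025, 1, 1, 9), "resolved_at": datetime(2025, 1, 1, 11)},
    {"detected_at": datetime(2025, 1, 2, 10), "resolved_at": datetime(2025, 1, 2, 14)},
]
print(policy_adherence_rate(use_cases))   # 0.75
print(mean_time_to_resolve(incidents))    # 3:00:00
```

Tracking these values over time, rather than as one-off snapshots, shows whether governance enforcement is improving or eroding as the number of use cases grows.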

Conclusion

A trusted AI governance framework turns AI initiatives into controlled, scalable systems that organizations can rely on. The most effective models combine clear principles, defined ownership, lifecycle risk management, and traceability, while staying closely connected to data and real workflows.

The next step is to assess where gaps exist. Which AI use cases carry the highest risk? Where does ownership break down? Do we have visibility into data, models, and decisions across the lifecycle? These answers define where governance needs to evolve.

OvalEdge helps operationalize this by unifying metadata, lineage, data quality, and governance controls into a single platform. askEdgi extends this by enabling users to interact with enterprise data through natural language while enforcing governance policies, maintaining traceability, and ensuring secure, compliant access to insights.

To move forward, book a demo with OvalEdge and see how to scale trusted AI governance across your enterprise.

FAQs

1. How does a trusted AI governance framework differ from AI model governance?

A trusted AI governance framework oversees the entire AI ecosystem, including policies, risk controls, data oversight, and accountability structures. AI model governance focuses specifically on managing model development, validation, monitoring, and performance within that broader governance framework.

2. Who is responsible for implementing AI governance in an organization?

AI governance typically involves multiple stakeholders. Leadership sets governance priorities, AI and data teams manage technical controls, risk teams assess potential impacts, and compliance teams ensure alignment with regulatory requirements and internal policies.

3. What industries benefit most from trusted AI governance frameworks?

Industries that rely on automated decision-making benefit significantly. Financial services, healthcare, insurance, retail, and public sector organizations use governance frameworks to manage risk, maintain transparency, and ensure ethical use of AI systems.

4. How can organizations measure the maturity of their AI governance program?

Organizations assess AI governance maturity by evaluating policy coverage, risk management practices, model monitoring processes, documentation standards, and regulatory readiness. Maturity models help identify gaps and guide improvements in governance structures and oversight mechanisms.

5. How does data governance support trusted AI governance?

Data governance provides the foundation for trustworthy AI by ensuring data quality, lineage, and access control. Reliable data management helps organizations maintain transparency in AI models and supports consistent monitoring of model performance and outcomes.

6. What role does documentation play in AI governance?

Documentation helps organizations maintain transparency and accountability in AI systems. It records model design decisions, training data sources, validation processes, and risk assessments, which support internal reviews, regulatory audits, and continuous improvement of AI governance practices.
