PII Data Discovery: How to Find, Classify, and Protect Sensitive Personal Data

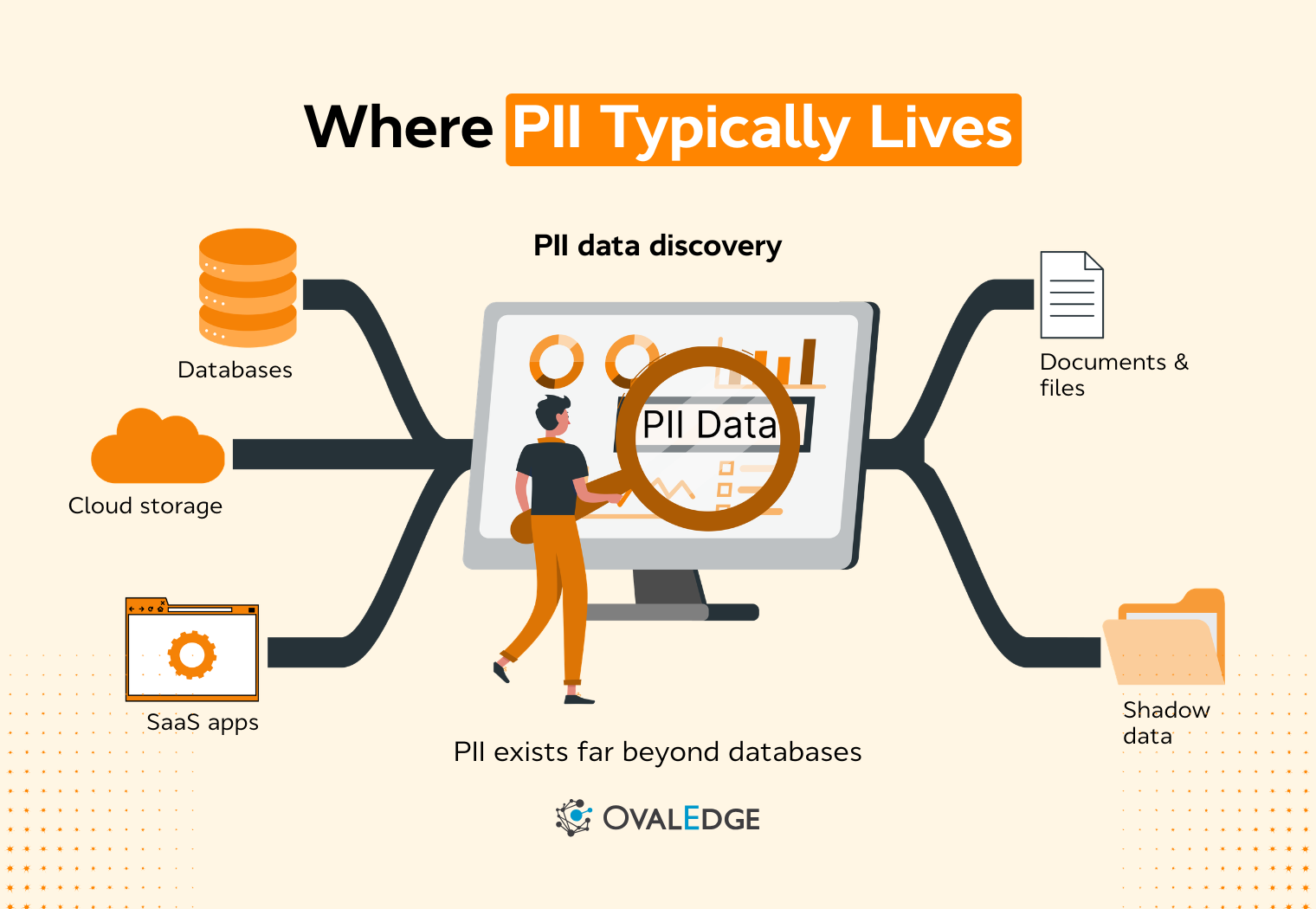

Personal data rarely stays where it was first collected. It spreads across cloud warehouses, SaaS apps, logs, and shared files, quietly increasing risk. PII data discovery brings clarity by continuously identifying, classifying, and tracking sensitive information across structured and unstructured environments. With accurate detection and governance integration, organizations reduce blind spots, strengthen compliance, and respond to audits and incidents with confidence.

Personal data no longer stays confined to a single database. It moves across cloud warehouses, SaaS platforms, analytics tools, shared drives, and collaboration systems, often without clear oversight. As environments grow more distributed, sensitive information spreads faster than governance processes can track it.

Security incidents, regulatory audits, and data subject requests all expose the same weakness: organizations struggle to answer a basic question about where personal data actually lives. Without structured visibility, response efforts slow down, compliance risks increase, and controls are applied inconsistently.

PII data discovery addresses this gap by turning scattered data landscapes into searchable, classifiable, and defensible inventories. It shifts discovery from a periodic exercise into a continuous capability that supports security, privacy, and governance together.

In this blog, we will discuss how PII data discovery works, why it matters for security and compliance teams, the types of personal data organizations must identify, and what features define an effective discovery platform.

How does PII data discovery work?

Modern PII data discovery approaches combine pattern-based detection with machine learning and context rules so teams can find sensitive data in both structured systems like databases and unstructured content like files and messages.

1. Automatically scanning across real enterprise data sprawl

Most organizations do not have one “data warehouse” anymore. They have a mix of cloud warehouses, object storage, SaaS applications, collaboration tools, logs, and analytics workspaces.

A spreadsheet can list systems, but it cannot keep up with new tables, new columns, new exports, and new files created every day.

A practical way to think about scanning is by source type:

- Structured sources like relational databases, data warehouses, and spreadsheets: discovery reads schemas, column names, and samples of values to determine whether a field is likely to contain PII.

- Unstructured sources like documents, PDFs, emails, chat exports, tickets, and logs: discovery analyzes text content and sometimes attachments, which is where sensitive data often hides in plain sight.

Good sensitive data discovery programs prioritize breadth first. They start by connecting the biggest risk surfaces, such as cloud storage and shared collaboration systems, then expand coverage to data platforms and long-tail repositories.
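For structured sources, the "read the schema, then sample values" step can be sketched roughly as follows. This is a minimal illustration using Python's built-in `sqlite3` module, not how any particular discovery product works. The function name `sample_columns` and the `customers` table in the usage note are hypothetical.

```python
import sqlite3


def sample_columns(conn: sqlite3.Connection, table: str, limit: int = 5) -> dict:
    """Return {column_name: [sample values]} for one table.

    Assumption: `table` comes from a trusted catalog, because it is
    interpolated into the SQL text rather than bound as a parameter.
    """
    # PRAGMA table_info yields one row per column; index 1 is the column name.
    columns = [row[1] for row in conn.execute(f'PRAGMA table_info("{table}")')]
    samples = {}
    for column in columns:
        rows = conn.execute(
            f'SELECT "{column}" FROM "{table}" '
            f'WHERE "{column}" IS NOT NULL LIMIT ?',
            (limit,),
        ).fetchall()
        samples[column] = [row[0] for row in rows]
    return samples
```

Given a hypothetical `customers` table with `id` and `email` columns, the function returns a dictionary keyed by column name whose values are small samples that a detector can then inspect. Sampling keeps scans cheap, at the cost of possibly missing PII that appears only in rare rows.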

2. Identifying PII using multiple detection methods

The simplest version of PII discovery software uses pattern matching, for example detecting a credit card number format or a national ID format. It is useful, but it is not sufficient on its own.

Real environments contain identifiers that look like PII but are not, and they contain PII that does not match obvious patterns.

Top approaches layer several techniques:

- Pattern matching for known formats such as email addresses, payment cards, national IDs

- Keyword and dictionary matching for business-specific labels such as “patient_id” or “tax_id”

- Metadata and location signals such as file type, folder, ownership, or database schema context

- Machine learning classifiers that use surrounding text and field context to decide what the data actually represents

This layered model matters because many high-risk cases are subtle. A column called “id” can be harmless in one system and highly sensitive in another.

A “number” field might store phone numbers, device identifiers, or telemetry codes. The detection method needs to understand context, not just format.
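The first two layers described above, pattern matching and keyword matching, can be sketched in a few lines. This is a simplified illustration under stated assumptions: the regular expressions and the `SENSITIVE_NAME_HINTS` list are hypothetical and far less thorough than production rules. The Luhn checksum is the standard check for payment card numbers, and it shows how validation can reject digit strings that only look like cards.

```python
import re

# Hypothetical patterns and name hints, for illustration only.
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
SENSITIVE_NAME_HINTS = ("patient_id", "tax_id", "ssn", "email")


def luhn_valid(number: str) -> bool:
    """Return True if the digits pass the Luhn checksum used by payment cards."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    if not 13 <= len(digits) <= 19:
        return False
    total = 0
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def detect_signals(column_name: str, sample_values: list[str]) -> set[str]:
    """Collect the detection signals that fire for one column."""
    signals = set()
    if any(hint in column_name.lower() for hint in SENSITIVE_NAME_HINTS):
        signals.add("name_hint")
    for value in sample_values:
        if EMAIL_RE.search(value):
            signals.add("email_pattern")
        for match in CARD_RE.finditer(value):
            if luhn_valid(match.group()):
                signals.add("card_pattern")
    return signals
```

A 16-digit order reference that fails the checksum produces no card signal, which is one small example of format alone not being enough. Metadata signals and ML classifiers would sit on top of a layer like this one.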

3. Applying context to reduce false positives and make results usable

False positives are one of the biggest reasons PII data discovery programs stall. When teams get flooded with detections that require manual review, they stop trusting the system.

Strong PII data discovery platforms address this in two ways:

- Confidence scoring to express how certain the system is that something is PII, so teams can prioritize review and remediation

- Context-aware validation that looks at neighboring fields, sample values, and usage signals, not just a single match

Context also matters because sensitivity is not only about the data element itself. Discovery should also evaluate the context of use and how combinations of fields can increase sensitivity, for example when seemingly harmless attributes become identifying once combined.
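One simple way to picture confidence scoring is as a weighted combination of independent signals, with thresholds that decide what gets reviewed. The sketch below is an assumption-laden illustration: the signal names, the point values in `SIGNAL_POINTS`, and the thresholds are all hypothetical, and real platforms may compute confidence very differently.

```python
# Hypothetical point values (out of 100); a real system would calibrate
# these against findings that reviewers have confirmed or rejected.
SIGNAL_POINTS = {
    "value_pattern": 50,     # sample values match a known PII format
    "name_hint": 30,         # column or field name suggests PII
    "neighbor_context": 20,  # adjacent fields look like personal data
}


def confidence(signals: set[str]) -> int:
    """Sum the points of the signals that fired, capped at 100."""
    return min(sum(SIGNAL_POINTS.get(signal, 0) for signal in signals), 100)


def triage(score: int, auto_threshold: int = 80, review_threshold: int = 40) -> str:
    """Route a finding based on its confidence score."""
    if score >= auto_threshold:
        return "auto_classify"
    if score >= review_threshold:
        return "manual_review"
    return "ignore"
```

With these example values, a column whose name and sample values both look like PII is classified automatically, while a name hint alone falls below the review threshold. The point of the thresholds is to keep the manual review queue small enough that teams keep trusting it.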

4. Classifying discovered data into categories teams can govern

After identification, PII data discovery becomes operational only when results are classified. Classification answers questions like:

- What type of PII is this, for example, contact data, government ID, financial, or health

- How sensitive is it based on policy, regulation, and context

- Where exactly does it live, and who owns the system or dataset

This is where PII data discovery starts to support governance outcomes. Maintaining an inventory of systems and applications that process PII is valuable because it supports data mapping, privacy notices, and limiting processing when it is not needed.

5. Monitoring changes continuously

One-time scans are rarely enough. Data moves. Tables change. Teams add new fields. Files get exported and shared. This is why modern PII data discovery is described as continuous rather than periodic, especially in cloud environments.

Continuous discovery focuses on change detection:

- New datasets or new buckets created

- New columns added to existing tables

- New files uploaded to shared repositories

- New integrations that replicate data into additional systems

This matters for both security and compliance. Security teams need current visibility so they can reduce exposure. Compliance teams need defensible visibility so they can show they understand where personal data resides when asked.
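At its simplest, change detection for structured sources is a comparison between two schema snapshots. The sketch below assumes each snapshot is a dictionary mapping table names to sets of column names. That representation and the function name `new_columns` are hypothetical choices for illustration.

```python
def new_columns(
    previous: dict[str, set[str]], current: dict[str, set[str]]
) -> dict[str, set[str]]:
    """Return columns present in the current snapshot but not the previous one.

    Tables that did not exist before are reported with all of their columns.
    """
    added = {}
    for table, columns in current.items():
        fresh = columns - previous.get(table, set())
        if fresh:
            added[table] = fresh
    return added
```

Only the columns returned here need to be sampled and classified again, which is what makes continuous scanning affordable compared with rescanning everything on each run.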

6. Connecting discovery to compliance accountability without turning it into legal work

Regulations like GDPR, CCPA, and HIPAA increase the cost of not knowing where personal data is. The key practical point is not legal interpretation. It is auditability. If you cannot demonstrate that you know where PII lives, it becomes harder to justify that controls are appropriate or consistently applied.

Well-designed PII discovery software helps by producing artifacts teams can use, such as inventories and reports, which align with the broader expectation of maintaining an understanding of where PII is processed.

Why PII data discovery is critical for security and compliance teams

Security and compliance leaders consistently face the same structural problem: sensitive data spreads faster than oversight mechanisms. As organizations adopt cloud platforms, SaaS applications, and analytics pipelines, personal data moves across systems, environments, and teams.

Without reliable PII data discovery, that movement happens largely unchecked. PII data discovery has evolved from a helpful inventory exercise into a strategic control that underpins data security, privacy compliance, and risk management.

1. Blind spots increase breach impact

When organizations do not know where PII resides, incident response becomes slower and more expensive. Breaches are rarely isolated, one-off events.

According to a 2022 Forrester Report on Data Breach Benchmarks, organizations experienced an average of four breaches over a single time period in 2022, underscoring how recurrent data exposure has become.

In real-world breach scenarios, one of the first questions regulators and legal teams ask is: What data was exposed? If there is no accurate inventory of where personal data lives, teams must manually investigate systems, logs, backups, and third-party integrations. That delays notification decisions and increases uncertainty in regulatory reporting.

Unknown PII also expands the scope of impact.

For example, if sensitive data has been copied into analytics sandboxes, shared drives, or test environments without governance oversight, an incident can affect far more records than expected.

PII data discovery reduces this uncertainty by continuously identifying where sensitive data is stored, replicated, and accessed.

2. Regulatory expectations now require demonstrable awareness

Regulatory frameworks increasingly focus on accountability and demonstrable governance. GDPR, CCPA, HIPAA, and newer state-level privacy laws do not simply require breach response. They require organizations to understand and manage personal data throughout its lifecycle.

Regulators expect organizations to maintain data inventories and mapping records that reflect where personal data is processed. Data mapping is not theoretical. It depends on reliable sensitive data discovery.

For example, fulfilling a data subject access request requires locating all personal data tied to an individual across multiple systems. Without automated PII data discovery, teams rely on manual outreach to system owners, which introduces inconsistency and delays.

In complex environments, this can lead to incomplete disclosures, which increases compliance risk.

Similarly, privacy impact assessments require understanding what categories of personal data are collected, how they are used, and where they are stored. Automated PII detection supports this by continuously classifying data against defined categories such as contact information, financial identifiers, or health-related attributes.

Compliance teams also need defensible evidence. During audits, it is not enough to say that policies exist. Teams must demonstrate that systems are scanned, that personal data is classified, and that controls are applied consistently.

PII data discovery platforms provide logs, reports, and inventory records that support this level of accountability.

3. Operational friction during audits and investigations

Beyond breach response and regulatory mandates, day-to-day operations suffer without structured PII data discovery.

Common pain points include:

- Difficulty identifying data owners for sensitive datasets

- Delays in answering internal risk assessments

- Manual reviews of shared drives and data exports

- Inconsistent tagging of sensitive fields across systems

When PII detection is automated and integrated into data governance workflows, these tasks become repeatable processes instead of ad hoc investigations.

Security teams can quickly identify which systems store social security numbers or health data. Governance teams can track classification coverage across domains. Compliance teams can generate reports aligned with regulatory categories.

4. Manual discovery approaches do not scale in cloud environments

In modern data ecosystems, new datasets are created daily. Data engineers spin up temporary environments. Business users export reports. AI and analytics teams generate derived features that may still contain personal attributes.

Manual inventory processes cannot detect these changes in real time. By the time an annual audit occurs, the data landscape may look completely different.

According to the IBM Cost of a Data Breach Report 2024, over 40% of data breaches now involve data spread across multiple public cloud and on-prem systems, highlighting how exposure often spans distributed environments rather than a single repository.

Automated PII data discovery addresses this scale challenge by continuously scanning for new fields, schema changes, and new repositories. This ensures that visibility evolves with the data environment.

5. PII data discovery as a foundation for broader security controls

PII data discovery directly supports other security controls:

- Data loss prevention policies rely on accurate classification of sensitive data.

- Access governance programs need to know which datasets contain high-risk PII to enforce stricter permissions.

- Encryption and masking strategies depend on identifying which fields require protection.

- Insider threat monitoring must prioritize repositories that contain regulated personal data.

Without reliable PII detection, these controls are applied inconsistently or over-applied, which can disrupt business processes.

By contrast, when organizations implement structured, automated PII data discovery, they gain a risk-aware foundation.

Types of PII data organizations need to discover

Not all personal data carries the same risk profile, and not all PII is obvious at first glance.

Effective PII data discovery must account for multiple categories of identifiable information, including data that is clearly identifying and data that becomes identifying when combined with other attributes.

Organizations that focus only on obvious identifiers often miss more subtle exposures. Comprehensive sensitive data discovery requires understanding how personal data appears across systems, workflows, and business processes.

1. Direct identifiers

Direct identifiers independently identify an individual without requiring additional context. These are the most visible and most clearly regulated forms of PII.

Common examples include:

- Full names

- Email addresses

- Phone numbers

- Social security numbers

- Passport or driver’s license numbers

- Customer or employee account numbers

These elements are typically found in structured systems such as CRM platforms, HR systems, payroll databases, and billing applications.

However, they also appear in less controlled environments, including exported CSV files, support tickets, customer emails, and shared document repositories.

The primary risk with direct identifiers is that their exposure often triggers mandatory breach notification obligations under regulations like GDPR, CCPA, and various state-level privacy laws.

When these identifiers are compromised, organizations must assess scope quickly and accurately. Without reliable PII data discovery, determining how widely these identifiers are stored becomes a manual and error-prone process.

Another practical challenge is duplication. Direct identifiers are frequently replicated across systems for analytics, reporting, or integration purposes. A customer’s email address may appear in marketing tools, sales dashboards, and support logs.

Effective automated PII detection must identify these replicas so teams can evaluate exposure across the entire data lifecycle, not just in the system of origin.

2. Quasi-identifiers and sensitive attributes

Quasi-identifiers do not independently identify a person but can do so when combined with other data points. These are often underestimated in PII discovery programs.

Examples include:

- Date of birth

- ZIP code or postal code

- IP address

- Device identifiers

- Demographic attributes such as gender or job title

- Behavioral patterns such as browsing history or purchase activity

In many data environments, these attributes are used for analytics and personalization. Teams may treat them as operational data rather than personal data. However, seemingly generic attributes can uniquely identify individuals, especially in smaller populations or niche datasets.

For example, a dataset containing birth date, ZIP code, and gender may not include names, yet it can still be used to re-identify individuals when cross-referenced with public records or other internal systems.

This is why PII data discovery cannot rely solely on column names or obvious patterns. It must analyze combinations of attributes and contextual relationships.
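One way to make the combination risk concrete is to count how many records share each combination of quasi-identifier values. The size of the smallest group is the "k" in k-anonymity, and a group of size 1 means at least one person is unique on those attributes. The sketch below assumes rows are plain dictionaries. The function name and field names are hypothetical.

```python
from collections import Counter


def smallest_group_size(rows: list[dict], quasi_identifiers: list[str]) -> int:
    """Return the size of the smallest group of rows that share the same
    values for the given quasi-identifier fields (0 if there are no rows)."""
    groups = Counter(
        tuple(row[field] for field in quasi_identifiers) for row in rows
    )
    return min(groups.values(), default=0)
```

Running this over birth date, ZIP code, and gender for a dataset without names gives a quick signal of whether re-identification by cross-referencing is plausible. It is a rough screening measure and not a full re-identification risk assessment.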

Sensitive attributes add another layer of risk because they often fall under heightened regulatory scrutiny.

These include:

- Financial transaction history

- Health records or medical information

- Biometric data such as fingerprints or facial recognition templates

- Precise location tracking data

Such data elements are often subject to stricter protection requirements under regulations like HIPAA or GDPR. Exposure of health or financial data can lead to significant reputational harm, even if no direct identifiers are disclosed.

A common operational issue arises when sensitive attributes are extracted for analytics purposes.

For example, healthcare organizations may create de-identified datasets for research. If identifiers are not fully removed or if re-identification is possible through quasi-identifiers, the dataset may still carry regulatory risk. Robust PII discovery helps detect these residual risks before they escalate.

3. Regulated data under GDPR, CCPA, and HIPAA

Different privacy regulations define personal data in distinct ways, but they share a common requirement: organizations must know what personal data they collect, where it resides, how it is used, and who can access it. This is where PII data discovery becomes essential.

Under GDPR, personal data is defined broadly as any information relating to an identified or identifiable natural person.

This includes obvious identifiers such as names and ID numbers, but also indirect identifiers like online identifiers, location data, and factors specific to physical, economic, or social identity.

In practice, this means that even IP addresses, cookie identifiers, or device IDs stored in analytics systems can fall within scope. For organizations operating in the EU or serving EU residents, PII data discovery must extend beyond core business systems and into marketing platforms, web logs, and customer engagement tools.

CCPA expands the concept of personal information further by including household-level identifiers and inferences drawn from other data.

For example, if a company profiles a household based on purchasing behavior or connected devices, that derived data can still qualify as regulated personal information.

This creates a practical challenge for sensitive data discovery. Detection mechanisms must identify not only raw identifiers but also data elements that describe or infer characteristics about individuals or households.

HIPAA introduces a different layer of complexity by defining protected health information as health-related data linked to an individual. In healthcare and related sectors, this includes medical records, treatment information, billing data, and even appointment schedules when tied to patient identifiers.

PII data discovery in this context must account for structured clinical systems, but also scanned documents, physician notes, and communication records that may contain embedded health information.

Across all three frameworks, the regulatory theme is accountability. Regulators expect organizations to maintain records of processing activities, fulfill subject access requests, and apply appropriate safeguards to sensitive data.

These obligations are not feasible without accurate, continuously updated visibility into where regulated data resides.

For PII data discovery, the practical takeaway is clear. Detection systems must do more than identify patterns. They must map detected attributes to regulatory categories so organizations can understand which laws apply to which datasets.
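Mapping detected attributes to regulatory categories can be pictured as a lookup from attribute type to candidate frameworks. The sketch below is deliberately incomplete and uses only the examples mentioned in this article. The attribute labels and the `FRAMEWORKS_BY_ATTRIBUTE` table are hypothetical, and which law actually applies to a dataset is a legal determination that a lookup table cannot make.

```python
# Illustrative and incomplete: entries mirror only the examples in this
# article. An attribute listed under one framework may also be covered
# by others; real mappings should come from privacy and legal teams.
FRAMEWORKS_BY_ATTRIBUTE = {
    "ip_address": {"GDPR"},
    "cookie_id": {"GDPR"},
    "household_inference": {"CCPA"},
    "medical_record": {"HIPAA"},
    "appointment_schedule": {"HIPAA"},
}


def frameworks_for_dataset(detected_attributes: list[str]) -> set[str]:
    """Return the candidate frameworks suggested by a dataset's detected attributes."""
    frameworks = set()
    for attribute in detected_attributes:
        frameworks |= FRAMEWORKS_BY_ATTRIBUTE.get(attribute, set())
    return frameworks
```

Tagging each dataset with its candidate frameworks at discovery time is what lets later reports be filtered by regulation instead of being rebuilt by hand for every audit.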

Operational awareness means that once PII is discovered, it is classified in a way that aligns with applicable compliance frameworks.

This allows security teams to prioritize controls, privacy teams to manage consent and access requests, and governance teams to generate defensible documentation during audits.

Organizations searching for PII data discovery solutions often ask whether detection alone is sufficient for compliance. The answer in practice is no. Discovery must be connected to classification, reporting, and access governance to meet regulatory expectations.

When sensitive data discovery is aligned with GDPR, CCPA, and HIPAA definitions, it becomes a foundation for ongoing data privacy compliance rather than a one-time audit exercise.

PII data discovery vs traditional data discovery

PII data discovery and traditional data discovery both aim to create visibility across the data landscape. The difference becomes clear when risk enters the equation.

One focuses on cataloging what exists. The other focuses on identifying what is sensitive, regulated, and potentially exposed. Understanding this distinction is essential for modern security and compliance strategies.

| Dimension | Traditional data discovery | PII data discovery |
| --- | --- | --- |
| Primary focus | Identifying datasets and metadata | Identifying personally identifiable and sensitive data |
| Sensitivity awareness | Manual tagging | Built-in automated PII detection |
| Discovery approach | Metadata scans | Pattern matching plus ML classification |
| Context understanding | Field-level visibility | Context-aware cross-field analysis |
| Unstructured data coverage | Limited | Native support for files, documents, logs |
| False-positive handling | Manual review | Confidence scoring and validation workflows |
| Automation level | Periodic scans | Continuous monitoring |
| Compliance readiness | Indirect | Direct support for GDPR, CCPA, HIPAA |
| Operational outcome | Inventory visibility | Risk-aware visibility tied to controls |

Key features to evaluate in a PII data discovery platform

Not all PII data discovery tools are built for enterprise complexity. Some focus narrowly on pattern matching in databases. Others support broader sensitive data discovery across cloud and SaaS environments.

Evaluating features carefully helps organizations avoid fragmented tooling, redundant scans, and manual reconciliation between systems.

A mature PII data discovery platform should support automation, accuracy, scalability, and integration with governance and security controls. Below are the features that matter most when assessing enterprise readiness.

1. Automated and continuous PII data discovery

In modern data environments, change is constant. New tables are created in data warehouses. Developers add columns during application updates. Business teams export datasets for analysis. SaaS platforms sync records across systems. Static or one-time scans cannot keep up with this pace.

Scheduled scans are a starting point, but continuous monitoring is more resilient. Continuous PII data discovery means the system can detect:

- Newly created data sources

- Schema changes in existing databases

- New columns that may store personal data

- New files uploaded to shared storage

- New integrations that replicate PII into additional platforms

Automatic detection of new data sources is especially important in cloud-native environments. Teams frequently provision new storage buckets, containers, or analytics workspaces without centralized oversight.

If the discovery platform does not detect these automatically, blind spots persist until the next audit cycle.

Monitoring schema changes is another practical requirement. A developer might add a “national_id” field to a customer table or introduce a free-text notes column that begins to store sensitive data.

Continuous PII detection identifies these changes early, allowing governance teams to apply classification and controls before exposure expands.

Alerts for newly introduced sensitive fields provide operational value. Instead of reviewing static reports, security and privacy teams can respond proactively when high-risk PII appears in unexpected systems.

Support for cloud-native environments is also critical. Many organizations operate across multiple cloud providers, hybrid environments, and SaaS platforms.

A PII data discovery platform should connect to structured databases, object storage, collaboration tools, and application logs without requiring custom integration work for each source.

2. Accuracy, confidence scoring, and false-positive control

Accuracy determines whether a PII data discovery program is trusted or ignored. If a tool flags thousands of false positives, teams quickly lose confidence and stop acting on results. Conversely, false negatives create hidden risk by missing actual sensitive data.

High-quality PII discovery software balances detection sensitivity with precision. Key mechanisms include:

- Confidence scoring that indicates the likelihood a detected field truly contains PII

- Contextual validation that examines surrounding fields, data patterns, and usage context

- Field correlation that evaluates combinations of attributes rather than isolated matches

- Custom rule tuning to align detection with organizational policies and industry requirements

Custom rule tuning is especially important in specialized industries. Healthcare organizations may need tailored detection rules for clinical codes. Financial institutions may need rules aligned with payment card and account data.

A platform that supports rule customization reduces noise and improves alignment with real risk exposure.

Accuracy also affects workflow efficiency. When discovery results are reliable, teams can automate downstream actions such as classification tagging, masking policies, or access reviews.

When results are inconsistent, every finding requires manual validation, which undermines scalability.

Trust is the central issue. When security, privacy, and governance teams trust the outputs of automated PII detection, they use it as a foundation for risk assessments, compliance reporting, and control enforcement.

When outputs are noisy, discovery becomes an unused reporting layer rather than an operational control.

In evaluating the best PII data discovery tools, organizations should test detection accuracy against real datasets, examine false-positive rates, and confirm that the platform provides transparency into how confidence scores are calculated.

Precision and clarity are what turn automated data scanning into a dependable part of enterprise data security and privacy compliance.

3. Context-aware discovery across structured and unstructured data

Sensitive data rarely lives only in relational tables with clearly labeled columns. In many organizations, high-risk personal information appears in less structured and less governed environments.

Modern enterprise ecosystems include:

- PDFs stored in shared drives

- Email threads between support teams and customers

- Application and system logs

- Slack or Teams message exports

- Contract repositories and document management systems

These sources often contain free-text fields where employees paste screenshots, include identifiers in comments, or attach documents containing personal data. Traditional data discovery tools that focus only on structured databases miss this layer of exposure.

Context-aware PII data discovery addresses this gap by combining pattern recognition with machine learning classification.

Pattern recognition identifies recognizable formats such as email addresses or national ID numbers. Machine learning models analyze surrounding language and metadata to determine whether the detected string is truly personal data and in what context it is being used.

Free-text analysis is particularly important as AI-generated content increases. Analytics teams may generate summaries that include inferred characteristics.

Customer service chat logs may contain detailed descriptions of personal circumstances. These scenarios require sensitive data discovery tools that understand semantic context, not just data formats.

For organizations evaluating PII data discovery solutions, unstructured data coverage should be a core requirement. If a platform cannot scan documents, communications, and file repositories, it leaves a significant blind spot in enterprise risk management.

4. Integration with data classification, masking, and access governance

Integration with data classification frameworks ensures that detected PII is tagged according to sensitivity policies. This enables consistent labeling across systems and supports reporting aligned with regulatory requirements.

When classification is automated, teams avoid the inconsistency of manual tagging.

Masking and encryption policies depend on accurate discovery. If a system identifies that a database column contains social security numbers, it should trigger masking in non-production environments or encryption at rest and in transit.

Without integration, teams must manually translate discovery findings into configuration changes, which increases the risk of delay or oversight.
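As a small illustration of what a masking policy might do once a column has been identified as holding social security numbers, the sketch below keeps only the last four digits. The pattern covers just the common `NNN-NN-NNNN` layout, and both `SSN_RE` and `mask_ssn` are hypothetical names. A real integration would normally apply masking in the database or pipeline layer instead of in ad hoc code.

```python
import re

# Matches the common NNN-NN-NNNN layout only; other layouts are not handled.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-(\d{4})\b")


def mask_ssn(text: str) -> str:
    """Replace all but the last four digits of any SSN-formatted value."""
    return SSN_RE.sub(r"***-**-\1", text)
```

Calling `mask_ssn("SSN 123-45-6789 on file")` returns `"SSN ***-**-6789 on file"`, which keeps enough of the value for support workflows while removing the full identifier from non-production copies.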

Role-based access controls are another critical connection point. Once sensitive data is identified, organizations need to verify who has access and whether that access aligns with policy.

PII data discovery integrated with access governance allows teams to cross-reference detected sensitive datasets with user entitlements. This supports zero trust principles by ensuring that only authorized roles can access regulated personal data.
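The cross-reference between sensitive datasets and entitlements amounts to a set difference per dataset. The sketch below assumes entitlements and approved roles are available as dictionaries of role sets. Those structures, and names like `hr.payroll` in the usage note, are hypothetical.

```python
def excess_access(
    sensitive_datasets: set[str],
    entitlements: dict[str, set[str]],
    approved_roles: dict[str, set[str]],
) -> dict[str, set[str]]:
    """Return, per sensitive dataset, the roles that can access it
    but are not on its approved list."""
    findings = {}
    for dataset in sensitive_datasets:
        granted = entitlements.get(dataset, set())
        allowed = approved_roles.get(dataset, set())
        extra = granted - allowed
        if extra:
            findings[dataset] = extra
    return findings
```

If discovery marks `hr.payroll` as sensitive and an `analyst` role holds access that policy grants only to `hr_admin`, the function reports that role for review. Datasets that discovery has not flagged are skipped, which keeps access reviews focused on regulated data.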

Lineage tracking adds additional value. Data often flows from operational systems into analytics platforms, reporting dashboards, and external integrations. When discovery results are linked to lineage metadata, teams can trace how personal data moves across environments.

This visibility helps during incident response and compliance reporting because it clarifies downstream impact.
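Tracing downstream impact over lineage metadata is a graph traversal. The sketch below assumes lineage is stored as a dictionary that maps each system to the systems it feeds, and it uses a breadth-first search that tolerates cycles. The representation and the system names in the usage note are hypothetical.

```python
from collections import deque


def downstream_systems(lineage: dict[str, list[str]], source: str) -> set[str]:
    """Return every system reachable from `source` by following lineage edges."""
    seen = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for target in lineage.get(node, []):
            # Skip systems already visited and the source itself so that
            # cycles in the lineage graph cannot cause an endless loop.
            if target not in seen and target != source:
                seen.add(target)
                queue.append(target)
    return seen
```

During an incident involving a hypothetical `crm` system, the result lists every warehouse, dashboard, or feature store that may hold copies of the affected personal data.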

How to measure the effectiveness of PII data discovery

Many organizations assume that running frequent scans means their PII data discovery program is working. In reality, scan frequency and data volume tell you very little about risk reduction.

Effectiveness must be measured in terms of visibility, accuracy, response time, and control enforcement.

The goal of PII data discovery is to maintain a defensible, continuously updated understanding of where sensitive data lives and how it is governed.

1. Percentage of data assets scanned for PII

Coverage is the foundation of effectiveness. If large portions of your data estate are not scanned, your risk assessment is incomplete.

This metric should answer practical questions such as:

- Are all production databases connected to the discovery platform?

- Are non-production environments included?

- Are cloud storage buckets, SaaS platforms, and collaboration tools covered?

- Are structured and unstructured sources both in scope?

Partial coverage creates a false sense of security. Effective programs track coverage across business units, domains, and environments to identify blind spots.

Coverage also needs to evolve. As new systems are onboarded, the percentage of assets scanned should remain high. A drop in coverage may indicate that new repositories are being created without governance alignment.
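The coverage metric itself is simple arithmetic: the share of known assets that have been scanned. The sketch below assumes the asset inventory and the scanned list are both available as sets of asset identifiers, which is a hypothetical simplification.

```python
def coverage_percent(inventory: set[str], scanned: set[str]) -> float:
    """Return the percentage of inventoried assets that have been scanned."""
    if not inventory:
        return 0.0
    # Intersect so that scanned assets missing from the inventory
    # cannot push coverage above 100%.
    return 100.0 * len(inventory & scanned) / len(inventory)
```

Computing this per business unit or environment, and not only as one global number, is what exposes the blind spots. The metric is only as good as the inventory behind it, so assets that never reach the inventory remain invisible to it.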

2. False-positive and false-negative rates

Accuracy is a critical performance indicator for automated PII detection. A high false-positive rate leads to alert fatigue, wasted review time, and declining trust in the platform. A high false-negative rate creates hidden exposure that undermines compliance and security efforts.

Organizations should measure:

- How often flagged fields are confirmed as true PII

- How often real PII is discovered outside of automated scans

- Whether detection quality improves as rules and models are tuned

False negatives are especially dangerous because they are harder to detect. One common approach is to perform targeted validation exercises.

For example, governance teams may manually review a sample dataset to confirm whether automated sensitive data discovery correctly identified all relevant fields.

Tracking these rates over time helps organizations refine rule sets, adjust classification logic, and improve contextual detection models.
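A validation exercise of this kind can be scored with a few set operations. The sketch below is a minimal example that assumes a manually reviewed sample is available as ground truth. The field names are invented, and the two ratios are simplified stand-ins for false-positive and false-negative rates: the share of flags that were wrong, and the share of real PII that was missed.

```python
def detection_rates(flagged_fields, confirmed_pii_fields):
    """Compare automated flags against a manually reviewed sample."""
    flagged = set(flagged_fields)
    confirmed = set(confirmed_pii_fields)

    true_positives = len(flagged & confirmed)
    false_positives = len(flagged - confirmed)   # flagged, but not PII
    false_negatives = len(confirmed - flagged)   # PII, but never flagged

    flagged_total = true_positives + false_positives
    pii_total = true_positives + false_negatives

    return {
        # Share of flagged fields that reviewers rejected
        "wrong_flag_share": false_positives / flagged_total if flagged_total else 0.0,
        # Share of real PII fields that the scan missed
        "missed_pii_share": false_negatives / pii_total if pii_total else 0.0,
    }


# Hypothetical sample: what the scanner flagged vs. what reviewers confirmed
flagged = ["users.email", "users.phone", "users.dob", "products.sku"]
confirmed = ["users.email", "users.phone", "users.dob", "users.ssn_last4"]

rates = detection_rates(flagged, confirmed)
```

Recording these two numbers after each tuning cycle gives a simple trend line for whether rule and model changes are actually improving detection quality.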

3. Time to detect newly introduced PII

Time to detect newly introduced PII measures how quickly the discovery platform identifies:

- New columns added to existing tables
- New datasets containing personal data
- New files uploaded to shared repositories
- New integrations replicating PII across systems

If detection occurs only during quarterly scans, exposure can persist for months. Continuous or near real-time monitoring significantly reduces this window.

This metric directly supports risk reduction because earlier detection enables faster application of masking, encryption, and access controls.

In environments where development teams frequently deploy schema updates, this metric becomes especially important. It ensures governance keeps pace with engineering changes.
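One way to quantify this metric is to record when a PII-bearing asset was introduced and when the discovery process first flagged it, then summarize the lag. The sketch below assumes both timestamps are available (for example from deployment logs and scan results). The event list is hypothetical.

```python
from datetime import datetime
from statistics import median

# Hypothetical events: (what changed, when it was introduced, when it was detected)
EVENTS = [
    ("new column", datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 15, 0)),
    ("new dataset", datetime(2024, 3, 2, 0, 0), datetime(2024, 3, 3, 0, 0)),
    ("new file", datetime(2024, 3, 5, 12, 0), datetime(2024, 3, 5, 14, 0)),
]


def detection_lag_hours(events):
    """Return the detection lag in hours for each event."""
    return [
        (detected - introduced).total_seconds() / 3600
        for _kind, introduced, detected in events
    ]


lags = detection_lag_hours(EVENTS)
summary = {"median_hours": median(lags), "max_hours": max(lags)}
```

The maximum matters as much as the median here, since a single asset that goes undetected for a long period represents the exposure window the metric is meant to shrink.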

4. Coverage across structured and unstructured sources

Many organizations initially focus on databases because they are easier to scan. However, sensitive data often appears in less structured formats such as PDFs, support emails, chat transcripts, and log files.

Measuring coverage across both structured and unstructured sources provides a more accurate view of enterprise exposure. This includes verifying that:

- Document repositories are scanned
- Communication archives are included where policy allows
- Log management systems are reviewed for embedded identifiers
- AI-generated reports and analytics outputs are assessed for personal data

Without this broader coverage, PII data discovery remains incomplete. Unstructured environments often contain the most unpredictable and high-risk data exposures.
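To make the idea of identifiers embedded in logs concrete, here is a minimal pattern-based sketch that checks log lines for email addresses and US Social Security number formats. The two regular expressions are deliberately simple assumptions for illustration. Production detection typically adds validation, context rules, and many more patterns.

```python
import re

# Simplified illustrative patterns, not exhaustive detectors
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scan_lines(lines):
    """Return (line number, pattern label) for every line that matches a pattern."""
    findings = []
    for line_number, line in enumerate(lines, start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


# Hypothetical log excerpt
SAMPLE_LOG = [
    "2024-03-01 INFO login ok user=jane.doe@example.com",
    "2024-03-01 DEBUG cache warmed in 120ms",
    "2024-03-01 WARN payload contained ssn=123-45-6789",
]

findings = scan_lines(SAMPLE_LOG)
```

The function reports only the line number and pattern label rather than the matched value, so the scan output does not become another copy of the sensitive data.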

5. Reduction in DSAR and audit response time

Operational efficiency is another meaningful measure of effectiveness. When PII data discovery is mature and integrated with governance workflows, response times for data subject access requests and audits should decrease.

Teams should evaluate:

- How quickly they can identify all systems containing an individual’s data
- How easily they can generate reports that list categories of personal data
- Whether they can demonstrate classification and access controls during audits

Organizations with automated discovery and centralized governance typically spend less effort on compliance responses than those relying on manual mapping, because the inventory they need already exists. Automation and integration are therefore not just technical improvements. They have measurable operational impact.

If audit preparation still requires weeks of manual inventory work, the discovery program may not be delivering full value.
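When classification results are stored in a queryable inventory, a report of personal data categories per system becomes a short aggregation rather than a manual exercise. The sketch below assumes a flat inventory of classified columns. The system names, columns, and category labels are hypothetical.

```python
from collections import defaultdict

# Hypothetical classified inventory: (system, table, column, PII category or None)
INVENTORY = [
    ("crm", "contacts", "email", "Contact"),
    ("crm", "contacts", "phone", "Contact"),
    ("billing", "invoices", "iban", "Financial"),
    ("billing", "customers", "email", "Contact"),
    ("warehouse", "events", "page_url", None),
]


def categories_by_system(inventory):
    """Return {system: sorted list of PII categories found in that system}."""
    report = defaultdict(set)
    for system, _table, _column, category in inventory:
        if category is not None:
            report[system].add(category)
    return {system: sorted(categories) for system, categories in sorted(report.items())}


report = categories_by_system(INVENTORY)
```

Systems with no classified PII, such as the `warehouse` entry above, drop out of the report, which keeps DSAR and audit work focused on the systems that actually hold personal data.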

How OvalEdge does it

Many organizations treat PII data discovery as a one-time scanning activity. The outcome is often a static list of findings that requires manual follow-up across different teams and tools.

The real challenge begins after detection, when classification, review, and enforcement must happen consistently.

OvalEdge approaches PII data discovery through AI-based Data Classification Recommendations embedded within its governance framework.

Instead of separating discovery from classification, it applies machine learning models across defined domains, such as “Privacy,” to identify potential PII elements at scale.

1. AI-based data classification recommendations at scale

The Data Classification Recommendation feature scans structured and unstructured data across connected systems and generates suggested classifications for potential PII.

Rather than automatically labeling fields without oversight, it presents each recommendation to users in a simple Yes or No confirmation workflow.

This human-in-the-loop model reduces manual classification effort while preserving accuracy and governance control. Teams no longer need to review every dataset from scratch. They review targeted, model-driven suggestions instead. This improves consistency across domains and prevents fragmented tagging practices.

2. Automated alerts for continuous compliance

Classification is not static. New fields are added. New data sources are introduced. To address this, OvalEdge generates automatic alerts whenever potential PII is detected.

This supports ongoing compliance rather than periodic review cycles. Privacy and governance teams can respond to changes as they occur instead of discovering new sensitive data during audits.
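The general idea of change-driven alerting can be sketched by comparing two schema snapshots and flagging newly added columns whose names suggest personal data. This is a generic illustration and not OvalEdge's implementation or API. The name hints, the snapshot format, and the table names are all assumptions.

```python
# Illustrative name fragments that often indicate personal data
PII_NAME_HINTS = ("email", "phone", "ssn", "dob", "address")


def new_pii_candidates(previous, current):
    """Return 'table.column' for columns added since the previous snapshot
    whose names contain a PII hint. Snapshots map table name -> set of columns."""
    alerts = []
    for table, columns in sorted(current.items()):
        added = columns - previous.get(table, set())
        for column in sorted(added):
            if any(hint in column.lower() for hint in PII_NAME_HINTS):
                alerts.append(f"{table}.{column}")
    return alerts


# Hypothetical snapshots taken before and after a deployment
previous_snapshot = {"users": {"id", "name"}}
current_snapshot = {
    "users": {"id", "name", "email_address"},
    "orders": {"id", "shipping_address", "total"},
}

alerts = new_pii_candidates(previous_snapshot, current_snapshot)
```

Name matching alone will miss PII stored under unhelpful column names, so a check like this is best treated as a fast first signal that triggers a fuller content-based scan.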

3. Embedded within broader governance workflows

Discovery findings flow directly into governance processes with OvalEdge. Once PII is confirmed through the recommendation workflow, classifications are applied consistently and become part of the organization’s managed data inventory.

This integration strengthens audit readiness because classification decisions are traceable and reviewable. It also reduces handoffs between security, privacy, and governance teams by keeping detection, review, and classification within a unified environment.

For organizations aiming to mature their PII data discovery capabilities, this approach shifts the focus from static scanning to scalable, AI-assisted classification supported by human validation and continuous monitoring.

4. Access intelligence to connect discovery with entitlement context

Knowing where PII resides is only part of the equation. Organizations also need to know who has access to that data and whether access aligns with policy.

OvalEdge integrates PII data discovery with access intelligence. This enables teams to analyze detected sensitive datasets alongside user entitlements and role-based access controls.

For example, if a dataset containing financial identifiers is accessible to a broad group of users, governance and security teams can review whether that access is justified. This supports risk-based access management and aligns with zero-trust principles.

By linking discovery findings to access context, organizations can prioritize remediation efforts based on both data sensitivity and exposure level.
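A simple way to picture this prioritization is a score that multiplies a sensitivity weight by the number of users with access. The sketch below is a generic illustration rather than how any specific product ranks findings. The weights, dataset names, and access counts are hypothetical.

```python
# Hypothetical sensitivity weights per data category (higher = more sensitive)
SENSITIVITY = {"financial": 3, "contact": 2, "internal": 1}

# Hypothetical discovery findings joined with entitlement counts
DATASETS = [
    {"name": "payroll", "category": "financial", "users_with_access": 40},
    {"name": "newsletter_list", "category": "contact", "users_with_access": 50},
    {"name": "office_seating", "category": "internal", "users_with_access": 60},
]


def rank_for_remediation(datasets):
    """Return dataset names ordered by sensitivity weight x number of users with access."""
    scored = [
        (SENSITIVITY[d["category"]] * d["users_with_access"], d["name"])
        for d in datasets
    ]
    return [name for _score, name in sorted(scored, reverse=True)]


priority_order = rank_for_remediation(DATASETS)
```

In this example the most widely shared dataset ranks last because its content is the least sensitive, which is the point of combining both dimensions instead of reviewing access breadth on its own.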

Treating PII data discovery as an isolated compliance exercise often leads to fragmented processes. Scan results are exported, reviewed manually, and handed off to other teams for action. This increases the risk of delays, inconsistent enforcement, and incomplete reporting.

For organizations seeking to mature their sensitive data discovery capabilities, this integrated model addresses a core challenge: moving from identification to sustained risk management.

Instead of producing static reports, PII data discovery becomes an ongoing, governed process that supports audit readiness, data privacy compliance, and enterprise-wide data security.

Conclusion

Without visibility into personal data, organizations cannot meaningfully enforce access controls, apply masking policies, respond to data subject requests, or manage breach impact. Discovery transforms blind spots into actionable awareness.

If you are evaluating PII discovery software or exploring the best PII data discovery tools, focus on integration, automation, and contextual intelligence. Prioritize platforms that connect discovery to classification, lineage, and governance rather than isolating it as a compliance checkbox.

Ask yourself:

- Do we know where sensitive data lives across cloud and SaaS environments?
- Can we detect new exposure in real time?
- Are discovery outputs connected to enforceable controls?

Sustainable PII visibility does not happen through isolated scans or spreadsheet inventories. It requires a system that continuously identifies sensitive data, classifies it accurately, understands how it moves, and ensures access aligns with policy. When discovery is disconnected from governance, teams are left reconciling reports instead of reducing risk.

This is where an integrated governance platform becomes critical. PII data discovery delivers real impact only when it connects directly to classification workflows, lineage intelligence, and access controls within the same operating framework.

OvalEdge brings PII data discovery into a unified, AI-driven governance platform. Its AI-based data classification recommendations identify sensitive data across structured and unstructured sources at scale, while human validation ensures accuracy and accountability.

Automated alerts surface new exposure as it appears, and built-in governance workflows connect discovery directly to access management, lineage visibility, and compliance reporting.

If audit readiness, continuous privacy compliance, and AI-ready trusted data are priorities, explore how OvalEdge governs sensitive data end to end.

Book a demo to see how AI-driven PII data discovery becomes part of a broader, governed data foundation.

FAQs

1. Can PII data discovery work without full data classification?

PII data discovery can function independently, but results are harder to operationalize. Without classification, teams struggle to prioritize risk, enforce controls, or apply consistent masking and access policies across sensitive data.

2. How often should PII data discovery scans run in enterprise environments?

In dynamic environments, PII discovery should run continuously or on frequent schedules. Periodic scans miss new fields, schema changes, and shadow data introduced between review cycles.

3. Does PII data discovery impact system performance?

Modern PII data discovery tools use lightweight scanning and metadata-driven techniques to minimize performance impact. Poorly designed scans that require full data reads can create latency if not carefully managed.

4. Can PII data discovery identify sensitive data created by AI and analytics teams?

Yes. Advanced automated PII detection solutions can detect sensitive data generated by analytics pipelines, feature stores, and AI training datasets. This helps organizations manage risk from derived or inferred personal data.

5. How does PII data discovery support incident response after a data breach?

PII data discovery accelerates incident response by identifying affected data assets, owners, and locations. This improves reporting accuracy and reduces investigation time.

6. What teams typically own PII data discovery inside large organizations?

Ownership is usually shared between security, privacy, and data governance teams. Security focuses on exposure risk, privacy ensures regulatory alignment, and governance maintains accurate, continuously updated data visibility.
