Designing Scalable Data Products: Principles and Patterns That Work

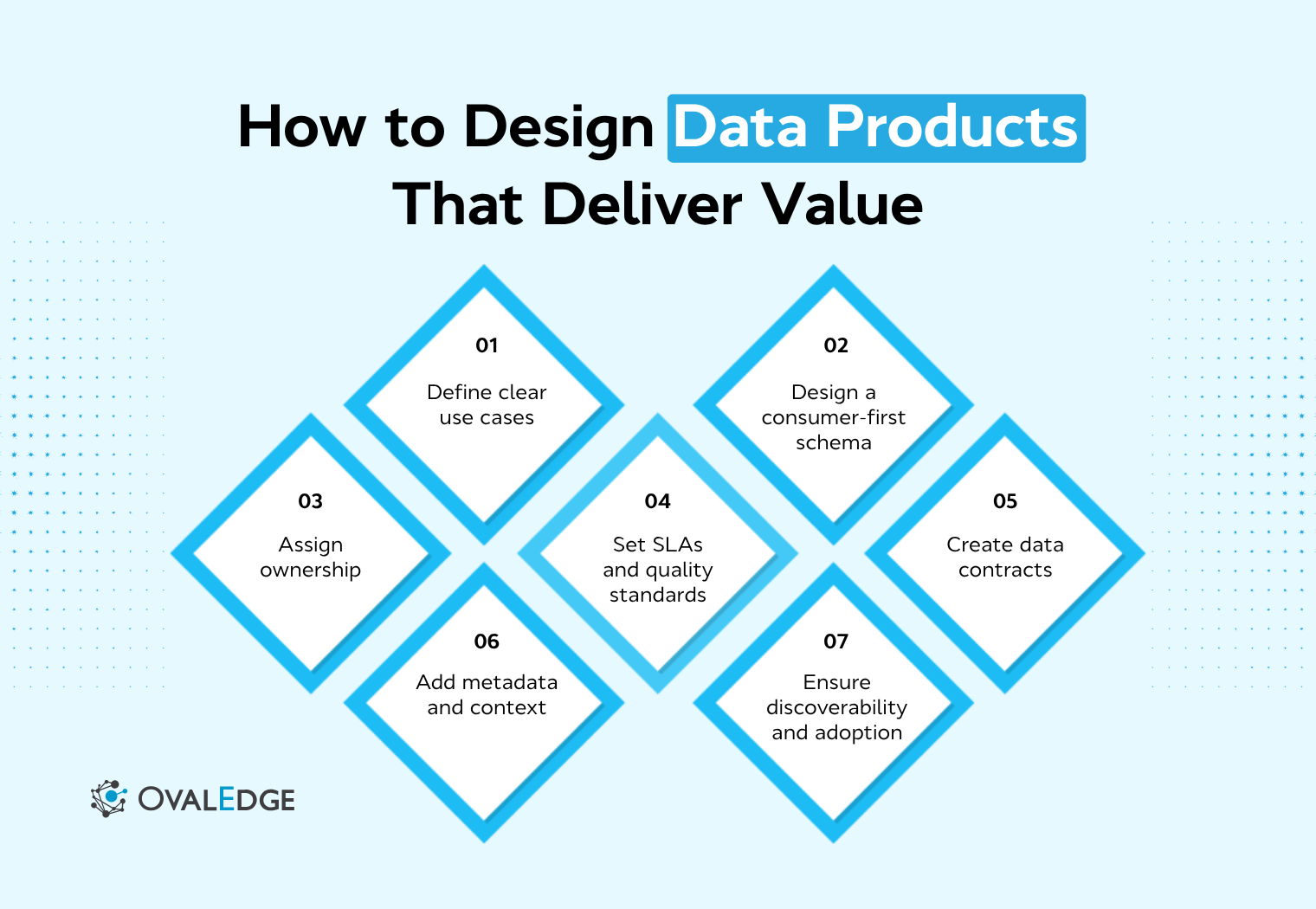

Designing data products upfront shifts focus from pipelines to value delivery. Clear schemas aligned to use cases, defined ownership, formal contracts, measurable SLAs, and rich metadata create trustworthy, reusable assets. Embedding governance and planning discoverability ensures adoption. The guide outlines principles and a step-by-step framework to avoid pitfalls, improve quality, and build scalable ecosystems supporting confident, data-driven decisions.

Teams often invest months building data assets that look complete on paper but still go unused. The issue is rarely the pipeline itself. It is the absence of design decisions before development begins.

Data product design defines how a data product should be structured, owned, and governed before it is built. Without it, teams end up with unclear schemas, missing SLAs, weak ownership, and products that are difficult to use or scale.

This guide focuses on how to design a data product before implementation. It covers the core principles, the key components to define upfront, and a step-by-step framework to create data products that are usable, reliable, and ready for enterprise adoption.

What is data product design?

Data product design is the process of defining a data asset’s structure, ownership, quality expectations, access model, and consumer contracts before it is built, so it can be trusted, reused, and discovered across the organization. It moves teams away from ad hoc datasets and pipeline-first builds toward a more structured approach where data is intentionally designed for consumption from the start. This ensures the output is usable and reliable, not just technically correct.

It differs from data engineering. Engineering builds and operates pipelines, while design defines what those pipelines should produce, who they serve, and the standards they must meet.

Design also sits at the foundation of a data mesh, where domain teams create data products using shared standards before implementation. This alignment improves consistency and reuse. According to an article published by Deloitte in 2024, organizations that treat data as a product with defined ownership, quality standards, and governance see higher reuse and trust across teams.

When design is skipped, schemas reflect source systems, SLAs remain undefined, and contracts are missing, making the data difficult to trust or use consistently.

Core principles of data product design

Strong data product design principles ensure that what gets built is usable, trusted, and reusable across the organization. They shift the focus from delivering pipelines to delivering value for consumers, and they guide every upfront decision before development begins.

1. Design for the consumer, not the producer

A data product should reflect how consumers query and use data, not how source systems store it. Schema design, naming, and grain must align with business questions.

For example, a “raw_events” table requires consumers to define logic themselves, while a “daily_active_users” product provides ready-to-use metrics. One requires interpretation. The other enables direct use.
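To make the contrast concrete, the sketch below derives a daily active users metric from raw events. The sample events and the function name are hypothetical, and "active" is assumed here to mean at least one event on that day; a real product would take that definition from the business glossary.

```python
from collections import defaultdict
from datetime import date

# Hypothetical raw events: (user_id, event_date, event_type).
raw_events = [
    ("u1", date(2024, 3, 1), "login"),
    ("u1", date(2024, 3, 1), "purchase"),
    ("u2", date(2024, 3, 1), "login"),
    ("u1", date(2024, 3, 2), "login"),
]

def daily_active_users(events):
    """Count distinct users per day (assumed definition of 'active')."""
    users_by_day = defaultdict(set)
    for user_id, event_date, _event_type in events:
        users_by_day[event_date].add(user_id)
    return {day: len(users) for day, users in sorted(users_by_day.items())}

dau = daily_active_users(raw_events)
```

Without the product, every consumer of the raw events would have to write and maintain this logic on their own, and each copy could drift toward a slightly different definition.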

Designing for the consumer reduces dependency on data teams for explanation and makes self-serve analytics genuinely possible.

2. Define ownership and accountability upfront

Every data product needs a named owner: not a team, but a specific individual who is accountable for its quality, reliability, and evolution. In enterprise environments, this owner typically sits within the domain that produces the data, aligning with the domain ownership model in data mesh architectures.

Ownership is not just a label. The data product owner makes decisions about schema changes, responds to quality incidents, manages SLA breaches, and communicates with consumers when something breaks. Without a named owner, these responsibilities fall into a gap. Schema changes happen without warning. Quality issues linger without resolution. Consumer trust erodes because there is no clear person to hold accountable or escalate to.

Defining ownership upfront also means defining the operating model: who maintains the pipeline, who reviews changes, and what the escalation path looks like.

3. Treat data as a product with defined expectations

A dataset is passive. A data product makes promises. It commits to a defined structure, a freshness guarantee, an availability target, and a documented interface. These commitments are what allow consumers to build on top of a data product with confidence, knowing what they will receive and when.

This distinction matters because trust is not assumed; it is earned through consistency. Poor data quality costs organizations an average of $12.9 million per year, according to 2023 research from IBM, not because data teams are careless, but because quality expectations were never formally defined in the first place. When expectations are explicit and measurable, quality becomes enforceable rather than aspirational.

4. Standardize for reuse and interoperability

Reusable data products depend on consistent naming conventions, shared business keys, and aligned taxonomies across domains. A “customer_id” that means one thing in the orders domain and something else in the marketing domain cannot be safely joined, which means consumers cannot trust the result of combining those products.

Standardization solves this. When business keys are consistent, when field names follow a shared convention, and when taxonomies are aligned to a common business glossary, products from different domains can be composed without bespoke transformation logic. The compounding effect is significant: each new standardized product becomes easier to integrate, reducing the cost of building new use cases and increasing the value of existing products.

5. Embed governance and quality into the design

Governance and quality that are added after deployment are always reactive. A data product designed with governance built in is governed continuously from the start.

In practice, this means defining completeness thresholds, timeliness requirements, and accuracy checks as part of the design blueprint, not as a post-launch audit. It also means classifying sensitivity (PII, confidential, or public), defining retention policies, and setting access tiers before the product is built. When these decisions are embedded in design, they are implemented consistently in the pipeline rather than patched on afterward. Compliance becomes a property of the product, not a periodic review.

What to define before building a data product?

Before any code is written, six design decisions need to be locked in. Together, they form the data product blueprint that determines whether the product will be usable, trusted, and scalable across the organization.

1. Data schema and structure

Schema design is the most fundamental decision in the blueprint. It defines field names, data types, grain level, primary keys, and foreign key relationships, and it should be driven by how consumers will query the data, not by how the source system organizes it.

For a “customer_360” data product, the grain decision alone determines what use cases the product can serve. A grain of one row per customer per day supports time-series segmentation and churn analysis. A grain of one row per customer (current state) supports account-level workflows and support tooling. Both may draw from the same source tables, but they serve fundamentally different consumers. Choosing the wrong grain means consumers either cannot use the product or must reshape it before using it, which defeats the product's purpose entirely.
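The grain decision can be illustrated with a small sketch that shapes the same source rows into both grains. The row layout and function names are hypothetical, and "current state" is assumed here to mean lifetime total plus most recent activity date.

```python
from collections import defaultdict
from datetime import date

# Hypothetical source rows: (customer_id, activity_date, order_value).
source_rows = [
    ("c1", date(2024, 1, 1), 20.0),
    ("c1", date(2024, 1, 1), 5.0),
    ("c1", date(2024, 1, 2), 10.0),
    ("c2", date(2024, 1, 2), 7.5),
]

def daily_grain(rows):
    """One row per customer per day: suited to time-series analysis."""
    totals = defaultdict(float)
    for customer_id, day, value in rows:
        totals[(customer_id, day)] += value
    return dict(totals)

def current_state_grain(rows):
    """One row per customer: lifetime total and latest activity date."""
    state = {}
    for customer_id, day, value in rows:
        total, last_seen = state.get(customer_id, (0.0, day))
        state[customer_id] = (total + value, max(last_seen, day))
    return state

per_day = daily_grain(source_rows)
per_customer = current_state_grain(source_rows)
```

The daily output keeps three rows and the current-state output keeps two, so a consumer who needs a trend over time cannot recover it from the current-state grain.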

Schema decisions should be documented in a data catalog or metadata management tool from day one, so definitions are versioned, searchable, and visible to consumers before the product is even live.

2. Ownership and operating model

Every data product must have a clearly defined owner and an operating team responsible for maintaining it. The owner is accountable for quality, SLAs, schema changes, and consumer communication. The operating team, typically an engineering or domain team, handles implementation, monitoring, and incident response.

In enterprise settings, ownership aligns with business domains. The team closest to how the data is created and used is best positioned to own the product, ensure accuracy, and respond quickly when issues arise. In a data mesh model, this domain ownership is structural; it is how the architecture is designed to work.

Defining the operating model also means specifying the decision rights: who can approve a schema change, what the review process looks like, and what happens when an SLA is breached.

3. Data contracts and interfaces

A data contract formalizes what consumers can expect from a data product. It defines schema, field-level semantics, change policies, and ownership, creating a stable interface between producers and consumers.

| Contract Element | Description | Why It Matters |
| --- | --- | --- |
| Schema version | Semantic version of the current schema | Consumers know when a breaking change has occurred |
| Field definitions | Name, type, description, nullability | Eliminates ambiguity about what each field means |
| Change policy | Notice period for breaking vs. non-breaking changes | Consumers get time to adapt before changes go live |
| Owner contact | Data product owner and escalation path | Consumers know who to reach when something breaks |

Review the contract with at least one target consumer before it is finalized. This review often surfaces mismatches between what the producer assumed consumers need and what they actually use, catching schema, naming, or granularity issues before they are built in.
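One way to keep these contract elements together is a small typed structure. The sketch below is illustrative only: the class names, attribute names, notice periods, and contact values are assumptions, not a standard contract format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FieldDefinition:
    name: str
    dtype: str
    description: str
    nullable: bool

@dataclass(frozen=True)
class DataContract:
    product: str
    schema_version: str                   # semantic version, e.g. "1.0.0"
    fields: tuple                         # tuple of FieldDefinition
    breaking_change_notice_days: int      # change policy (assumed unit: days)
    non_breaking_change_notice_days: int
    owner: str                            # owner contact
    escalation_path: str

    def undocumented_fields(self):
        """Names of fields whose description is empty."""
        return [f.name for f in self.fields if not f.description.strip()]

# Hypothetical contract for illustration.
contract = DataContract(
    product="order_summary",
    schema_version="1.0.0",
    fields=(
        FieldDefinition("order_id", "string", "Unique order identifier", False),
        FieldDefinition("status", "string", "", True),
    ),
    breaking_change_notice_days=30,
    non_breaking_change_notice_days=7,
    owner="jane.doe@example.com",
    escalation_path="#order-data-support",
)
```

A check such as `contract.undocumented_fields()` gives reviewers a quick list of fields that still lack a description before the contract goes to a consumer.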

4. SLAs and quality expectations

SLAs translate quality expectations into measurable, enforceable commitments. Three dimensions should be defined for every data product:

- Freshness: How recently must the data have been updated? For example: data must reflect events that occurred within 2 hours.

- Availability: What uptime is the product expected to maintain? For example: 99.5% availability during business hours.

- Completeness: What null or missing value rate is acceptable? For example: no more than 0.1% null values in required fields such as “customer_id” or “order_date”.

These definitions are not just documentation; they become the specification for automated quality checks embedded in the pipeline. When SLAs are defined at design time, monitoring can be configured before the product goes live rather than added later when issues have already eroded consumer trust.
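As a rough illustration, the three example values above can be captured in a small spec object, and the availability target can be turned into a concrete downtime budget. The class name and the 40-hour business week are assumptions made for this sketch.

```python
from dataclasses import dataclass
from datetime import timedelta

@dataclass(frozen=True)
class SlaSpec:
    max_staleness: timedelta      # freshness window
    availability_target: float    # 0.995 means 99.5%
    max_null_rate: float          # 0.001 means 0.1%

    def allowed_downtime(self, window: timedelta) -> timedelta:
        """Downtime budget inside a measurement window."""
        return window * (1 - self.availability_target)

sla = SlaSpec(
    max_staleness=timedelta(hours=2),
    availability_target=0.995,
    max_null_rate=0.001,
)

# Assumption: "business hours" add up to 40 hours per week.
weekly_budget = sla.allowed_downtime(timedelta(hours=40))
```

Under that assumption, 99.5% availability leaves roughly 12 minutes of downtime per week, which is a more tangible number to agree on with consumers than the percentage alone.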

5. Metadata and business context

Metadata provides the context a consumer needs to understand and trust a data product. It is what transforms a schema into a data product that a consumer can understand without outside help. It includes:

- Business glossary alignment: What does “active_customer” mean in this product? Does it match the organization's official definition?

- Lineage: What upstream sources feed this product? If source data changes, which products are affected?

- Sensitivity classification: Which fields contain PII, confidential data, or data subject to regulatory requirements?

Metadata management platforms centralize this context, making it searchable and governable across teams. When metadata is defined at design time and registered in a platform like OvalEdge, it travels with the product, and consumers always have access to the context they need, regardless of when they discover the product or who originally built it.
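The lineage question, which products are affected when a source changes, is a graph traversal. The sketch below assumes lineage is available as a simple mapping from each asset to the assets it feeds; the asset names are hypothetical, and a metadata platform would normally supply this graph.

```python
from collections import deque

# Hypothetical lineage: each key feeds the listed downstream assets.
LINEAGE = {
    "crm.accounts": ["customer_360"],
    "billing.invoices": ["customer_360", "order_summary"],
    "customer_360": ["churn_scores"],
    "order_summary": [],
    "churn_scores": [],
}

def downstream_of(source, lineage):
    """Return every asset reachable from `source`, sorted by name."""
    seen = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return sorted(seen)

affected = downstream_of("billing.invoices", LINEAGE)
```

Here a change to the invoices source touches all three products, including `churn_scores`, which depends on it only indirectly.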

6. Access and discoverability model

The access model defines who can use the product and how. This is a design decision and not a deployment detail. It includes:

- Interface options: Will consumers access it via SQL, a REST API, a file export, or a BI tool connection? Different consumer personas have different needs: analysts query via SQL, applications consume via API, and executives use prebuilt reports.

- Access tiers: Is this product open to all internal users, restricted to specific teams, or governed by a request-and-approval workflow?

- Catalog registration: Where will the product be published: a data catalog, an internal data marketplace, or a specific tool?

Defining the access model upfront means the product is built with the right interfaces from the start, rather than retrofitting access patterns after consumers have already run into friction.
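The three access tiers can be written down as an explicit rule so that the decision is testable rather than implied. This is a minimal sketch; the tier names, function name, and parameters are assumptions, and real enforcement would live in the warehouse or catalog's permission system.

```python
from enum import Enum

class AccessTier(Enum):
    OPEN = "open"              # all internal users
    RESTRICTED = "restricted"  # specific teams only
    APPROVAL = "approval"      # request-and-approval workflow

def can_access(tier, user_team, allowed_teams=(), has_approval=False):
    """Decide access for one user under the product's tier."""
    if tier is AccessTier.OPEN:
        return True
    if tier is AccessTier.RESTRICTED:
        return user_team in allowed_teams
    return has_approval
```

For example, `can_access(AccessTier.RESTRICTED, "marketing", allowed_teams=("finance",))` evaluates to `False`, while an approved request under the approval tier evaluates to `True`.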

Step-by-step framework to design a data product

This framework translates the blueprint decisions above into a structured, repeatable process. Each step builds on the previous one, moving from validating the need to ensuring the product can be found and used from day one.

Step 1: Identify and validate the use case

Every data product design starts with a consumer and a specific decision or workflow that the product needs to support. The goal at this step is not to define what data exists; it is to confirm that a real, recurring business need justifies the investment in a formal data product.

A use-case card is a simple tool for doing this validation. It captures four things:

| Field | Example |
| --- | --- |
| Consumer persona | Marketing analyst (campaign performance team) |
| Decision or workflow supported | Weekly channel attribution for budget allocation |
| Frequency of use | Weekly, with ad hoc queries during campaign cycles |
| Current workaround | Manual pull from three separate reports, reconciled in a spreadsheet |

If the use case is recurring and currently handled through manual workarounds, it is a strong candidate for a data product. Working backward from use cases prevents over-engineering and ensures relevance.
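A use-case card is small enough to capture as a record with one rule attached. In the sketch below, the class name and the explicit `recurring` flag are assumptions added so that the "recurring plus manual workaround" test can be applied mechanically.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class UseCaseCard:
    consumer_persona: str
    decision_supported: str
    frequency: str
    current_workaround: str
    recurring: bool  # assumption: set by whoever fills in the card

    def is_strong_candidate(self):
        """Recurring need that is currently met by a manual workaround."""
        return self.recurring and bool(self.current_workaround.strip())

card = UseCaseCard(
    consumer_persona="Marketing analyst (campaign performance team)",
    decision_supported="Weekly channel attribution for budget allocation",
    frequency="Weekly, with ad hoc queries during campaign cycles",
    current_workaround="Manual pull from three separate reports",
    recurring=True,
)
```

A one-off request, or one with no existing workaround, would fail the check and is probably better served by an ad hoc query than a formal product.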

Step 2: Define the data product boundary

A clear boundary defines what the product includes, what it excludes, and where it starts and ends relative to other products. This decision directly affects ownership, contract scope, and consumer clarity.

A useful test: can this product deliver value to at least one consumer without requiring a join with another data product? If the answer is no, the boundary is probably too narrow.

Consider the difference between “order_summary” and “order_line_items”. The “order_summary” product serves consumers who need order-level metrics like total value, status, and fulfillment date. The “order_line_items” product serves consumers analyzing SKU-level patterns, margins, or returns. They share source data but serve different use cases, different consumers, and different SLAs.

Combining them into one product makes ownership harder, schema design more complex, and consumer contracts more ambiguous. Separating them keeps each product focused and independently useful.

Step 3: Design the schema and data model

With the use case validated and the boundary defined, schema design becomes a translation exercise: how does the consumer's question map to a set of fields, a grain, and a set of keys?

Concrete decisions to make at this step:

- Grain: What does one row represent? One row per customer per day? One row per order? One row per session?

- Primary key: What uniquely identifies a row? Is it a natural key from the source, a surrogate key, or a composite key?

- Field naming: Are field names drawn from the business glossary? Does “revenue” mean gross or net? Is “customer_id” the CRM ID, the billing ID, or an internal identifier?

- Nullability: Which fields are required? Which are optional? What does a null mean in this context: missing data, not applicable, or unknown?

Each of these decisions should be documented in a data catalog before the build begins. This serves two purposes: it forces precision during design, and it gives consumers a reference point before the product is live.
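Once the primary key and nullability are written down, they can be checked against sample data before the build starts. The following is a minimal sketch with hypothetical column names and sample rows; it is not tied to any particular testing tool.

```python
def validate_rows(rows, primary_key, required):
    """Return human-readable violations of the declared key and nullability."""
    problems = []
    seen_keys = set()
    for index, row in enumerate(rows):
        key = tuple(row.get(column) for column in primary_key)
        if key in seen_keys:
            problems.append(f"row {index}: duplicate key {key}")
        seen_keys.add(key)
        for column in required:
            if row.get(column) is None:
                problems.append(f"row {index}: required field {column!r} is null")
    return problems

# Hypothetical sample with a composite key of customer_id + snapshot_date.
sample = [
    {"customer_id": "c1", "snapshot_date": "2024-03-01", "revenue": 10.0},
    {"customer_id": "c1", "snapshot_date": "2024-03-01", "revenue": 12.0},
    {"customer_id": "c2", "snapshot_date": "2024-03-01", "revenue": None},
]
problems = validate_rows(
    sample,
    primary_key=("customer_id", "snapshot_date"),
    required=("customer_id", "revenue"),
)
```

Running a check like this on a sample extract is a cheap way to find out that the declared grain does not hold in the source data.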

Step 4: Define ownership and governance workflows

Ownership defined in the blueprint now gets operationalized. This step turns the ownership model into a set of working processes:

- Schema change approval: Who reviews proposed schema changes? What is the review timeline? What constitutes a breaking change that requires consumer notification?

- Quality incident response: Who is notified when an SLA is breached? What is the response SLA for acknowledgment and resolution?

- Consumer communication: How are consumers notified of upcoming changes: via a data catalog changelog, an email, or a Slack message to a dedicated channel?

In enterprise environments, these workflows should align with domain boundaries. The domain team that owns the data product is closest to both the source and the consumers; they are best positioned to make fast, informed decisions when something needs to change.

Step 5: Establish the data contract

The data contract is the formal commitment that makes a data product trustworthy. It takes the schema, SLA, and ownership decisions from earlier steps and packages them into a versioned document that both producer and consumer sign off on.

Before finalizing the contract, review it with at least one target consumer. During this review, ask:

- Does the schema match how you would actually query this product?

- Are any field names ambiguous or inconsistent with how you use these terms elsewhere?

- Is the freshness SLA sufficient for your use case?

- Are there fields you expected to be here that are missing?

This review often surfaces critical mismatches early: a grain that does not support the consumer's primary query, a field name that conflicts with the business glossary, or an SLA that is too loose for a real-time dashboard. Catching these at contract review costs an hour. Catching them after the build costs a sprint.

Once finalized, the contract is versioned and published alongside the schema in the data catalog, so it serves as a living reference for both producers and consumers.
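Versioning a contract depends on a clear rule for what counts as a breaking change. The sketch below encodes one possible policy, stated as an assumption: removing a field, changing a type, or making a required field nullable is breaking, and anything else (such as adding a field) is non-breaking. The function names and schema representation are hypothetical.

```python
def classify_change(old, new):
    """Compare two schemas given as {field_name: (dtype, nullable)}."""
    if old == new:
        return "none"
    for name, (dtype, nullable) in old.items():
        if name not in new:
            return "breaking"      # field removed
        new_dtype, new_nullable = new[name]
        if new_dtype != dtype:
            return "breaking"      # type changed
        if new_nullable and not nullable:
            return "breaking"      # consumers may now receive nulls
    return "non-breaking"          # for example, a field was added

def next_version(version, change):
    """Bump a MAJOR.MINOR.PATCH version according to the change class."""
    major, minor, _patch = (int(part) for part in version.split("."))
    if change == "breaking":
        return f"{major + 1}.0.0"
    if change == "non-breaking":
        return f"{major}.{minor + 1}.0"
    return version

v1 = {"order_id": ("string", False), "status": ("string", True)}
v2 = {**v1, "total_value": ("decimal", True)}   # adds a field
v3 = {"order_id": ("string", False)}            # drops "status"
```

With these inputs, moving from `v1` to `v2` bumps `1.0.0` to `1.1.0`, while moving from `v1` to `v3` bumps it to `2.0.0` and should trigger the longer notice period in the change policy.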

Step 6: Define SLAs and quality checks

The SLA values defined in the blueprint now need to be operationalized into monitoring and alerting. This step converts design decisions into enforcement mechanisms:

- Freshness check: A pipeline test that fails if the most recent record timestamp is older than the defined freshness window. For example, a dbt test that alerts if “max(updated_at)” is more than 2 hours behind the current time.

- Completeness check: A test that flags the product when the share of records with null values in required fields, such as “customer_id”, “order_date”, and “revenue”, exceeds the defined threshold.

- Availability monitoring: An uptime check on the data product's query interface or API endpoint, alerting if availability drops below the SLA target.

Tools like dbt tests, Great Expectations, and Monte Carlo can implement these checks in the pipeline directly. The key principle is that SLA enforcement is configured before the product goes live and not added reactively after the first consumer complaint.
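The logic behind the freshness and completeness checks is simple enough to sketch in plain Python. This is not the API of dbt, Great Expectations, or Monte Carlo; the function names and sample rows are hypothetical, and the thresholds reuse the example values from this guide.

```python
from datetime import datetime, timedelta, timezone

def is_fresh(latest_updated_at, max_staleness, now=None):
    """True if the newest record is within the freshness window."""
    now = now or datetime.now(timezone.utc)
    return now - latest_updated_at <= max_staleness

def null_rate(rows, field):
    """Share of rows where `field` is missing or None."""
    if not rows:
        return 0.0
    nulls = sum(1 for row in rows if row.get(field) is None)
    return nulls / len(rows)

def completeness_violations(rows, required_fields, max_null_rate):
    """Map each required field that exceeds the threshold to its null rate."""
    violations = {}
    for field in required_fields:
        rate = null_rate(rows, field)
        if rate > max_null_rate:
            violations[field] = rate
    return violations

# Hypothetical sample data for illustration.
now = datetime(2024, 3, 1, 12, 0, tzinfo=timezone.utc)
rows = [
    {"customer_id": "c1", "order_date": "2024-03-01", "revenue": 10.0},
    {"customer_id": None, "order_date": "2024-03-01", "revenue": 5.0},
]
fresh_enough = is_fresh(now - timedelta(minutes=90), timedelta(hours=2), now=now)
too_old = is_fresh(now - timedelta(hours=3), timedelta(hours=2), now=now)
violations = completeness_violations(
    rows, ["customer_id", "order_date", "revenue"], max_null_rate=0.001
)
```

Passing `now` explicitly keeps the freshness check deterministic in tests; in a scheduled pipeline it would default to the current UTC time.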

Step 7: Plan for discoverability and consumer onboarding

A data product that cannot be found is a data product that will not be used. This step ensures that discoverability and onboarding are built into the product at design time, not added as an afterthought after launch.

According to the 2026 Pulse of Change report published by Accenture, only 32% of organizations can effectively scale data-driven value, and the gap is almost always in accessibility, usability, and governance, not in technical quality. Discoverability and onboarding clarity are what close that gap, which is why they must be designed upfront rather than treated as post-launch tasks.

Catalog registration: Register the product with a complete metadata record before it goes live, including domain, subject area, owner, upstream lineage, sensitivity classification, and access method. Incomplete metadata entries are one of the most common reasons consumers skip a product in favor of rebuilding their own.
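A completeness gate on the metadata record can be as small as the sketch below. The key names mirror the items listed above but are otherwise assumptions; a real catalog would define its own field names.

```python
# Required metadata keys, mirroring the list above (names are assumed).
REQUIRED_METADATA = (
    "domain",
    "subject_area",
    "owner",
    "upstream_lineage",
    "sensitivity_classification",
    "access_method",
)

def missing_metadata(record):
    """Return required keys that are absent or empty in a catalog record."""
    return [key for key in REQUIRED_METADATA if not record.get(key)]

# Hypothetical draft record: classification is blank, access method not set.
draft_record = {
    "domain": "sales",
    "subject_area": "orders",
    "owner": "jane.doe@example.com",
    "upstream_lineage": ["billing.invoices"],
    "sensitivity_classification": "",
}
gaps = missing_metadata(draft_record)
```

Blocking go-live while `gaps` is non-empty is one way to make registration a design step rather than a post-launch task.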

Consumer-facing documentation: Write a README that answers the five questions every new consumer asks:

| Question | What to include |
| --- | --- |
| What is this product for? | Business purpose, primary use cases, consumer personas |
| What does it contain? | Key fields, grain, time range, and known limitations |
| How do I access it? | Interface (SQL/API/export), access request process, example query |
| How fresh is it? | Freshness SLA, last updated timestamp, update schedule |
| Who do I contact? | Data product owner name and contact method |

Onboarding path: Define whether new consumers access the product through a self-serve model (catalog discovery, then an access request, then immediate access) or a guided model (the consumer contacts the owner, followed by a scoping call and an onboarding session). The right model depends on the product's complexity and the consumer's technical sophistication.

Designing data products for usability and adoption

A data product can meet every technical requirement (properly contracted, clearly owned, SLA-backed) and still go unused if consumers cannot find it, understand it, or trust it. Usability is a design concern, not a post-launch enhancement. These three areas determine whether a technically sound product actually gets adopted.

1. Making data products easy to find

Discoverability starts with clear tagging and structured metadata. Domain labels, subject areas, consumer personas, and sensitivity tags help users quickly identify whether a data product is relevant to their needs.

Descriptions in the data catalog should use business-friendly language rather than source system terminology. Integrating a business glossary adds context, making it easier for non-technical users to interpret and trust what they are accessing.

2. Making data products easy to understand

Findability gets consumers to the product. Clarity determines whether they stay. Three elements make the difference:

Field-level descriptions should explain meaning in plain business terms. “is_churned” should say "True if the customer has had no activity in the past 90 days as of the snapshot_date," not "boolean flag from source." The description should answer the question a consumer would ask before using the field.

Sample datasets and example queries reduce the time to first value. A consumer who can see what the data looks like and run a working query in the first five minutes is far more likely to adopt the product than one who must explore the schema manually.

Trust signals, such as a "last certified" date, a visible data quality score, and a link to the data contract, reduce the hesitation that stops consumers from building on an unfamiliar product. Certification means a named owner has reviewed and confirmed the product meets its defined standards. Without that signal, consumers default to the safe option: rebuilding from scratch using sources they already know.

3. Driving adoption through standardization

Standardization creates consistency across data products. When contract structures, SLA formats, and metadata schemas follow the same pattern, users know what to expect regardless of the domain.

This consistency compounds over time. Each well-designed product reinforces trust in others, making reuse easier and scaling adoption across the organization.

Common data product design mistakes to avoid

Even well-intentioned teams miss critical design decisions that impact trust and adoption. These are not technical gaps but design failures that determine whether a data product gets used or ignored.

1. Designing for the producer, not the consumer

Many data products mirror the structure of source systems instead of reflecting how consumers use the data. This forces analysts and business users to spend time understanding schemas rather than using them.

Consumers either abandon the product or rebuild logic on top of it, which leads to inconsistent definitions and duplicated effort across teams.

2. Skipping the data contract

Without a formal data contract, there is no shared agreement on schema, semantics, or change policies.

The first schema change can silently break downstream use cases, often without clear ownership or communication. This erodes confidence and discourages reuse, especially in enterprise environments where dependencies are complex.

3. Leaving ownership undefined

When ownership is unclear, no one is accountable for quality issues, schema changes, or SLA breaches.

Without a defined owner, issues remain unresolved longer, and trust declines over time. Clear ownership is essential for maintaining reliability and ensuring that data products evolve in line with business needs.

4. Building without registering

A data product that is not registered in a data catalog is effectively invisible. Consumers cannot find an unregistered product; as a result, they often rebuild it. This leads to duplication and inconsistent metrics.

A 2024 Gartner press release predicts that 80% of data and analytics governance initiatives will fail by 2027. Products that are built but not governed, registered, or made discoverable are the kind of gap that feeds such failures.

Registration is not optional, and it is not a post-launch task. It is a step in the design process, completed before the product goes live.

5. Treating SLAs as aspirational rather than contractual

Without clear, measurable thresholds, reliability becomes subjective and difficult to enforce.

SLAs need to be specific, monitored, and tied to alerting mechanisms. When treated as contractual commitments rather than informal expectations, they create a foundation for trust and consistent usage across teams.

Conclusion

Usability and adoption are determined by design decisions made before the first row is written. When schema, ownership, contracts, SLAs, and metadata are clearly defined upfront, data products become reliable and reusable across the organization.

The shift from delivering datasets to designing data products is both operational and cultural. It requires standardization, clear ownership, and measurable expectations to ensure consistency and scalability across domains.

Strong data products are built on clear foundations. Consumer-aligned schema, defined ownership, formal contracts, enforceable SLAs, and discoverability are what drive trust and adoption.

Once designed and built, the focus shifts to lifecycle management, including monitoring, governance, and continuous improvement.

Platforms like OvalEdge help operationalize data product design through metadata management, data catalogs, lineage, and governance workflows.

Book a demo with OvalEdge to implement scalable, trusted data product design across your enterprise.

FAQs

1. What should be defined before building a data product?

Before building, teams must define schema, ownership, data contracts, SLAs, metadata, business context, and access models. These decisions create a clear blueprint that ensures the data product is reliable, discoverable, and usable across different teams and use cases.

2. Who is responsible for data product design?

A named data product owner is responsible for design decisions, including schema, contracts, and SLAs. This individual ensures accountability, manages changes, and aligns the product with business needs, typically within the domain that produces and uses the data.

3. What is a data contract in data product design?

A data contract is a formal agreement between producers and consumers that defines schema, field meaning, and change policies. It ensures stability, prevents unexpected breakages, and allows consumers to build downstream systems with confidence and predictable behavior.

4. How do SLAs improve data product reliability?

SLAs define measurable expectations for freshness, availability, and completeness. They help consumers rely on the data product for decision-making and create accountability through monitoring and alerts when performance or quality thresholds are not met.

5. How does data mesh influence data product design?

Data mesh promotes domain ownership of data products, with design decisions such as schema, contracts, and SLAs following shared standards across teams. This approach improves interoperability, scalability, and accountability, ensuring data products can be reused effectively across the organization.

6. What tools support data product design and metadata management?

Data catalogs and metadata platforms like OvalEdge help document schemas, manage lineage, and align business context. Tools such as dbt, Great Expectations, and Monte Carlo support testing, monitoring, and enforcing quality and SLA commitments defined during design.
