The 10 Best Data Masking Practices for Scalable and Compliant Data Protection

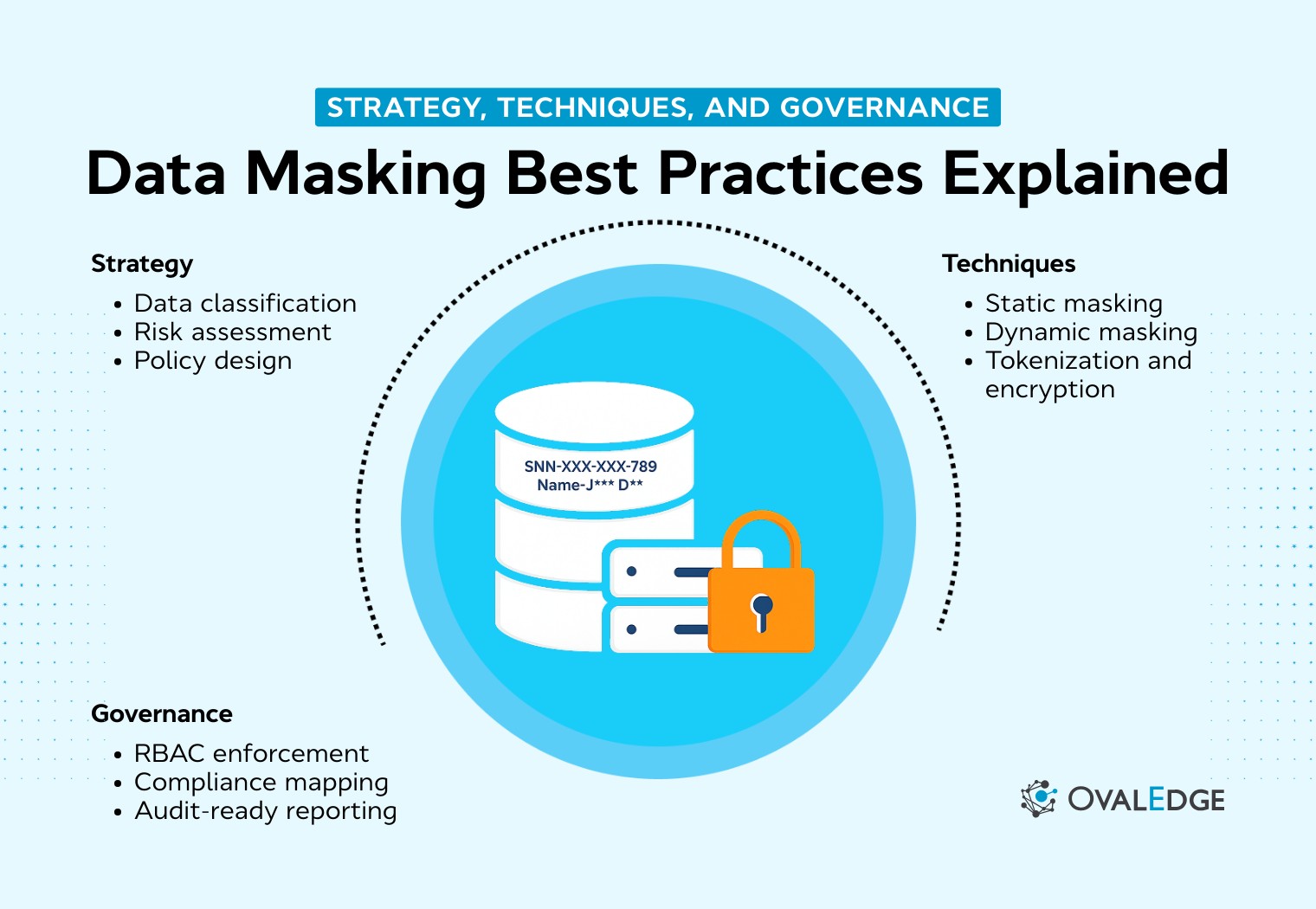

Data masking is no longer optional in modern enterprises where sensitive data moves across production and non-production systems. This guide outlines 10 operational best practices to help organizations implement scalable, policy-driven masking controls without disrupting business performance. It covers static and dynamic masking, referential integrity preservation, least privilege alignment, and audit-ready governance. The article also highlights common masking mistakes and explains how centralized platforms can operationalize discovery-driven protection. By embedding masking into governance workflows, organizations reduce exposure, strengthen compliance, and maintain data usability at scale.

The release was on track until someone realized the QA database contained live customer records, including full names, emails, and payment details. What started as a routine refresh quickly turned into a compliance review, internal escalation, and a scramble to document controls that should have been in place from the beginning.

Situations like this are more common than most organizations admit. The 2024 Verizon Data Breach Investigations Report highlights that the human element remains involved in the majority of breaches, underscoring how internal access and data handling practices create measurable risk.

At the same time, businesses cannot slow down. Developers need production-like datasets to test features accurately. Analytics teams require consistent identifiers. Compliance teams expect provable safeguards across every environment.

This operational tension is exactly where data masking best practices become essential. When structured correctly, masking reduces exposure, supports compliance, and preserves data usability.

In this guide, you will learn how to implement scalable masking controls that protect sensitive data without disrupting performance, governance, or innovation.

When organizations need data masking

In this section, we examine the operational and compliance-driven scenarios where structured data masking controls become essential. Masking is not just a technical enhancement. It becomes a foundational safeguard when sensitive data moves across systems, teams, and environments.

Organizations typically require data masking in four core situations.

Non-production data protection

Non-production environments consistently introduce exposure risk. Development, testing, staging, and QA systems often mirror production databases because teams need realistic data to validate functionality.

The problem is that these environments rarely maintain the same access controls, monitoring standards, or governance discipline as production systems.

Common risk patterns include:

- Full production database copies shared with vendors or contractors
- Test environments that lack strict access governance
- Replicated datasets stored across multiple sandboxes

The consequences are significant. Unauthorized personnel may gain access to real PII. Regulatory obligations may be violated simply because sensitive data was unnecessarily replicated.

In this context, structured masking becomes critical. Organizations typically apply:

- Static data masking for test data management
- Obfuscation techniques that preserve referential integrity while protecting sensitive fields

This approach ensures non-production data remains usable without exposing real identities.

Related reading: Best Data Masking Tools for Secure Data in 2026 explains why data masking is essential for protecting sensitive information while keeping data usable for testing and analytics.

Production access control

Masking is not limited to non-production environments. Even in live systems, not every user requires full visibility of sensitive information.

Role-Based Access Control creates differentiated access levels. For example:

- Customer support teams may need partial identifiers for verification
- Finance teams may require masked account numbers for reconciliation
- Operations staff may need contextual data without viewing full personal records

Dynamic masking enables real-time enforcement. Data is masked at query time based on the requesting user’s role, attributes, or access context.

Operational benefits include:

- Context-aware masking rules
- On-demand redaction of specific fields
- Conditional visibility aligned with job responsibilities

This ensures production usability while minimizing unnecessary exposure.

Also read: How to Ensure Data Privacy Compliance. This OvalEdge whitepaper explains how role-based access controls, policy enforcement, and data governance frameworks support secure production environments and regulatory compliance.

Regulatory compliance requirements

Regulatory frameworks explicitly require safeguards for sensitive data. Organizations handling personal, financial, or health information must demonstrate technical and operational controls that limit unnecessary exposure.

| Regulation | Core requirement | What it means for masking |
|---|---|---|
| GDPR | Data minimization and appropriate technical protections | Sensitive personal data should be masked in non-production and restricted in production unless strictly required |
| HIPAA | Protection of protected health information (PHI) | Health data must be masked or restricted to authorized personnel only |
| CCPA | Consumer data protection obligations | Organizations must prevent unauthorized access and overexposure of consumer information |

Compliance expectations typically include:

- Masking sensitive data in non-production environments
- Restricting unnecessary exposure in production systems
- Demonstrating provable access controls during audits

Failure to implement structured masking controls increases regulatory risk. Audit penalties, legal consequences, and reputational damage often follow inadequate safeguards.

Data masking plays a direct role in supporting defensible compliance by reducing exposure while maintaining operational continuity.

Insider risk and privilege abuse

Internal threats frequently arise from over-privileged access rather than malicious intent. When users are granted more visibility than required, risk increases.

Common exposure patterns include:

- Excessive access permissions
- Weak segregation of duties
- Accessing full customer records without a business necessity

Masking acts as a mitigation layer. It reduces visibility at the field level, even when broader system access exists.

Effective controls include:

- RBAC-based masking
- Conditional data visibility
- Access monitoring paired with masked outputs

By limiting exposure to only what is necessary, organizations reduce insider risk while preserving operational functionality.

10 operational data masking best practices for scalable protection

Effective data masking goes beyond obscuring sensitive fields. It requires a structured operating model that balances security, compliance, and usability across production and non-production systems.

The following best practices provide a scalable framework for implementing masking without disrupting business operations.

1. Discover and classify sensitive data before masking

Masking starts with visibility. You cannot protect what you have not identified.

Organizations should first discover sensitive fields across structured databases, warehouses, and even semi-structured repositories. Classification should then group data based on regulatory impact, financial exposure, and business sensitivity.

Prioritization matters. Not every column carries equal risk. Focus on:

- Personally identifiable information
- Financial identifiers
- Health or regulated records
- Authentication and credential data

Did you know: OvalEdge supports this discovery-driven approach by linking business glossary terms to physical data assets. When sensitive terms are defined at the governance layer, masking policies can be applied consistently across associated columns.
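As a rough illustration of the discovery step, the sketch below labels columns by combining name hints with a simple content check. The pattern lists, the 0.8 threshold, and the function names are assumptions made for this example; production discovery tools use far richer signals.

```python
import re

# Hypothetical name hints per sensitivity label; tune these for your own schemas.
NAME_HINTS = {
    "pii": re.compile(r"(name|email|phone|ssn|address|dob)", re.IGNORECASE),
    "financial": re.compile(r"(card|iban|account|routing)", re.IGNORECASE),
    "health": re.compile(r"(diagnosis|patient|icd|prescription)", re.IGNORECASE),
    "credential": re.compile(r"(password|passwd|secret|token|api_key)", re.IGNORECASE),
}

EMAIL_VALUE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def classify_column(column_name, sample_values):
    """Return the sensitivity labels suggested by the column name and sample values."""
    labels = {label for label, pattern in NAME_HINTS.items() if pattern.search(column_name)}
    # Content check: treat the column as PII if most non-empty samples look like emails.
    non_empty = [v for v in sample_values if v]
    if non_empty:
        email_hits = sum(1 for v in non_empty if EMAIL_VALUE.match(v))
        if email_hits / len(non_empty) >= 0.8:
            labels.add("pii")
    return labels


def classify_table(columns):
    """Map each column name to its labels, keeping only columns with at least one label."""
    result = {}
    for name, samples in columns.items():
        labels = classify_column(name, samples)
        if labels:
            result[name] = labels
    return result
```

The content check matters because column names are unreliable: a column called `contact` carries no name hint, yet its values may still be email addresses.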

2. Apply static masking to non-production data

Non-production environments represent one of the largest exposure surfaces in modern enterprises. Static masking permanently transforms sensitive data before it is copied to development, testing, or staging systems.

Key implementation principles include:

- Mask before copying, not after replication
- Ensure transformations are irreversible
- Maintain realistic formats for testing accuracy
- Automate masking during environment refresh cycles

Static masking should preserve functional integrity. Applications must behave the same way after masking. If test environments break, teams will bypass controls.

At scale, governance platforms that centralize policy definitions help ensure static masking rules are applied consistently across refresh workflows.
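A minimal sketch of static masking during a refresh, assuming rows arrive as dictionaries and that random substitution is acceptable for the columns shown. The rule mapping and column names are hypothetical. Random replacement keeps the format but cannot be reversed, because the original value is never used to derive the output.

```python
import secrets
import string


def mask_digits(value):
    """Replace every digit with a random digit, leaving separators and length intact."""
    return "".join(secrets.choice(string.digits) if ch.isdigit() else ch for ch in value)


def mask_email(_value):
    """Discard the original address and return a synthetic one on a reserved domain."""
    return f"user_{secrets.token_hex(6)}@example.com"


# Hypothetical column-to-rule mapping for a customers table.
MASKING_RULES = {"email": mask_email, "phone": mask_digits, "card_number": mask_digits}


def mask_rows(rows, rules=MASKING_RULES):
    """Yield masked copies of rows; columns without a rule pass through unchanged."""
    for row in rows:
        yield {
            col: (rules[col](val) if col in rules and val is not None else val)
            for col, val in row.items()
        }
```

Because the output is random, this approach does not keep identifiers consistent across tables. Columns used in joins need a deterministic method instead.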

3. Implement dynamic masking for role-based visibility

Even in production, not every user requires full data visibility. Dynamic masking applies protection at query time based on roles, attributes, or contextual policies.

Operational best practices include:

- Mapping masking rules directly to role-based access control
- Allowing partial visibility where business workflows require verification
- Monitoring role changes and entitlement updates
- Minimizing disruption to production performance

This approach reduces insider risk while maintaining operational continuity. It is especially valuable in customer service, finance, and analytics functions where partial identifiers are sufficient.
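To make the idea concrete, here is a simplified sketch of role-based masking applied when a row is read. In practice this logic usually lives in the database or a governance layer rather than application code. The roles, columns, and visibility levels below are invented for illustration.

```python
# Hypothetical role-to-visibility policy: "full", "partial", or "hidden" per column.
POLICY = {
    "support": {"email": "partial", "card_number": "partial"},
    "finance": {"email": "hidden", "card_number": "partial"},
    "admin": {"email": "full", "card_number": "full"},
}


def partial(value, visible=4):
    """Keep the last `visible` characters and replace the rest with asterisks."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


def apply_policy(row, role, sensitive_columns=("email", "card_number")):
    """Return a view of `row` for `role`; unknown roles and unlisted columns default to hidden."""
    rules = POLICY.get(role, {})
    view = {}
    for column, value in row.items():
        if column not in sensitive_columns:
            view[column] = value
            continue
        level = rules.get(column, "hidden")
        if level == "full":
            view[column] = value
        elif level == "partial":
            view[column] = partial(str(value))
        else:
            view[column] = None
    return view
```

The default of "hidden" for unknown roles is a deliberate choice in this sketch: a missing policy entry should reduce visibility, not grant it.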

Column-level security

OvalEdge enables column-level security policies that align masking logic with user roles. By centralizing Column Security Policies, organizations gain visibility into how sensitive fields are exposed across departments.

4. Preserve referential integrity across related datasets

One of the most common masking failures occurs when relationships between tables are broken. Referential integrity must be preserved to ensure joins, constraints, and downstream reporting remain accurate.

Best practices include:

- Maintaining consistency between primary and foreign keys
- Using deterministic masking where consistency is required
- Validating relationships after transformation
- Testing dependent systems and reporting layers

Deterministic masking ensures identical input values generate the same masked output, which supports analytics and operational workflows without exposing raw identifiers.
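One common way to get this behavior is a keyed hash. The sketch below uses HMAC-SHA256 from the Python standard library; the table shapes, the 16-character truncation, and the helper names are assumptions for the example. The same key must be used for every table that shares the identifier.

```python
import hashlib
import hmac


def pseudonymize(value, key, length=16):
    """Derive a stable token from `value`; the same key and input always give the same token."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:length]


def mask_key_column(rows, column, key):
    """Return copies of `rows` with `column` replaced by its pseudonym."""
    return [{**row, column: pseudonymize(row[column], key)} for row in rows]


def orphaned_keys(child_rows, parent_rows, column):
    """Return child key values that no longer match any parent row."""
    parent_keys = {row[column] for row in parent_rows}
    return {row[column] for row in child_rows} - parent_keys
```

Running `orphaned_keys` on the masked parent and child tables is a quick way to validate relationships after transformation: an empty set means every foreign key still resolves. Truncating the digest shortens the token but raises collision risk, so very large key spaces may need the full digest.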

5. Avoid over-masking that reduces data utility

Security controls should not cripple usability. Over-masking often drives shadow data practices, where teams extract ungoverned copies simply to complete their work.

To strike the right balance:

- Mask only fields classified as sensitive
- Define clear thresholds for partial versus full masking
- Engage business stakeholders in decision-making
- Preserve analytical structure and statistical distribution

The goal is to protect identity while maintaining data realism and business value.

6. Align masking policies with least privilege principles

Masking should reinforce your least-privilege model, not operate independently of it. When masking is disconnected from access control, organizations either overexpose sensitive data or create operational friction that drives workarounds.

Strong alignment requires:

- Mapping masking rules directly to access control frameworks
- Limiting visibility to job-specific requirements
- Reviewing user roles and entitlements regularly
- Enforcing separation of duties across critical functions

Centrally governed masking policies significantly improve oversight compared to manual or system-by-system implementations. A structured governance layer, such as the Business Glossary in OvalEdge, allows organizations to define sensitive terms once and associate them consistently with physical data assets across systems.

These governance definitions can then be enforced through OvalEdge Data Security, where column-level masking rules are applied based on user roles and access policies.

This centralized enforcement model reduces inconsistent application of controls and ensures masking remains tightly aligned with least privilege principles across departments.

7. Test masked data thoroughly before release

Masked data must be validated before release into downstream environments. Without testing, subtle issues can undermine analytics or system performance.

Testing should include:

- Functional validation of applications
- Reporting accuracy checks
- Performance benchmarking
- Verification against internal security standards

Testing ensures masking enhances protection without degrading reliability.
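Some of these checks can be automated as part of the refresh pipeline. The following sketch covers three basic ones: row counts, leakage of original values, and key uniqueness. It assumes both datasets fit in memory as lists of dictionaries, and the function name is hypothetical. Functional and performance testing still need their own tooling.

```python
def validate_masking(original_rows, masked_rows, sensitive_columns, key_column):
    """Return a list of human-readable problems; an empty list means all checks passed."""
    problems = []
    if len(original_rows) != len(masked_rows):
        problems.append(f"row count changed: {len(original_rows)} -> {len(masked_rows)}")
    for column in sensitive_columns:
        originals = {row[column] for row in original_rows if row[column] is not None}
        leaked = originals & {row[column] for row in masked_rows}
        if leaked:
            problems.append(f"{column}: {len(leaked)} original value(s) still present")
    # The number of distinct keys should survive masking so joins and constraints still hold.
    original_keys = {row[key_column] for row in original_rows}
    masked_keys = {row[key_column] for row in masked_rows}
    if len(original_keys) != len(masked_keys):
        problems.append(f"{key_column}: distinct count changed")
    return problems
```

A refresh job can fail the release whenever the returned list is non-empty, which keeps unvalidated data out of downstream environments.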

8. Continuously monitor and review masking policies

Data environments evolve constantly. New columns appear. Schemas change. Regulations update. Without ongoing oversight, masking policies drift.

Continuous review should include:

- Auditing masking coverage across systems
- Detecting newly introduced sensitive fields
- Updating policies after structural changes
- Tracking exceptions and remediation timelines

Centralized governance dashboards improve visibility across hybrid and multi-cloud environments. Maintaining oversight prevents control gaps from emerging over time.
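A coverage audit can be as simple as comparing what is classified as sensitive against what has a masking policy. This sketch assumes both inventories are available as dictionaries keyed by `(table, column)`; the structure and names are illustrative, not taken from any specific platform.

```python
def audit_coverage(catalog, policies):
    """Compare classified columns against masking policies and report gaps.

    catalog:  {(table, column): set of sensitivity labels}
    policies: {(table, column): masking scheme name}
    """
    sensitive = {col for col, labels in catalog.items() if labels}
    covered = sensitive & policies.keys()
    unmasked = sorted(sensitive - policies.keys())
    # A stale policy points at a column that no longer exists in the catalog.
    stale = sorted(policies.keys() - catalog.keys())
    coverage = len(covered) / len(sensitive) if sensitive else 1.0
    return {"coverage": coverage, "unmasked": unmasked, "stale_policies": stale}
```

Run on a schedule, the `unmasked` list surfaces newly introduced sensitive fields, and `stale_policies` flags rules left behind by schema changes.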

9. Document masking rules for audit and compliance

Documentation transforms masking from a technical feature into a defensible control. Organizations should maintain:

- Centralized records of masking logic
- Policy ownership and approval history
- Evidence for audit trails
- Alignment between governance standards and technical enforcement

Unified governance for audit readiness

OvalEdge supports documentation through its unified governance layer, where business glossary definitions, data lineage, and column security policies can be viewed together. This strengthens audit readiness by linking policy intent to actual implementation.

10. Use deterministic masking where consistency is required

Certain use cases demand stable identifiers across environments and systems. Deterministic masking ensures the same input always generates the same masked output.

This approach supports:

- Cross-system joins
- Analytics that depend on consistent identifiers
- Replicated datasets across environments
- Controlled integration with downstream applications

However, deterministic methods must be implemented with strong key management to reduce re-identification risk.
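The sketch below shows two small key-handling habits for a keyed deterministic method: refusing to run without a properly sized key, and tagging each token with a key version so rotation stays traceable. The environment variable name, the 32-byte minimum, and the version prefix are assumptions for this example. A real deployment would typically fetch the key from a secrets manager.

```python
import hashlib
import hmac
import os


def load_masking_key(env_var="MASKING_KEY_HEX"):
    """Read a hex-encoded key from the environment; fail instead of using a default key."""
    raw = os.environ.get(env_var)
    if not raw:
        raise RuntimeError(f"{env_var} is not set; refusing to mask with a default key")
    key = bytes.fromhex(raw)
    if len(key) < 32:
        raise ValueError("masking key must be at least 32 bytes")
    return key


def token(value, key, key_version):
    """Prefix the token with the key version so outputs from different keys are distinguishable."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]
    return f"{key_version}:{digest}"
```

Anyone holding the key can recompute tokens for guessed inputs, so the key should not be stored in the non-production environments that receive the masked data.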

Common data masking mistakes to avoid

Even strong masking strategies fail when execution is inconsistent. These mistakes frequently weaken otherwise solid controls.

- Ignoring non-production environments: Masking production while leaving test or staging copies unprotected creates significant exposure. Replicated datasets often carry the same sensitive data with weaker safeguards.
- Breaking referential integrity: Inconsistent masking across related tables disrupts joins and reporting accuracy. When identifiers do not align, teams lose trust in masked data.
- Using weak or reversible techniques: Poor masking methods increase re-identification risk. If masked values can be inferred, the protection is ineffective.
- Misaligning masking with RBAC: Masking that does not align with role-based access control either overexposes data or blocks legitimate work. Least privilege must guide visibility.
- Treating masking as a one-time effort: Data ecosystems change constantly. Without periodic review, masking policies drift and gaps emerge.

How modern platforms operationalize data masking

Modern governance platforms transform data masking from isolated scripts into a scalable operating model. Instead of managing controls system by system, organizations connect discovery, classification, policy definition, and enforcement into a unified framework.

The objective is consistency. Masking should not depend on individual teams or manual processes. It should operate as a governed, repeatable control embedded into enterprise workflows.

Connecting data discovery to automated masking workflows

Scalable masking begins with discovery.

In a mature operating model:

- Discovery and classification tags directly inform masking decisions
- Sensitive fields are automatically identified and labeled
- Policies trigger masking actions in the appropriate environment

When discovery feeds policy engines, masking becomes proactive rather than reactive. New sensitive fields can inherit predefined policies instead of waiting for manual intervention.
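Policy inheritance of this kind can be pictured as a lookup from classification tags to masking schemes. The sketch below is a simplified model with made-up tag names, scheme names, and precedence order. It is not how any particular platform implements it.

```python
# Hypothetical tag-to-policy table; the scheme names are illustrative only.
TAG_POLICIES = {
    "credential": "mask_all",
    "financial": "show_last_4",
    "pii": "mask_alphanumeric",
}
# Most restrictive first, so a column with several tags gets the strictest scheme.
PRECEDENCE = ["mask_all", "mask_alphanumeric", "show_last_4"]


def resolve_policy(tags):
    """Return the strictest scheme implied by `tags`, or None if no tag has a policy."""
    schemes = {TAG_POLICIES[tag] for tag in tags if tag in TAG_POLICIES}
    for scheme in PRECEDENCE:
        if scheme in schemes:
            return scheme
    return None


def assign_policies(classified_columns):
    """Map each classified column to a scheme; untagged columns are left out."""
    assigned = {}
    for column, tags in classified_columns.items():
        scheme = resolve_policy(tags)
        if scheme is not None:
            assigned[column] = scheme
    return assigned
```

With this shape, a newly discovered column only needs a tag. The scheme follows from the table, so no one has to write a column-specific rule.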

This integration reduces human error and ensures that protection scales as data environments expand.

Centralizing masking governance across cloud and on-prem systems

Fragmentation is one of the largest barriers to effective masking. Different business units often operate across multiple platforms, clouds, and legacy systems.

Centralized governance enables organizations to:

- Enforce consistent masking policies across environments
- Maintain unified dashboards for coverage, exceptions, and compliance status
- Monitor data movement across hybrid and multi-cloud infrastructures

A centralized model prevents policy drift and ensures uniform enforcement across departments and technologies.

Supporting secure test data management at scale

Non-production environments require structured oversight as data volumes grow.

At scale, modern platforms enable:

- Automated masking during refresh and provisioning cycles
- Deterministic masking to maintain stable identifiers across systems
- Reduced manual approvals and security escalations

This approach allows development and analytics teams to work with realistic datasets while minimizing exposure. By embedding masking directly into environment provisioning workflows, organizations protect sensitive data without slowing delivery timelines.

Enabling policy-driven masking with OvalEdge

Operationalizing masking requires more than isolated configurations. It demands centralized governance, standardized masking schemes, and role-aligned enforcement.

OvalEdge provides a structured approach to policy-driven masking through configurable security controls and unified visibility.

- A practical starting point is configurable masking schemes. As outlined in OvalEdge Security Settings, predefined options such as “mask all,” “mask alphanumeric,” “show first 4,” “show last 4,” and “show blank” allow organizations to standardize how sensitive fields are displayed. This ensures consistency across systems instead of relying on manual transformations.
- Standardization, however, must be supported by governance. Masking policies can also be governed through structured metadata. According to OvalEdge Data Security, term-based policies defined within the business glossary can be associated with physical columns. This linkage connects classification directly to enforcement, reducing the risk of inconsistent application across business units.
- Enforcement is further strengthened through role-based controls. As described in Introduction to Security, access and masking policies align with user roles, reinforcing least privilege principles rather than ad hoc permissions.

The operational value of this model is consistency. Centralized policy definition reduces one-off scripts, minimizes enforcement gaps between teams, and strengthens audit evidence by linking governance definitions to technical controls.
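To show what standardized schemes like those named above might look like in code, here is one possible interpretation written as plain Python functions. The behavior is an assumption based only on the scheme names. It is not OvalEdge's implementation, and the product's actual output format may differ.

```python
def mask_all(value):
    """Replace every character, including punctuation."""
    return "x" * len(value)


def mask_alphanumeric(value):
    """Replace letters and digits but keep separators such as '-' or '@'."""
    return "".join("x" if ch.isalnum() else ch for ch in value)


def show_first_4(value):
    """Keep the first four characters; shorter values are returned unchanged in this sketch."""
    return value[:4] + "x" * max(len(value) - 4, 0)


def show_last_4(value):
    """Keep the last four characters; shorter values are returned unchanged in this sketch."""
    hidden = max(len(value) - 4, 0)
    return "x" * hidden + value[hidden:]


def show_blank(_value):
    """Return an empty string regardless of input."""
    return ""


# Registry keyed by scheme name so policies can refer to schemes as plain strings.
SCHEMES = {
    "mask all": mask_all,
    "mask alphanumeric": mask_alphanumeric,
    "show first 4": show_first_4,
    "show last 4": show_last_4,
    "show blank": show_blank,
}


def apply_scheme(name, value):
    """Look up a scheme by name and apply it; an unknown name raises KeyError."""
    return SCHEMES[name](value)
```

Keeping schemes in a single registry is what makes them standard: every system that displays a card number with "show last 4" produces the same shape of output.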

Conclusion

Strong data masking is a governance discipline, not just a technical control. It succeeds when teams adopt a structured operating model that begins with discovery and classification, applies appropriate masking methods across environments, preserves usability, and validates controls through monitoring and documentation.

Evaluate whether sensitive data is clearly identified in both production and non-production systems, whether masking rules remain consistent across business units, and whether audit evidence demonstrates ongoing enforcement and review.

The next steps are straightforward. Assess masking coverage, address governance gaps, and implement automated oversight. OvalEdge supports discovery-driven masking and centralized governance controls that strengthen consistency and audit readiness.

Book a demo with OvalEdge to see how a unified governance platform can operationalize secure, scalable, and policy-driven data masking across your enterprise.

FAQs

1. How do data masking best practices differ from data encryption?

Data masking alters data to limit exposure in specific contexts such as testing or role-based access. Encryption protects data at rest or in transit. Masking prioritizes usability with protection, while encryption focuses on secure storage and transmission.

2. What industries require strict data masking compliance?

Industries handling regulated data require strong masking controls. Healthcare must protect patient data under HIPAA. Financial services must secure account information. Retail and technology firms must safeguard customer data under GDPR and CCPA obligations.

3. How do data masking best practices support DevOps workflows?

Data masking enables secure, production-like datasets for development and testing without exposing sensitive information. It supports faster release cycles by allowing teams to work with realistic data while maintaining compliance and internal security standards.

4. Can data masking impact analytic accuracy?

Improper masking can distort datasets if techniques alter key relationships or statistical patterns. Strong masking practices preserve referential integrity and analytical structure so reporting, forecasting, and machine learning models remain reliable.

5. What are the key metrics to evaluate data masking effectiveness?

Organizations should measure masking coverage across sensitive fields, role-based access accuracy, compliance alignment, performance impact, and frequency of policy violations. Monitoring these metrics ensures masking controls remain effective and scalable.

6. How often should organizations review their data masking policies?

Organizations should review masking policies whenever new systems, data sources, or regulations emerge. Regular quarterly or biannual assessments help ensure masking rules remain aligned with evolving schemas, access roles, and compliance requirements.
